Theorem. Let $n$ be a positive integer and let $A$ be an $n\times n$ matrix. Then, $A$ is symmetric if and only if it is orthogonally diagonalizable.

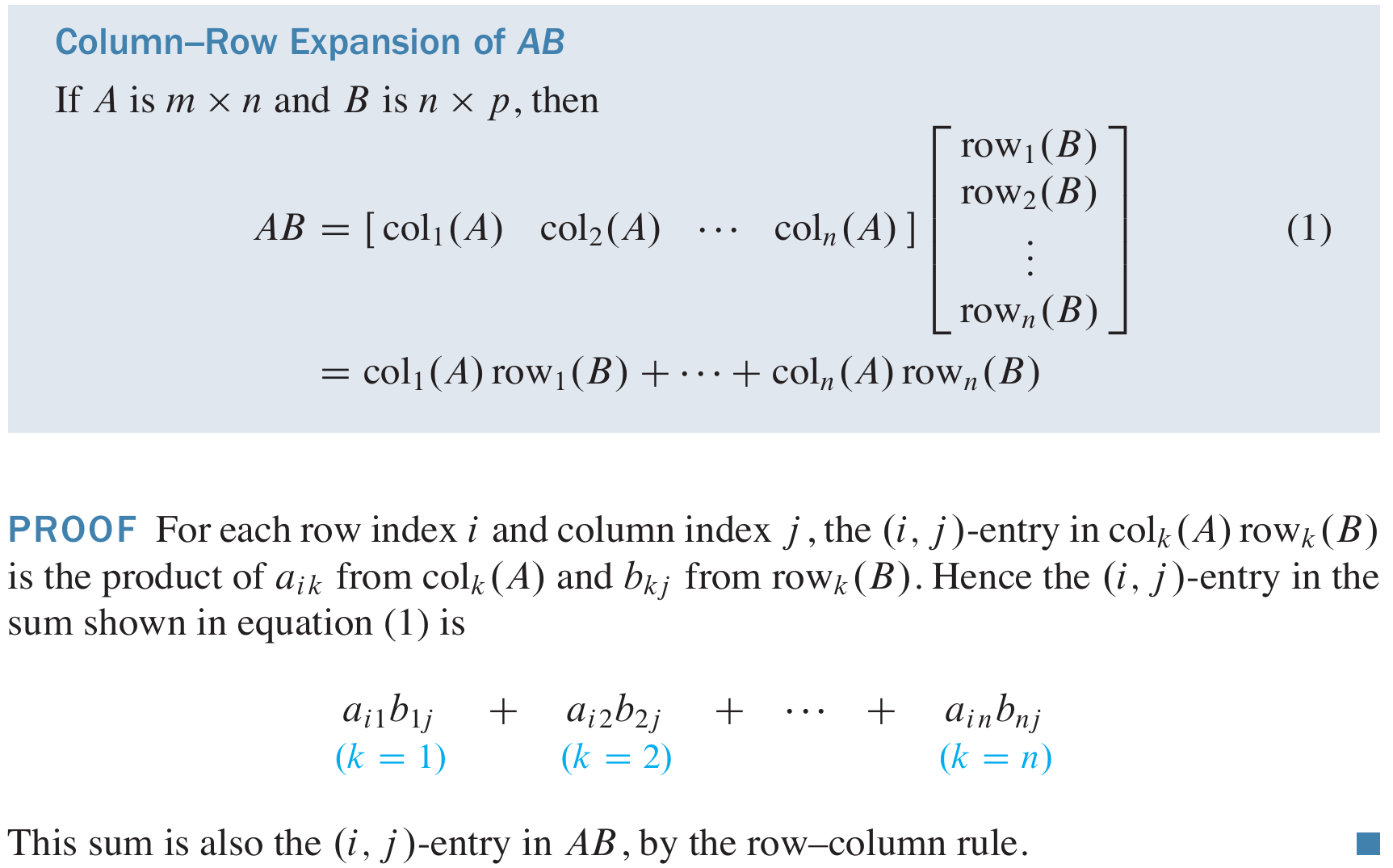

Today I want to present a consequence of the Singular Value Decomposition of a matrix that is not presented in the textbook. But, before that I want to recall a matrix factorization presented in Section 2.5 of the textbook in Theorem 10: Column-Row Expansion of a Matrix Product.

This proof is concise. To make it easier for you to internalize it, I will elaborate on it below.

- Let us first introduce the concept of the outer product of two vectors. Let \(m, n \in \mathbb{N}\), and let \(\mathbf{x} \in \mathbb{R}^m\) and \(\mathbf{y} \in \mathbb{R}^n\). That is, \[ \mathbf{x} = \begin{bmatrix} x_1 \\ \vdots \\ x_m \end{bmatrix}, \quad \mathbf{y} = \begin{bmatrix} y_1 \\ \vdots \\ y_n \end{bmatrix}, \quad \text{where} \quad x_1, \ldots, x_m, y_1, \ldots, y_n \in \mathbb{R}. \] Recall that \(\mathbf{x}\) is an \(m \times 1\) matrix, while \(\mathbf{y}^\top\) is a \(1 \times n\) matrix, making the product \(\mathbf{x} \mathbf{y}^\top\) a well-defined \(m \times n\) matrix. The \(m \times n\) matrix \(\mathbf{x} \mathbf{y}^\top\) is called the outer product of the vectors \(\mathbf{x}\) and \(\mathbf{y}\). Specifically, \[ \mathbf{x} \mathbf{y}^\top = \mathbf{x} \bigl[ y_1 \ \cdots \ y_n \bigr] = \bigl[ y_1 \mathbf{x} \ \cdots \ y_n \mathbf{x} \bigr] = \begin{bmatrix} x_1 y_1 & \cdots & x_1 y_n \\ \vdots & \ddots & \vdots \\ x_m y_1 & \cdots & x_m y_n \end{bmatrix} = \begin{bmatrix} x_1 \mathbf{y}^\top \\ \vdots \\ x_m \mathbf{y}^\top \end{bmatrix}. \]

- Assume that the vectors \(\mathbf{x}\) and \(\mathbf{y}\) are nonzero vectors. Then we have \[ \operatorname{Col}\bigl(\mathbf{x} \mathbf{y}^\top\bigr) = \operatorname{Span}\{\mathbf{x}\} \quad \text{and} \quad \operatorname{Row}\bigl(\mathbf{x} \mathbf{y}^\top\bigr) = \operatorname{Span}\{\mathbf{y}\}. \] Consequently, the rank of \(\mathbf{x} \mathbf{y}^\top\) is \(1\). Conversely, every matrix of rank \(1\) is an outer product.

- In this item I will give a slightly different proof of the Column-Row Expansion of a Matrix Product Theorem. Let \(l,m,n \in \mathbb{N}\), let \(A\) be an \(m\times l\) matrix and let \(B\) be an \(l\times n\) matrix. Let \(i\in \{1,\ldots,m\}\), \(k\in\{1,\ldots,l\}\) and \(j\in\{1,\ldots,n\}\). Denote by \(a_{ik}\) the entry in \(i\)-th row and \(k\)-th column of \(A\) and denote by \(b_{kj}\) the entry in \(k\)-th row and \(j\)-th column of \(B\). Set \[ \operatorname{col}_k(A) = \mathbf{a}_k = \begin{bmatrix} a_{1k} \\ \vdots \\ a_{mk} \end{bmatrix}, \quad \operatorname{col}_j(B) = \begin{bmatrix} b_{1j} \\ \vdots \\ b_{lj} \end{bmatrix}, \quad \operatorname{row}_k(B) = \Bigl[ b_{k1} \ \cdots \ b_{kn} \Bigr]. \] Then \begin{align*} AB & = A \Bigl[ \operatorname{col}_1(B) \ \cdots \ \operatorname{col}_n(B) \Bigr] \\[7pt] & = \Bigl[ A \operatorname{col}_1(B) \ \cdots \ A \operatorname{col}_n(B) \Bigr] \\[7pt] & = \left[ A \begin{bmatrix} b_{11} \\ \vdots \\ b_{l1} \end{bmatrix} \ \phantom{\begin{array}{c} \vdots \\ \vdots \\ \vdots \end{array}}\mkern-10mu \cdots \ \ A \begin{bmatrix} b_{1n} \\ \vdots \\ b_{ln} \end{bmatrix} \right] \\[7pt] & = \Biggl[ \sum_{k=1}^l b_{k1} \mathbf{a}_k \ \ \cdots \ \ \sum_{k=1}^l b_{kn} \mathbf{a}_k \Biggr] \\[7pt] & =\sum_{k=1}^l \biggl[ b_{k1} \mathbf{a}_k \ \ \cdots \ \ b_{kn} \mathbf{a}_k \biggr] \\[7pt] & =\sum_{k=1}^l \mathbf{a}_k \Bigl[ b_{k1} \ \ \cdots \ \ b_{kn} \Bigr] \\[7pt] & =\sum_{k=1}^l \operatorname{col}_k(A) \operatorname{row}_k(B) \\[7pt] & = \operatorname{col}_1(A) \operatorname{row}_1(B) + \cdots + \operatorname{col}_l(A) \operatorname{row}_l(B). \end{align*}

- In the sum \[ \operatorname{col}_1(A) \operatorname{row}_1(B) + \cdots + \operatorname{col}_l(A) \operatorname{row}_l(B) \] we can drop the terms that are equal to the zero matrix. Each remaining term is an outer product of two nonzero vectors, so in this way we have written the product \(AB\) as a sum of rank-one matrices.
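The column-row expansion can also be checked numerically. The following is a minimal sketch in plain Python; the two small matrices and the helper names `matmul`, `outer` and `column_row_expansion` are illustrative choices, not something taken from the textbook.

```python
# Column-row expansion: AB equals the sum of the outer products col_k(A) row_k(B).

def matmul(A, B):
    """Ordinary row-by-column matrix product of nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]

def outer(x, y):
    """Outer product x y^T of two vectors given as flat lists."""
    return [[xi * yj for yj in y] for xi in x]

def column_row_expansion(A, B):
    """Sum over k of col_k(A) row_k(B), built one rank-one term at a time."""
    m, l, n = len(A), len(B), len(B[0])
    total = [[0] * n for _ in range(m)]
    for k in range(l):
        col_k = [A[i][k] for i in range(m)]
        term = outer(col_k, B[k])  # B[k] is row_k(B)
        total = [[total[i][j] + term[i][j] for j in range(n)] for i in range(m)]
    return total

# Illustrative matrices (an assumption for this sketch): A is 2x3, B is 3x2.
A = [[1, 2, 0],
     [3, -1, 4]]
B = [[2, 1],
     [0, 5],
     [-3, 2]]

product = matmul(A, B)
expansion = column_row_expansion(A, B)
print(product == expansion)
```

Both computations use exact integer arithmetic, so the two results can be compared with `==`.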

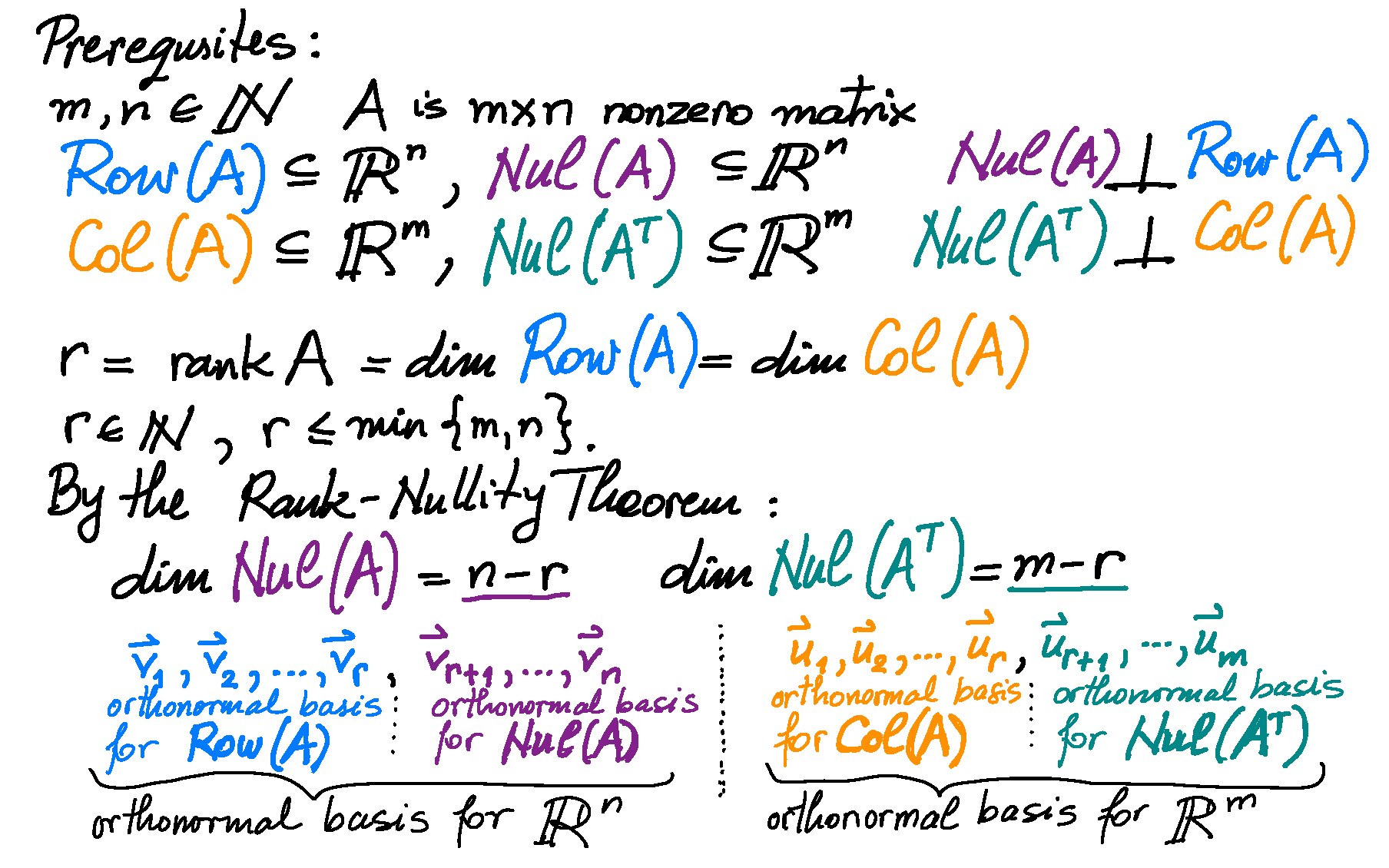

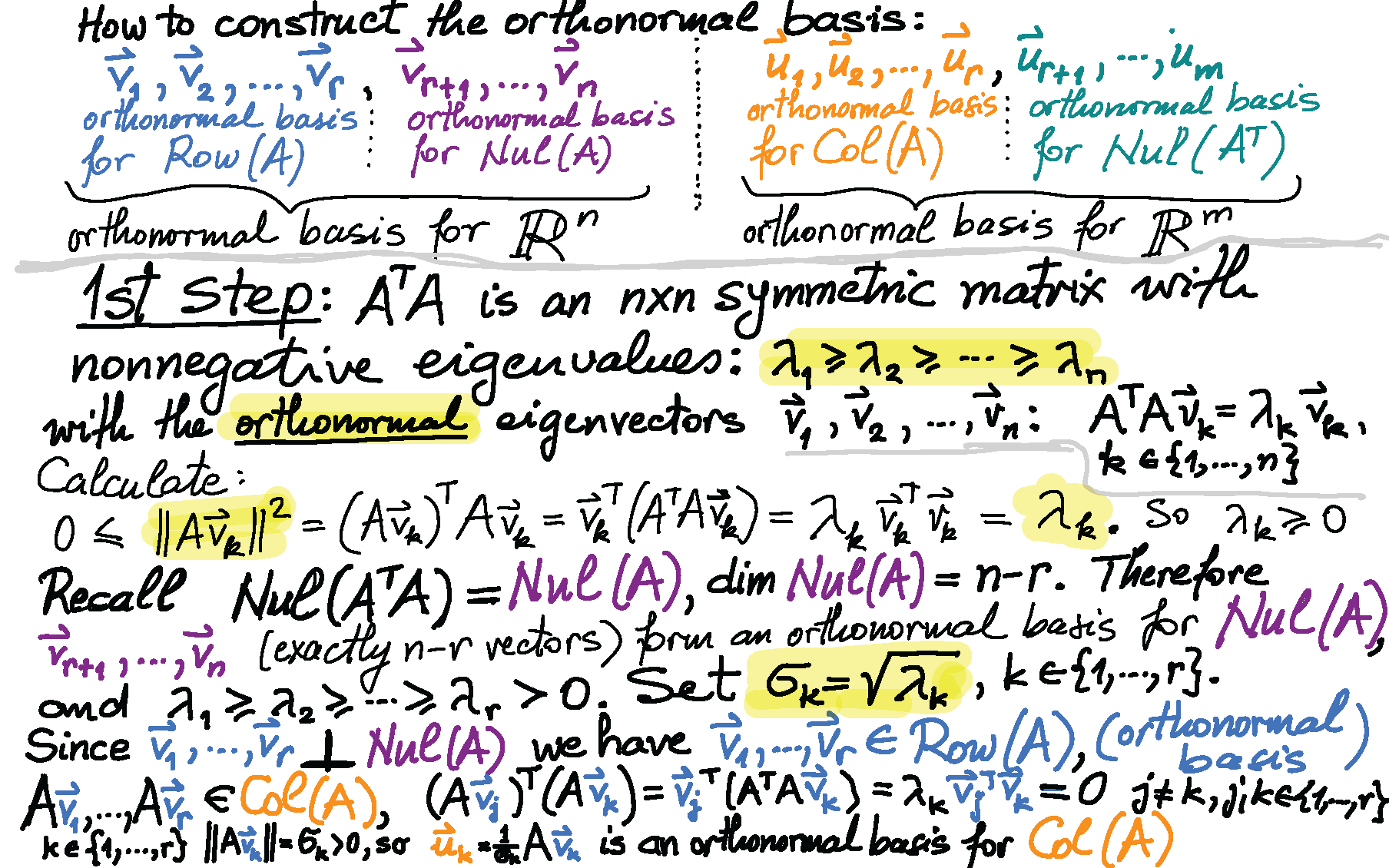

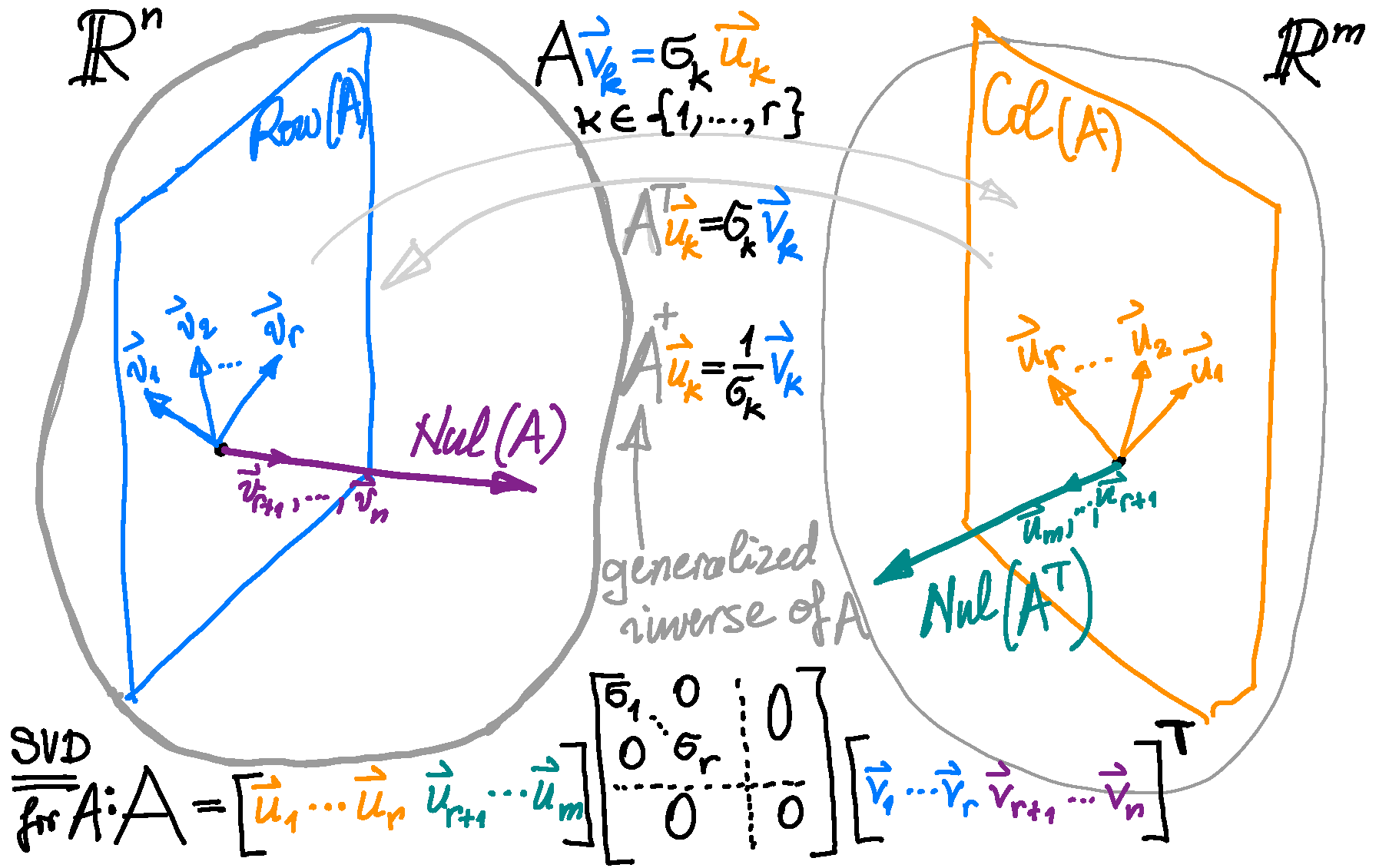

- Next we apply the column-row expansion of a product of two matrices to a reduced singular value decomposition of a nonzero \(m\times n\) matrix \(A\) of rank \(r \in \bigl\{1,\ldots,\min\{m,n\}\bigr\}\), which we studied yesterday: \begin{align*} A & = U_r D_r V_r^\top \\ & = \Bigl[ \mathbf{u}_1 \ \cdots \ \mathbf{u}_r \Bigr] \begin{bmatrix} \sigma_1 & \cdots & 0 \\ \vdots & \ddots & \vdots \\ 0 & \cdots & \sigma_r \end{bmatrix} \begin{bmatrix} \mathbf{v}_1^\top \\ \vdots \\ \mathbf{v}_r^\top \end{bmatrix} \\ & = \Bigl[ \sigma_1 \mathbf{u}_1 \ \cdots \ \sigma_r \mathbf{u}_r \Bigr] \begin{bmatrix} \mathbf{v}_1^\top \\ \vdots \\ \mathbf{v}_r^\top \end{bmatrix} \\ & = \sigma_1 \mathbf{u}_1 \mathbf{v}_1^\top + \cdots + \sigma_r \mathbf{u}_r \mathbf{v}_r^\top. \end{align*} Here \(\{\mathbf{v}_1, \ldots, \mathbf{v}_r\}\) is an orthonormal basis of \(\operatorname{Row}(A)\), \(\{\mathbf{u}_1, \ldots, \mathbf{u}_r\}\) is an orthonormal basis of \(\operatorname{Col}(A)\), and \(\sigma_1 \geq \sigma_2 \geq \cdots \geq \sigma_r \gt 0\). In this way we have expressed the matrix \(A\) as a linear combination of rank-one matrices (which are outer products of left and right singular vectors).

- Today we discussed Example 7 in Section 7.4 about reduced SVD and the Pseudoinverse (Moore-Penrose inverse).

Example 2. Here is a calculation of a singular value decomposition of the matrix

\[

A = \left[\!\begin{array}{rrr}

3 & -1 & 1 \\

-1 & 3 & 1 \\

1 & 1 & 1 \\

1 & 1 & 1 \end{array}\right].

\]

- (I) To find the singular values and right singular vectors we calculate the matrix \[ A^\top \!A = \left[\!\begin{array}{rrrr} 3 & -1 & 1 & 1 \\ -1 & 3 & 1 & 1 \\ 1 & 1 & 1 & 1 \end{array}\right] \left[\!\begin{array}{rrr} 3 & -1 & 1 \\ -1 & 3 & 1 \\ 1 & 1 & 1 \\ 1 & 1 & 1 \end{array}\right] = \left[\!\begin{array}{rrr} 12 & -4 & 4 \\ -4 & 12 & 4 \\ 4 & 4 & 4 \end{array}\right] = 4 \left[\!\begin{array}{rrr} 3 & -1 & 1 \\ -1 & 3 & 1 \\ 1 & 1 & 1 \end{array}\right]. \] Observe that adding the first two columns and subtracting twice the third column gives the zero vector. Hence $\lambda_3 = 0$ is an eigenvalue of $A^\top\!A$ and a corresponding eigenvector is $\bigl[ -1 \ -1 \ \ 2 \bigr]^\top$. Since each row of $A^\top\!A$ sums to $12$, $\lambda_2 = 12$ is an eigenvalue of $A^\top\!A$ and a corresponding eigenvector is $\bigl[ 1 \ \ 1 \ \ 1 \bigr]^\top$. Since the vector $\bigl[ 1 \ -1 \ \ 0 \bigr]^\top$ is orthogonal to both earlier found eigenvectors it also must be an eigenvector of $A^\top\!A$. The corresponding eigenvalue is $\lambda_1 = 16$. Thus the singular values of $A$ are $\sigma_1 = 4$ and $\sigma_2 = 2\sqrt{3}$, and the matrices $\Sigma$ and $V$ are as follows \[ \Sigma = \left[\!\begin{array}{rrr} 4 & 0 & 0 \\ 0 & 2\sqrt{3} & 0 \\ 0 & 0 & 0 \\ 0 & 0 & 0 \end{array}\right] \qquad V = \left[\!\begin{array}{rrr} \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & -\frac{1}{\sqrt{6}} \\ -\frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & -\frac{1}{\sqrt{6}} \\ 0 & \frac{1}{\sqrt{3}} & \frac{2}{\sqrt{6}} \end{array}\right] = \bigl[ \mathbf{v}_1 \ \mathbf{v}_2 \ \mathbf{v}_3 \bigr]. \]
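The three eigenpairs found in step (I) can be confirmed with exact integer arithmetic. This is only a sketch in plain Python; the helper names `transpose`, `matmul` and `matvec` are my own.

```python
# Check the eigenpairs of A^T A claimed in step (I), using integers only.

A = [[3, -1, 1],
     [-1, 3, 1],
     [1, 1, 1],
     [1, 1, 1]]

def transpose(M):
    return [list(row) for row in zip(*M)]

def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]

def matvec(M, v):
    return [sum(m * x for m, x in zip(row, v)) for row in M]

AtA = matmul(transpose(A), A)

# (eigenvalue, unnormalized eigenvector) pairs from the text
eigenpairs = [(16, [1, -1, 0]), (12, [1, 1, 1]), (0, [-1, -1, 2])]

# For each pair, test whether (A^T A) v equals lambda v entry by entry.
checks = [matvec(AtA, v) == [lam * x for x in v] for lam, v in eigenpairs]
print(AtA, checks)
```

Working with the unnormalized eigenvectors avoids square roots, so no rounding is involved.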

- (II) To find a $4\!\times\!4$ orthogonal matrix $U$ we first normalize vectors \[ A \left[\!\begin{array}{r} 1 \\ -1 \\ 0 \end{array}\right] = \left[\!\begin{array}{rrr} 3 & -1 & 1 \\ -1 & 3 & 1 \\ 1 & 1 & 1 \\ 1 & 1 & 1 \end{array}\right] \left[\!\begin{array}{r} 1 \\ -1 \\ 0 \end{array}\right] = \left[\!\begin{array}{r} 4 \\ -4 \\ 0 \\ 0 \end{array}\right] = 4 \left[\!\begin{array}{r} 1 \\ -1 \\ 0 \\ 0 \end{array}\right], \quad \text{hence} \quad \mathbf{u}_1 = \left[\!\begin{array}{r} \frac{1}{\sqrt{2}} \\ -\frac{1}{\sqrt{2}} \\ 0 \\ 0 \end{array}\right], \] and \[ A \left[\!\begin{array}{r} 1 \\ 1 \\ 1 \end{array}\right] = \left[\!\begin{array}{rrr} 3 & -1 & 1 \\ -1 & 3 & 1 \\ 1 & 1 & 1 \\ 1 & 1 & 1 \end{array}\right] \left[\!\begin{array}{r} 1 \\ 1 \\ 1 \end{array}\right] = \left[\!\begin{array}{r} 3 \\ 3 \\ 3 \\ 3 \end{array}\right] = 3 \left[\!\begin{array}{r} 1 \\ 1 \\ 1 \\ 1 \end{array}\right], \quad \text{hence} \quad \mathbf{u}_2 = \left[\!\begin{array}{r} \frac{1}{2} \\ \frac{1}{2} \\ \frac{1}{2} \\ \frac{1}{2} \end{array}\right]. \] From the general considerations about the singular value decomposition we know that the singular values and left and right singular vectors must satisfy: $A\mathbf{v}_1 = \sigma_1 \mathbf{u}_1$ and $A\mathbf{v}_2 = \sigma_2 \mathbf{u}_2$. 
Next we verify these equalities: \[ \left[\!\begin{array}{rrr} 3 & -1 & 1 \\ -1 & 3 & 1 \\ 1 & 1 & 1 \\ 1 & 1 & 1 \end{array}\right] \left[\!\begin{array}{r} \frac{1}{\sqrt{2}} \\ -\frac{1}{\sqrt{2}} \\ 0 \end{array}\right] = 4 \left[\!\begin{array}{r} \frac{1}{\sqrt{2}} \\ -\frac{1}{\sqrt{2}} \\ 0 \\ 0 \end{array}\right] \quad \text{and} \quad \left[\!\begin{array}{rrr} 3 & -1 & 1 \\ -1 & 3 & 1 \\ 1 & 1 & 1 \\ 1 & 1 & 1 \end{array}\right] \left[\!\begin{array}{r} \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \end{array}\right] = 2\sqrt{3} \left[\!\begin{array}{r} \frac{1}{2} \\ \frac{1}{2} \\ \frac{1}{2} \\ \frac{1}{2} \end{array}\right] \] It has been established in class that $\mathbf{u}_1$ and $\mathbf{u}_2$ form an orthonormal basis for $\operatorname{Col}A$.

- (III) To complete the matrix $U$ we need to extend $\{\mathbf{u}_1, \mathbf{u}_2\}$ to an orthonormal basis for $\mathbb{R}^4$. Since the space $\operatorname{Nul}\bigl(A^\top\bigr)$ is the orthogonal complement of $\operatorname{Col}A$, we can simply find the nullspace of $A^\top$, and then find two orthonormal vectors in $\operatorname{Nul}\bigl(A^\top\bigr).$ Here we go: \[ \textstyle \left[\!\begin{array}{rrrr} 3 & -1 & 1 & 1 \\ -1 & 3 & 1 & 1 \\ 1 & 1 & 1 & 1 \end{array}\right] \sim \left[\!\begin{array}{rrrr} 1 & 1 & 1 & 1 \\ 0 & 4 & 2 & 2 \\ 0 & -4 & -2 & -2 \end{array}\right] \sim \left[\!\begin{array}{rrrr} 1 & 1 & 1 & 1 \\ 0 & 1 & 1/2 & 1/2 \\ 0 & 0 & 0 & 0 \end{array}\right] \sim \left[\!\begin{array}{rrrr} 1 & 0 & 1/2 & 1/2 \\ 0 & 1 & 1/2 & 1/2 \\ 0 & 0 & 0 & 0 \end{array}\right] \] Thus, \[ \operatorname{Nul}\bigl(A^\top\bigr) = \left\{ s \left[\!\begin{array}{r} -1 \\ -1 \\ 0 \\ 2 \end{array}\right] + t \left[\!\begin{array}{r} -1 \\ -1 \\ 2 \\ 0 \end{array}\right] \ : \ s, t \in \mathbb{R} \right\}. \] All the vectors in $\operatorname{Nul}\bigl(A^\top\bigr)$ are orthogonal to $\mathbf{u}_1$ and $\mathbf{u}_2$ (verify this). There are many pairs of orthonormal vectors in $\operatorname{Nul}\bigl(A^\top\bigr).$ One pair that caught my attention is obtained by taking $s=1/2$, $t=1/2$ and $s=1/2$, $t=-1/2$, and then normalizing the resulting vectors. That is the pair \[ \mathbf{u}_3 = \left[\!\begin{array}{r} -\frac{1}{2} \\ - \frac{1}{2} \\ \frac{1}{2} \\ \frac{1}{2} \end{array}\right] \quad \text{and} \quad \mathbf{u}_4 = \left[\!\begin{array}{c} 0 \\ 0 \\ -\frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} \end{array}\right] \] Finally, \[ U = \left[\!\begin{array}{rrrr} \frac{1}{\sqrt{2}} & \frac{1}{2} & -\frac{1}{2} & 0 \\ -\frac{1}{\sqrt{2}} & \frac{1}{2} & -\frac{1}{2} & 0 \\ 0 & \frac{1}{2} & \frac{1}{2} & -\frac{1}{\sqrt{2}} \\ 0 & \frac{1}{2} & \frac{1}{2} & \frac{1}{\sqrt{2}} \end{array}\right]. \]
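The "verify this" above can be done by hand, but here is a small floating-point sketch in plain Python that checks two things: that $\mathbf{u}_3$ and $\mathbf{u}_4$ lie in $\operatorname{Nul}\bigl(A^\top\bigr)$, and that the four columns of $U$ are orthonormal. The variable names and the tolerance `1e-12` are assumptions of this sketch.

```python
import math

A = [[3, -1, 1],
     [-1, 3, 1],
     [1, 1, 1],
     [1, 1, 1]]

h = 1 / math.sqrt(2)
# Columns u1, u2, u3, u4 of U as given in steps (II) and (III)
u = [
    [h, -h, 0.0, 0.0],
    [0.5, 0.5, 0.5, 0.5],
    [-0.5, -0.5, 0.5, 0.5],
    [0.0, 0.0, -h, h],
]

def dot(x, y):
    return sum(a * b for a, b in zip(x, y))

# A^T w is the vector of dot products of w with the columns of A.
columns_of_A = [list(col) for col in zip(*A)]
At_u3 = [dot(col, u[2]) for col in columns_of_A]
At_u4 = [dot(col, u[3]) for col in columns_of_A]

# Gram matrix of the columns of U; it should be the 4x4 identity.
gram = [[dot(u[i], u[j]) for j in range(4)] for i in range(4)]
gram_error = max(abs(gram[i][j] - (1.0 if i == j else 0.0))
                 for i in range(4) for j in range(4))
print(At_u3, At_u4, gram_error)
```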

- Remark To find vectors $\mathbf{u}_3$ and $ \mathbf{u}_4$ it might be slightly more efficient to proceed in the following way. Since we know that $\mathbf{u}_1$ and $ \mathbf{u}_2$ form a basis for $\operatorname{Col} A$ we can find a basis for $(\operatorname{Col} A)^{\perp}$ by solving the system \[ \left[\!\begin{array}{rrrr} 1 & -1 & 0 & 0 \\ 1 & 1 & 1 & 1 \end{array}\right] \left[\!\begin{array}{c} x_1 \\ x_2 \\ x_3 \\ x_4 \end{array}\right] = \left[\!\begin{array}{c} 0 \\ 0 \end{array}\right] \] The row reduction of the matrix \[ \left[\!\begin{array}{rrrr} 1 & -1 & 0 & 0 \\ 1 & 1 & 1 & 1 \end{array}\right] \sim \cdots \sim \left[\!\begin{array}{rrrr} 1 & 0 & 1/2 & 1/2 \\ 0 & 1 & 1/2 & 1/2 \end{array}\right] \] might be simpler than the row reduction that we did in (III).

- To celebrate our work we verify \[ \left[\!\begin{array}{rrr} 3 & -1 & 1 \\ -1 & 3 & 1 \\ 1 & 1 & 1 \\ 1 & 1 & 1 \end{array}\right] = \left[\!\begin{array}{rrrr} \frac{1}{\sqrt{2}} & \frac{1}{2} & -\frac{1}{2} & 0 \\ -\frac{1}{\sqrt{2}} & \frac{1}{2} & -\frac{1}{2} & 0 \\ 0 & \frac{1}{2} & \frac{1}{2} & -\frac{1}{\sqrt{2}} \\ 0 & \frac{1}{2} & \frac{1}{2} & \frac{1}{\sqrt{2}} \end{array}\right] \left[\!\begin{array}{rrr} 4 & 0 & 0 \\ 0 & 2\sqrt{3} & 0 \\ 0 & 0 & 0 \\ 0 & 0 & 0 \end{array}\right] \left[\!\begin{array}{rrr} \frac{1}{\sqrt{2}} & -\frac{1}{\sqrt{2}} & 0 \\ \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{3}} \\ -\frac{1}{\sqrt{6}} & -\frac{1}{\sqrt{6}} & \frac{2}{\sqrt{6}} \end{array}\right] . \]

- The reduced Singular Value Decomposition of $A$ is (we drop the vectors that belong to the nullspaces of $A$ and $A^\top$ and the zero rows and columns of $\Sigma$) \[ \left[\!\begin{array}{rrr} 3 & -1 & 1 \\ -1 & 3 & 1 \\ 1 & 1 & 1 \\ 1 & 1 & 1 \end{array}\right] = \left[\!\begin{array}{rr} \frac{1}{\sqrt{2}} & \frac{1}{2} \\ -\frac{1}{\sqrt{2}} & \frac{1}{2} \\ 0 & \frac{1}{2} \\ 0 & \frac{1}{2} \end{array}\right] \left[\!\begin{array}{rr} 4 & 0 \\ 0 & 2\sqrt{3} \end{array}\right] \left[\!\begin{array}{rrr} \frac{1}{\sqrt{2}} & -\frac{1}{\sqrt{2}} & 0 \\ \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{3}} \end{array}\right] . \]
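The reduced decomposition is the same statement as $A = \sigma_1 \mathbf{u}_1 \mathbf{v}_1^\top + \sigma_2 \mathbf{u}_2 \mathbf{v}_2^\top$. A minimal floating-point sketch of this rank-one sum in plain Python follows; the names `T1`, `T2`, `reconstructed` and `max_error` are illustrative.

```python
import math

# Singular values and singular vectors of A from Example 2
s1, s2 = 4.0, 2.0 * math.sqrt(3.0)
u1 = [1 / math.sqrt(2), -1 / math.sqrt(2), 0.0, 0.0]
u2 = [0.5, 0.5, 0.5, 0.5]
v1 = [1 / math.sqrt(2), -1 / math.sqrt(2), 0.0]
v2 = [1 / math.sqrt(3)] * 3

def outer(x, y):
    """Outer product x y^T as a nested list."""
    return [[xi * yj for yj in y] for xi in x]

T1 = outer(u1, v1)  # rank-one matrix u1 v1^T
T2 = outer(u2, v2)  # rank-one matrix u2 v2^T
reconstructed = [[s1 * T1[i][j] + s2 * T2[i][j] for j in range(3)]
                 for i in range(4)]

A = [[3, -1, 1], [-1, 3, 1], [1, 1, 1], [1, 1, 1]]
max_error = max(abs(reconstructed[i][j] - A[i][j])
                for i in range(4) for j in range(3))
print(max_error)
```

The error is not exactly zero because of rounding in the square roots, which is why the comparison is made up to a small tolerance rather than with `==`.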

- The pseudoinverse of $A$ is \begin{align*} A^+ & = \left[\!\begin{array}{rr} \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} \\ -\frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} \\ 0 & \frac{1}{\sqrt{3}} \end{array}\right] \left[\!\begin{array}{cc} \frac{1}{4} & 0 \\ 0 & \frac{1}{2\sqrt{3}} \end{array}\right] \left[\!\begin{array}{rrrr} \frac{1}{\sqrt{2}} & -\frac{1}{\sqrt{2}} & 0 & 0 \\ \frac{1}{2} & \frac{1}{2} & \frac{1}{2} & \frac{1}{2} \end{array}\right] \\ & = \left[\!\begin{array}{rrrr} \frac{5}{24} & -\frac{1}{24} & \frac{1}{12} & \frac{1}{12} \\ -\frac{1}{24} & \frac{5}{24} & \frac{1}{12} & \frac{1}{12} \\ \frac{1}{12} & \frac{1}{12} & \frac{1}{12} & \frac{1}{12} \end{array}\right] \\ & = \frac{1}{24} \left[\!\begin{array}{rrrr} 5 & -1 & 2 & 2 \\ -1 & 5 & 2 & 2 \\ 2 & 2 & 2 & 2 \end{array}\right] \end{align*}

The following four properties make the pseudoinverse unique and special:

- The matrix $A^+A$ is symmetric.

- The matrix $AA^+$ is symmetric.

- $AA^+A = A$

- $A^+AA^+ = A^+$
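For the matrix $A$ of Example 2 and the pseudoinverse $A^+$ computed there, the four properties can be checked exactly with rational arithmetic. This is a sketch using Python's standard `fractions` module; the dictionary `conditions` and the helper names are my own.

```python
from fractions import Fraction

A = [[3, -1, 1],
     [-1, 3, 1],
     [1, 1, 1],
     [1, 1, 1]]

# A^+ = (1/24) [[5,-1,2,2],[-1,5,2,2],[2,2,2,2]] from Example 2
A_plus = [[Fraction(e, 24) for e in row]
          for row in [[5, -1, 2, 2], [-1, 5, 2, 2], [2, 2, 2, 2]]]

def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]

def transpose(M):
    return [list(row) for row in zip(*M)]

ApA = matmul(A_plus, A)  # 3x3 matrix A^+ A
AAp = matmul(A, A_plus)  # 4x4 matrix A A^+

conditions = {
    "A+A is symmetric": ApA == transpose(ApA),
    "AA+ is symmetric": AAp == transpose(AAp),
    "AA+A = A": matmul(AAp, A) == A,
    "A+AA+ = A+": matmul(ApA, A_plus) == A_plus,
}
print(conditions)
```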

- Suggested problems for Section 7.4: 3, 7, 11, 13, 14, 15, 17, 18, 19 (Notice a mistake in Practice Problem 2.)

- I post my notes on the Singular Value Decomposition that I wrote in GoodNotes on my iPad, trying to replicate what I did on the blackboard.

I believe that colors and pictures will help you internalize the process of the construction of the Singular Value Decomposition.

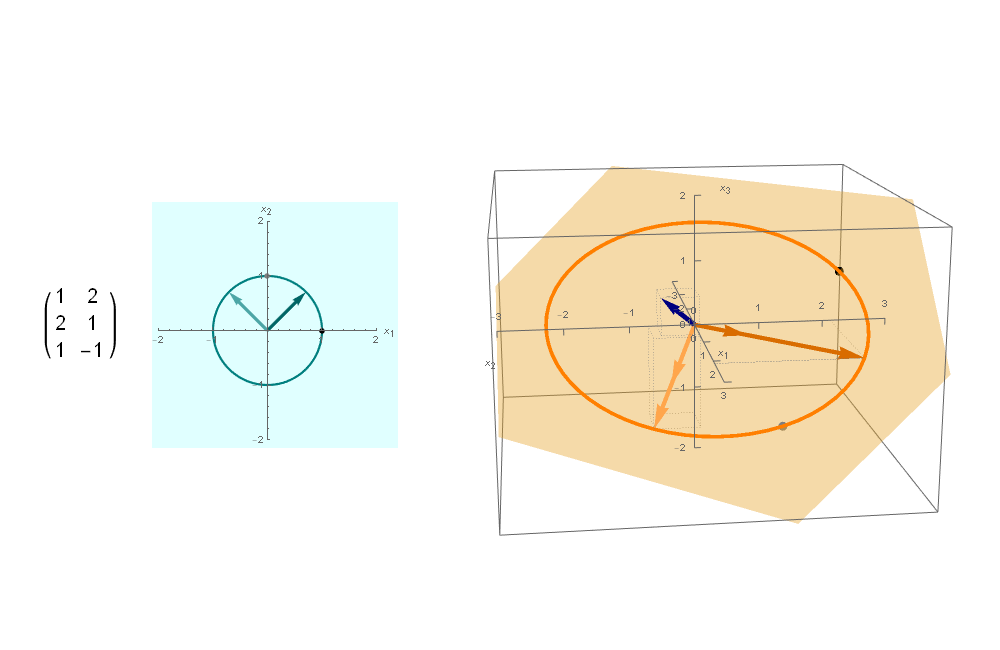

Example 1. As the first example consider the $3\!\times\!2$ matrix

\[

A = \left[\begin{array}{rr}

1 & 2 \\

2 & 1 \\

1 & -1

\end{array}\right].

\]

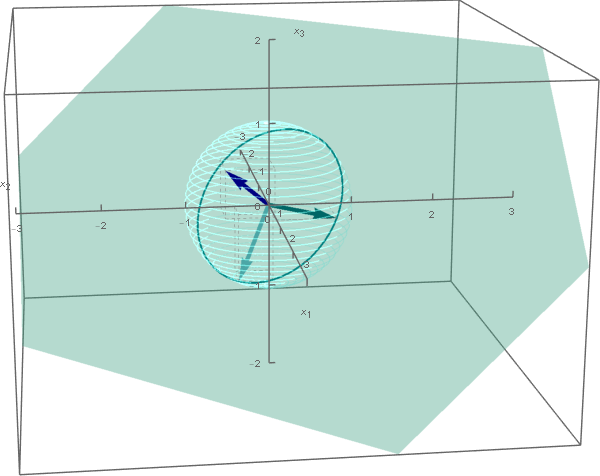

- To find a Singular Value Decomposition of the $3\!\times 2$ matrix $A$ given above, we are looking for a $3\!\times\!3$ orthogonal matrix $U$, the $3\!\times\!2$ matrix $\Sigma$ with the singular values of $A$ on the "diagonal", and a $2\!\times\!2$ orthogonal matrix $V$, such that $A = U\Sigma V^\top$.

- (I) To find the singular values and right singular vectors of $A$ we calculate the symmetric matrix \[ A^\top A = \left[\begin{array}{rrr} 1 & 2 & 1 \\ 2 & 1 & -1 \end{array}\right] \left[\begin{array}{rr} 1 & 2 \\ 2 & 1 \\ 1 & -1 \end{array}\right] = \left[\begin{array}{rr}6 & 3 \\ 3 & 6 \end{array}\right]. \] The eigenvalues of this matrix $A^\top A$ in nonincreasing order are $9$ and $3.$ The orthogonal diagonalization of the matrix $A^\top A$ is \[ A^\top A = \left[\begin{array}{rr}6 & 3 \\ 3 & 6 \end{array}\right] = \left[\begin{array}{rr} \frac{1}{\sqrt{2}} & -\frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} &\frac{1}{\sqrt{2}} \end{array}\right] \left[\!\begin{array}{cc} 9 & 0\\ 0 & 3 \end{array}\right] \left[\begin{array}{rr} \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{2}} \\ - \frac{1}{\sqrt{2}} &\frac{1}{\sqrt{2}} \end{array}\right]. \] Here we found the matrix $V$ in the singular value decomposition \[ V = \left[\begin{array}{rr} \frac{1}{\sqrt{2}} & -\frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} &\frac{1}{\sqrt{2}} \end{array}\right]. \] The singular values of $A$ are $3$ and $\sqrt{3}.$ The matrix $\Sigma$ is \[ \Sigma = \left[\!\begin{array}{cc} 3 & 0\\ 0 & \sqrt{3} \\ 0 & 0 \end{array}\right] \]

- (II) To find a $3\!\times\!3$ orthogonal matrix $U$, we rewrite the equality $A = U \Sigma V^\top$ as \[ A V = U \Sigma. \] It is easier to see what is going on in the above equality if we write \[ V = \bigl[ \mathbf{v}_1 \ \mathbf{v}_2 \bigr], \quad U = \bigl[ \mathbf{u}_1 \ \mathbf{u}_2 \ \mathbf{u}_3 \bigr], \quad \Sigma = \left[\!\begin{array}{cc} \sigma_1 & 0\\ 0 & \sigma_2 \\ 0 & 0 \end{array}\right] \] Thus, \[ A V = \bigl[ A \mathbf{v}_1 \ A \mathbf{v}_2 \bigr] \] and \[ U \Sigma = \bigl[ \mathbf{u}_1 \ \mathbf{u}_2 \ \mathbf{u}_3 \bigr] \left[\!\begin{array}{cc} \sigma_1 & 0\\ 0 & \sigma_2 \\ 0 & 0 \end{array}\right] = \bigl[ \sigma_1 \mathbf{u}_1 \ \sigma_2 \mathbf{u}_2 \bigr]. \] Hence \[ A \mathbf{v}_1 = \sigma_1 \mathbf{u}_1, \qquad A \mathbf{v}_2 = \sigma_2 \mathbf{u}_2. \] Therefore, \[ \mathbf{u}_1 = \frac{1}{\sigma_1} A \mathbf{v}_1 = \frac{1}{3} \left[\begin{array}{rr} 1 & 2 \\ 2 & 1 \\ 1 & -1 \end{array}\right] \left[\begin{array}{c} \frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} \end{array}\right] = \left[\begin{array}{c} \frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} \\ 0 \end{array}\right] \] and \[ \mathbf{u}_2 = \frac{1}{\sigma_2} A \mathbf{v}_2 = \frac{1}{\sqrt{3}} \left[\begin{array}{rr} 1 & 2 \\ 2 & 1 \\ 1 & -1 \end{array}\right] \left[\begin{array}{c} -\frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} \end{array}\right] = \left[\begin{array}{c} \frac{1}{\sqrt{6}} \\ -\frac{1}{\sqrt{6}} \\ -\frac{2}{\sqrt{6}} \end{array}\right]. \] Thus, we have the first two columns of $U$. Those two columns form an orthonormal basis for the column space of $A$.

- (III) The next step is to find the remaining column of $U.$ Since the first two columns of $U$ form an orthonormal basis for $\operatorname{Col}(A)$, the remaining column of $U$ will be a unit vector in $\operatorname{Nul}(A^\top).$ That vector is \[ \mathbf{u}_3 = \left[\!\begin{array}{r} \frac{1}{\sqrt{3}} \\ -\frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \end{array}\right]. \] Finally we have the complete $3\!\times\!3$ matrix $U$ \[ U = \left[\!\begin{array}{ccc} \frac{1}{\sqrt{2}} &\frac{1}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{2}} & - \frac{1}{\sqrt{6}} & - \frac{1}{\sqrt{3}} \\ 0 & -\frac{2}{\sqrt{6}} & \frac{1}{\sqrt{3}} \end{array}\right]. \]

- To celebrate our work we verify \[ A = \left[\begin{array}{rr} 1 & 2 \\ 2 & 1 \\ 1 & -1 \end{array}\right] = \left[\!\begin{array}{ccc} \frac{1}{\sqrt{2}} &\frac{1}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{2}} & - \frac{1}{\sqrt{6}} & - \frac{1}{\sqrt{3}} \\ 0 & -\frac{2}{\sqrt{6}} & \frac{1}{\sqrt{3}} \end{array}\right] \left[\!\begin{array}{cc} 3 & 0\\ 0 & \sqrt{3} \\ 0 & 0 \end{array}\right] \left[\begin{array}{rr} \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{2}} \\ -\frac{1}{\sqrt{2}} &\frac{1}{\sqrt{2}} \end{array}\right]. \] Or, equivalently, what is easier $AV = U\Sigma$: \[ \left[\begin{array}{rr} 1 & 2 \\ 2 & 1 \\ 1 & -1 \end{array}\right] \left[\begin{array}{rr} \frac{1}{\sqrt{2}} & -\frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} &\frac{1}{\sqrt{2}} \end{array}\right] = \left[\!\begin{array}{ccc} \frac{1}{\sqrt{2}} &\frac{1}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{2}} & - \frac{1}{\sqrt{6}} & - \frac{1}{\sqrt{3}} \\ 0 & -\frac{2}{\sqrt{6}} & \frac{1}{\sqrt{3}} \end{array}\right] \left[\!\begin{array}{cc} 3 & 0\\ 0 & \sqrt{3} \\ 0 & 0 \end{array}\right]. \]
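The same verification can be done numerically. The following plain-Python sketch checks $AV = U\Sigma$ and $U^\top U = I$ for Example 1 in floating point; the helper `max_abs_diff` and the variable names are assumptions of the sketch.

```python
import math

r2, r3, r6 = math.sqrt(2), math.sqrt(3), math.sqrt(6)

A = [[1, 2], [2, 1], [1, -1]]
V = [[1 / r2, -1 / r2],
     [1 / r2, 1 / r2]]
U = [[1 / r2, 1 / r6, 1 / r3],
     [1 / r2, -1 / r6, -1 / r3],
     [0.0, -2 / r6, 1 / r3]]
Sigma = [[3.0, 0.0],
         [0.0, r3],
         [0.0, 0.0]]

def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]

def transpose(M):
    return [list(row) for row in zip(*M)]

def max_abs_diff(X, Y):
    """Largest entrywise difference between two matrices of equal shape."""
    return max(abs(a - b) for rx, ry in zip(X, Y) for a, b in zip(rx, ry))

identity3 = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
err_factorization = max_abs_diff(matmul(A, V), matmul(U, Sigma))
err_orthogonal = max_abs_diff(matmul(transpose(U), U), identity3)
print(err_factorization, err_orthogonal)
```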

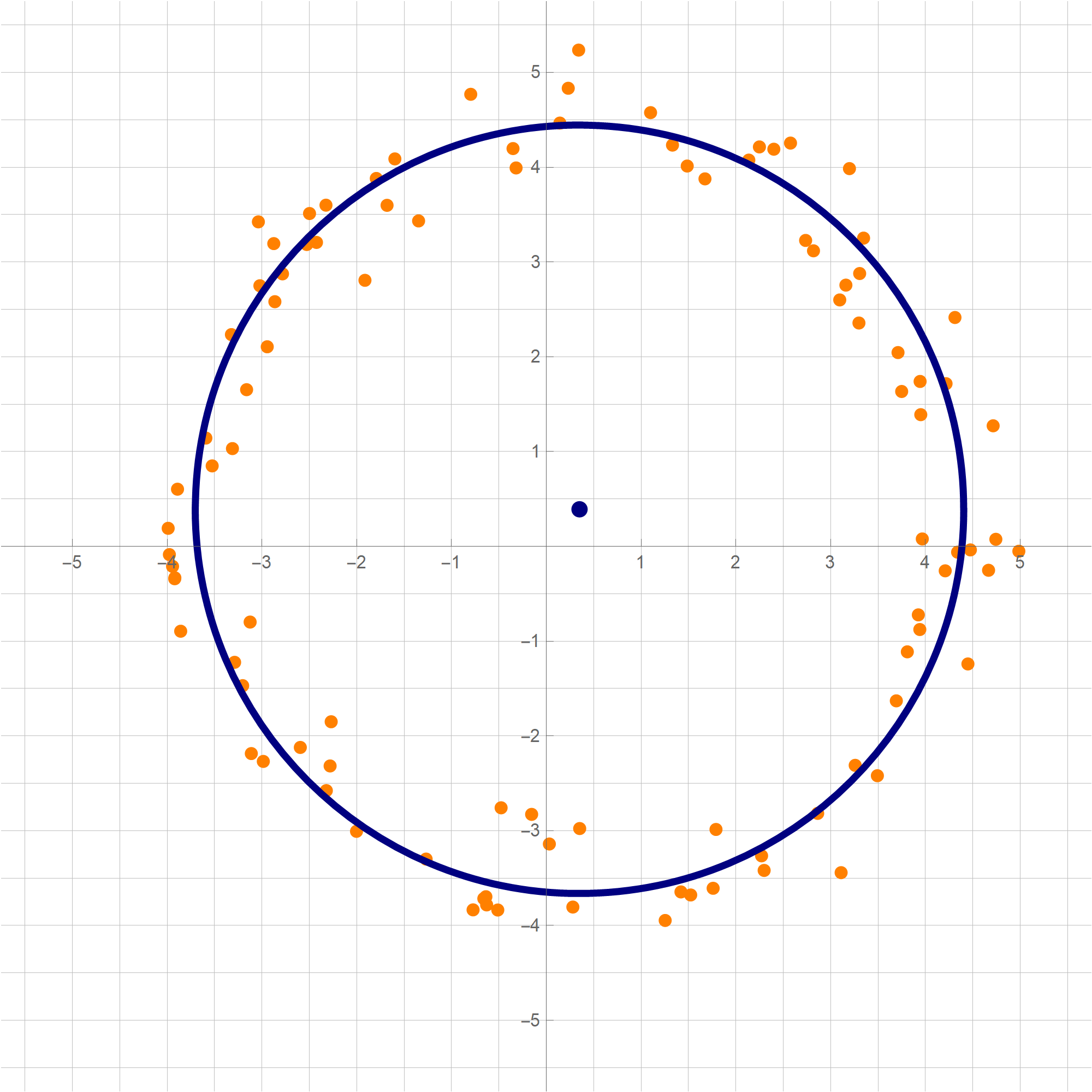

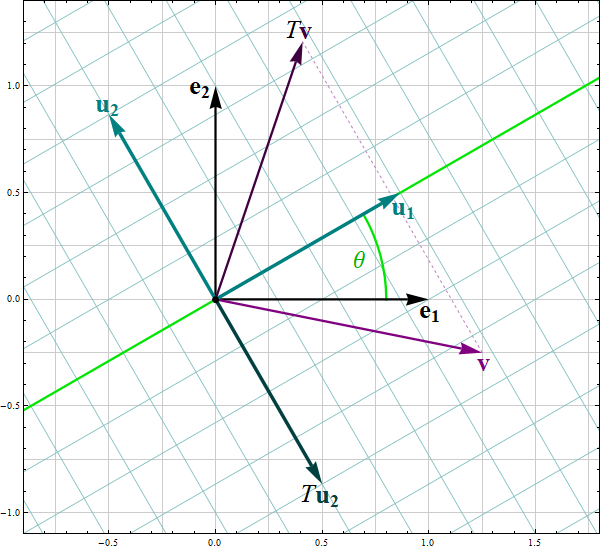

In this case, since $A$ maps vectors in $\mathbb{R}^2$ to vectors in $\mathbb{R}^3$, we can represent the geometric significance of the singular values and the singular vectors in a picture.

The narrative is missing for the above picture. Also, I want to make the picture of the action of $A^\top$. I already made the left-hand side picture:

The right-hand side picture will follow soon.

- Today I post explorations of three quadratic forms on \(\mathbb{R}^3\).

Example 3.

In this item we consider the quadratic form

\[

Q(x_1, x_2, x_3) = x_1^2 - 4 x_1 x_2 +4 x_2 x_3 - x_3^2 \quad \text{where} \quad x_1, x_2, x_3 \in \mathbb{R}.

\]

- T1 We have \[ Q(x_1, x_2, x_3) = \bigl[ x_1 \ \ x_2 \ \ x_3 \bigr] \left[\! \begin{array}{ccc} 1 & -2 & 0 \\ -2 & 0 & 2 \\ 0 & 2 & -1 \end{array} \!\right] \left[\! \begin{array}{c} x_1 \\ x_2 \\ x_3 \end{array} \!\right] = x_1^2 - 4 x_1 x_2 +4 x_2 x_3 - x_3^2 \quad \text{where} \quad x_1, x_2, x_3 \in \mathbb{R}. \]

- T2

Clearly the quadratic form $Q$ is not a zero form. To classify $Q$ as positive semidefinite, negative semidefinite, or indefinite, we orthogonally diagonalize the matrix of this quadratic form:

\[

\left[\!

\begin{array}{ccc}

1 & -2 & 0 \\

-2 & 0 & 2 \\

0 & 2 & -1

\end{array}

\!\right] = \left[\!\begin{array}{ccc}

-\frac{1}{3} & -\frac{2}{3} & \frac{2}{3} \\

-\frac{2}{3} & \frac{2}{3} & \frac{1}{3} \\

\frac{2}{3} & \frac{1}{3} & \frac{2}{3} \\

\end{array}

\!\right] \left[\!

\begin{array}{ccc}

-3 & 0 & 0 \\

0 & 3 & 0 \\

0 & 0 & 0

\end{array}

\!\right] \left[\!\begin{array}{ccc}

-\frac{1}{3} & -\frac{2}{3} & \frac{2}{3} \\

-\frac{2}{3} & \frac{2}{3} & \frac{1}{3} \\

\frac{2}{3} & \frac{1}{3} & \frac{2}{3} \\

\end{array}

\!\right]^\top

\]
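The diagonalization displayed above can be confirmed exactly with rational arithmetic. Here is a sketch using Python's standard `fractions` module; the names `P`, `D`, `reconstructed` and `PtP` are my own labels for the factors in the display.

```python
from fractions import Fraction

# Matrix of the quadratic form Q in Example 3
M = [[1, -2, 0],
     [-2, 0, 2],
     [0, 2, -1]]

# Orthogonal factor and diagonal factor from the display above
P = [[Fraction(n, 3) for n in row]
     for row in [[-1, -2, 2], [-2, 2, 1], [2, 1, 2]]]
D = [[-3, 0, 0],
     [0, 3, 0],
     [0, 0, 0]]

def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]

def transpose(X):
    return [list(row) for row in zip(*X)]

reconstructed = matmul(matmul(P, D), transpose(P))  # P D P^T
PtP = matmul(transpose(P), P)                       # should be the identity
identity3 = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
print(reconstructed == M, PtP == identity3)
```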

Let us introduce two bases

\[

\mathcal{B} = \left\{ \left[\!

\begin{array}{c}

-\frac{1}{3} \\ -\frac{2}{3} \\ \frac{2}{3}

\end{array}

\!\right], \left[\!

\begin{array}{c}

-\frac{2}{3} \\ \frac{2}{3} \\ \frac{1}{3}

\end{array}

\!\right], \left[\!

\begin{array}{c}

\frac{2}{3} \\ \frac{1}{3} \\ \frac{2}{3}

\end{array}

\!\right] \right\} \qquad \text{and} \qquad

\mathcal{E} = \left\{ \left[\!

\begin{array}{c} 1 \\ 0 \\ 0 \end{array}

\!\right], \left[\!

\begin{array}{c}

0 \\ 1 \\ 0

\end{array}

\!\right], \left[\!

\begin{array}{c}

0 \\ 0 \\ 1

\end{array}

\!\right] \right\}.

\]

The above orthogonal diagonalization suggests a very useful change of coordinates:

\[

\mathbf{y} = \left[\!\begin{array}{ccc}

-\frac{1}{3} & -\frac{2}{3} & \frac{2}{3} \\

-\frac{2}{3} & \frac{2}{3} & \frac{1}{3} \\

\frac{2}{3} & \frac{1}{3} & \frac{2}{3} \\

\end{array}

\!\right]^\top \mathbf{x} = \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}, \qquad

\mathbf{x} = \left[\!\begin{array}{ccc}

-\frac{1}{3} & -\frac{2}{3} & \frac{2}{3} \\

-\frac{2}{3} & \frac{2}{3} & \frac{1}{3} \\

\frac{2}{3} & \frac{1}{3} & \frac{2}{3} \\

\end{array}

\!\right] \mathbf{y} = \underset{\mathcal{E}\leftarrow\mathcal{B}}{P} \mathbf{y}.

\]

The coordinates $\mathbf{y}$ are the coordinates relative to the basis $\mathcal{B}$.

With the change of coordinates \[ \mathbf{y} = \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}, \qquad \mathbf{x} = \underset{\mathcal{E}\leftarrow\mathcal{B}}{P} \mathbf{y}, \] the quadratic form $Q$ simplifies as follows \[ x_1^2 - 4 x_1 x_2 +4 x_2 x_3 - x_3^2 = - 3 y_1^2 + 3 y_2^2. \] Clearly the form $- 3 y_1^2 + 3 y_2^2$ is an indefinite form taking the value $-3$ at $(y_1,y_2,y_3) = (1,0,0)$ and the value $3$ at $(y_1,y_2,y_3) = (0,1,0).$

- T4

The above introduced change of coordinates yields

\[

\bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = -1 \bigr\} = \bigl\{ \mathbf{y} \in \mathbb{R}^3 \, : \, - 3 y_1^2 + 3 y_2^2 = -1 \bigr\},

\]

\[

\bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = 0 \bigr\} = \bigl\{ \mathbf{y} \in \mathbb{R}^3 \, : \, - 3 y_1^2 + 3 y_2^2 = 0 \bigr\},

\]

and

\[

\bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = 1 \bigr\} = \bigl\{ \mathbf{y} \in \mathbb{R}^3 \, : \, - 3 y_1^2 + 3 y_2^2 = 1 \bigr\}.

\]

The set

\[

\bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = 0 \bigr\} = \bigl\{ \mathbf{y} \in \mathbb{R}^3 \, : \, - 3 y_1^2 + 3 y_2^2 = 0 \bigr\}

\]

is a union of two planes. These planes are represented by the following two spans:

\[

\operatorname{Span} \left\{

\left[\!

\begin{array}{c}

1 \\ 1 \\ 0

\end{array}

\!\right],

\left[\!

\begin{array}{c}

0 \\ 0 \\ 1

\end{array}

\!\right]

\right\} \qquad \text{and} \qquad \operatorname{Span} \left\{

\left[\!

\begin{array}{c}

1 \\ -1 \\ 0

\end{array}

\!\right],

\left[\!

\begin{array}{c}

0 \\ 0 \\ 1

\end{array}

\!\right]

\right\}

\]

in the coordinates relative to the basis $\mathcal{B}$. To express these planes in the original coordinates, relative to the basis $\mathcal{E}$, we apply the change of coordinates matrix $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$ to the spanning vectors (and rescale them to clear the fractions):

\[

\operatorname{Span} \left\{

\left[\!

\begin{array}{c}

-1 \\ 0 \\ 1

\end{array}

\!\right],

\left[\!

\begin{array}{c}

2 \\ 1 \\ 2

\end{array}

\!\right]

\right\} \qquad \text{and} \qquad \operatorname{Span} \left\{

\left[\!

\begin{array}{c}

1 \\ -4 \\ 1

\end{array}

\!\right],

\left[\!

\begin{array}{c}

2 \\ 1 \\ 2

\end{array}

\!\right]

\right\}

\]
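That $Q$ vanishes on both planes can be checked directly in the original coordinates. This is a small sketch in plain Python with integer arithmetic; the helper `combo` and the grid of coefficients $s, t \in \{-3,\ldots,3\}$ are illustrative choices.

```python
# Check that Q = 0 on both planes found above, using integer points only.

def Q(x1, x2, x3):
    return x1 * x1 - 4 * x1 * x2 + 4 * x2 * x3 - x3 * x3

def combo(s, a, t, b):
    """The linear combination s*a + t*b of two vectors in R^3."""
    return [s * ai + t * bi for ai, bi in zip(a, b)]

plane_1 = ([-1, 0, 1], [2, 1, 2])   # spanning vectors of the first plane
plane_2 = ([1, -4, 1], [2, 1, 2])   # spanning vectors of the second plane

coefficients = range(-3, 4)
vanishes_on_plane_1 = all(Q(*combo(s, plane_1[0], t, plane_1[1])) == 0
                          for s in coefficients for t in coefficients)
vanishes_on_plane_2 = all(Q(*combo(s, plane_2[0], t, plane_2[1])) == 0
                          for s in coefficients for t in coefficients)
print(vanishes_on_plane_1, vanishes_on_plane_2)
```

A finite grid is of course not a proof, but it is a quick sanity check of the spanning vectors.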

The set

\[

\bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = 1 \bigr\} = \bigl\{ \mathbf{y} \in \mathbb{R}^3 \, : \, - 3 y_1^2 + 3 y_2^2 = 1 \bigr\}

\]

is a hyperbolic cylinder. The equation $- 3 y_1^2 + 3 y_2^2 = 1$ represents a hyperbola in the plane spanned by the vectors

\[

\left[\!

\begin{array}{c}

-\frac{1}{3} \\ -\frac{2}{3} \\ \frac{2}{3}

\end{array}

\!\right] \quad \text{and} \quad \left[\!

\begin{array}{c}

-\frac{2}{3} \\ \frac{2}{3} \\ \frac{1}{3}

\end{array}

\!\right] \quad \text{coordinates relative to} \quad \mathcal{E}.

\]

The cylinder is formed by the parallel lines that go through the points on the hyperbola and are orthogonal to the plane spanned by the above two vectors.

The set

\[

\bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = -1 \bigr\} = \bigl\{ \mathbf{y} \in \mathbb{R}^3 \, : \, - 3 y_1^2 + 3 y_2^2 = -1 \bigr\}

\]

is a hyperbolic cylinder. The equation $- 3 y_1^2 + 3 y_2^2 = -1$ represents a hyperbola in the plane spanned by the vectors

\[

\left[\!

\begin{array}{c}

-\frac{1}{3} \\ -\frac{2}{3} \\ \frac{2}{3}

\end{array}

\!\right] \quad \text{and} \quad \left[\!

\begin{array}{c}

-\frac{2}{3} \\ \frac{2}{3} \\ \frac{1}{3}

\end{array}

\!\right] \quad \text{coordinates relative to} \quad \mathcal{E}.

\]

The cylinder is formed by the parallel lines that go through the points on the hyperbola and are orthogonal to the plane spanned by the above two vectors.

- T3 Since the change of coordinates matrices $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$ and $\displaystyle \underset{\mathcal{B}\leftarrow\mathcal{E}}{P}$ are orthogonal we have \[ \| \mathbf{y} \|^2 = \mathbf{y}^\top \mathbf{y} = \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}\right)^\top \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}\right) = \mathbf{x}^\top \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P}\right)^\top \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x} = \mathbf{x}^\top \mathbf{x} = \|\mathbf{x} \|^2. \] Therefore \begin{align*} S & = \Bigl\{ Q(\mathbf{x}) \, : \, \mathbf{x} \in \mathbb{R}^3, \ \| \mathbf{x} \| = 1 \Bigr\} \\ & = \Bigl\{ -3 y_1^2 + 3 y_2^2 \, : \, y_1^2 + y_2^2 + y_3^2 = 1, \ y_1, y_2, y_3 \in\mathbb{R} \Bigr\}. \end{align*} Assume that $y_1^2 + y_2^2 + y_3^2 = 1$. Then \[ -3 = -3 y_1^2 - 3 y_2^2 - 3 y_3^2 \leq -3 y_1^2 + 3 y_2^2 \leq 3 y_1^2 + 3 y_2^2 + 3 y_3^2 = 3. \] Therefore, we have that $\min S = -3$ and $\max S = 3$. On the unit sphere, the form $-3 y_1^2 + 3 y_2^2$ takes the value $-3$ when $y_1 = 1, y_2 = 0, y_3 = 0$, or $y_1 = -1, y_2 =0, y_3 = 0$, and the value $3$ when $y_1 = 0, y_2 = 1, y_3 = 0$ or $y_1 = 0, y_2 =-1, y_3 = 0$. Using the change of coordinates matrix $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$ we conclude that \[ \bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, \| \mathbf{x} \| = 1, \ Q(\mathbf{x}) = -3 \bigr\} = \left\{ \left[\! \begin{array}{c} -\frac{1}{3} \\ -\frac{2}{3} \\ \frac{2}{3} \end{array} \!\right], -\left[\! \begin{array}{c} -\frac{1}{3} \\ -\frac{2}{3} \\ \frac{2}{3} \end{array} \!\right] \right\} \] and \[ \bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, \| \mathbf{x} \| = 1, \ Q(\mathbf{x}) = 3 \bigr\} = \left\{ \left[\! \begin{array}{c} -\frac{2}{3} \\ \frac{2}{3} \\ \frac{1}{3} \end{array} \!\right], - \left[\! \begin{array}{c} -\frac{2}{3} \\ \frac{2}{3} \\ \frac{1}{3} \end{array} \!\right] \right\}. \]
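The extreme values on the unit sphere can be illustrated with a short sketch in plain Python. The values at the two unit eigenvectors are computed exactly with `fractions`; the sampling of unit vectors (normalized integer vectors with entries between $-3$ and $3$) is just an illustrative choice of mine and proves nothing by itself.

```python
from fractions import Fraction
import itertools
import math

def Q(x1, x2, x3):
    return x1 * x1 - 4 * x1 * x2 + 4 * x2 * x3 - x3 * x3

# Unit eigenvectors for the eigenvalues -3 and 3, in exact arithmetic
u1 = [Fraction(n, 3) for n in (-1, -2, 2)]
u2 = [Fraction(n, 3) for n in (-2, 2, 1)]
value_at_u1 = Q(*u1)
value_at_u2 = Q(*u2)

# Evaluate Q at many unit vectors: normalize each nonzero integer vector
# with entries in -3..3 and record the value of Q there.
samples = []
for v in itertools.product(range(-3, 4), repeat=3):
    if any(v):
        norm = math.sqrt(sum(c * c for c in v))
        samples.append(Q(*(c / norm for c in v)))

lowest, highest = min(samples), max(samples)
print(value_at_u1, value_at_u2, lowest, highest)
```

The sampled values stay between $-3$ and $3$ up to rounding, in agreement with the inequality above.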

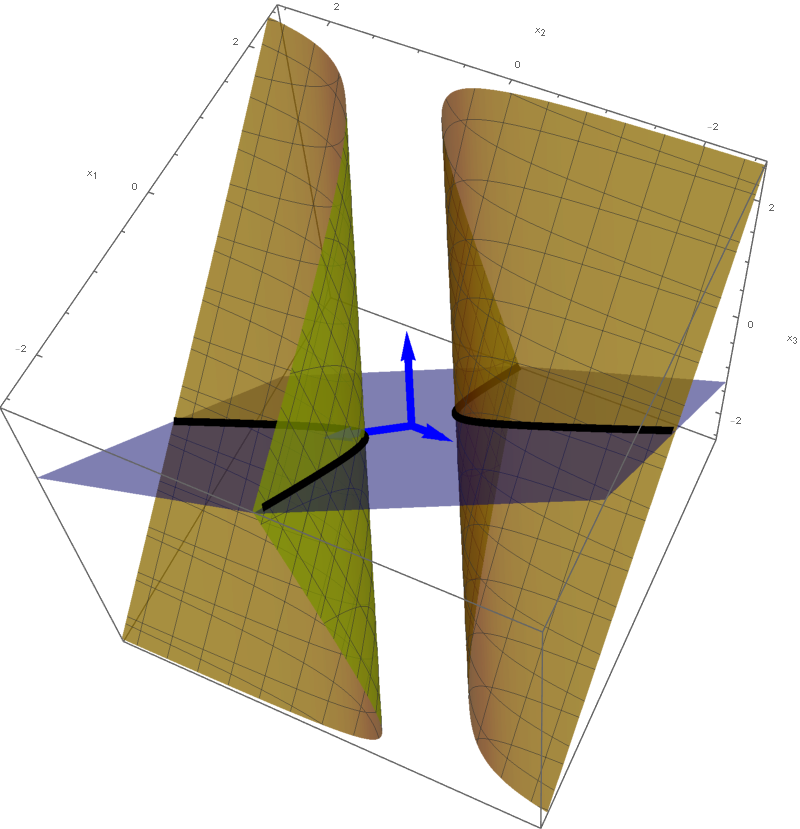

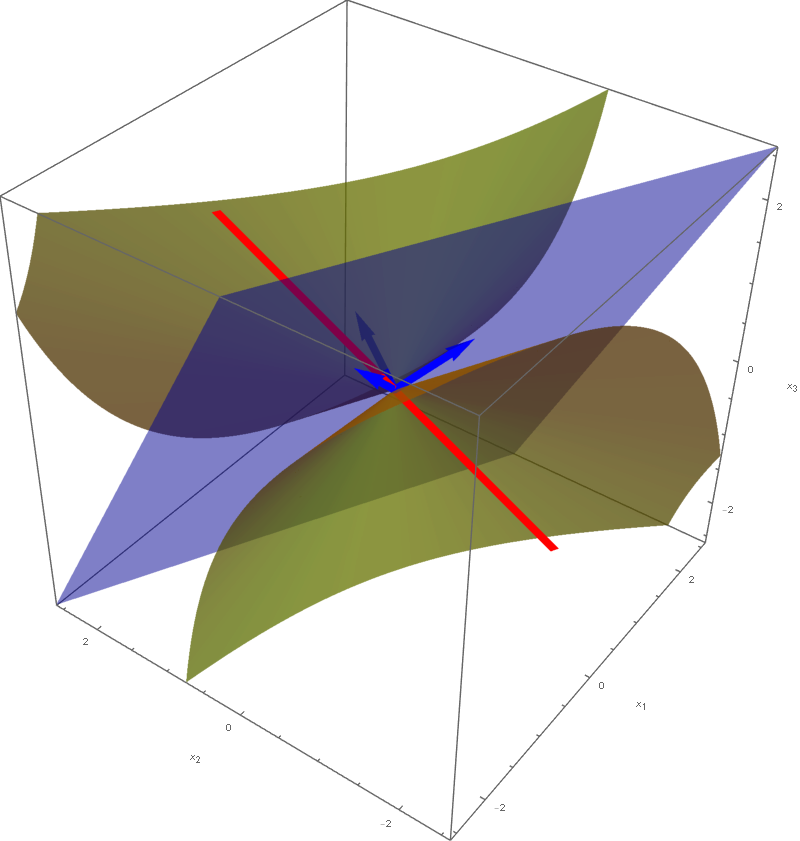

Example 4.

In this item we consider the quadratic form

\[

Q(x_1, x_2, x_3) = 4 x_1 x_2 +2 x_1 x_3 + 3 x_2^2+4 x_2 x_3 \quad \text{where} \quad x_1, x_2, x_3 \in \mathbb{R}.

\]

- T1 We have \begin{align*} Q(x_1, x_2, x_3) = \bigl[ x_1 \ \ x_2 \ \ x_3 \bigr] \left[\! \begin{array}{ccc} 0 & 2 & 1 \\ 2 & 3 & 2 \\ 1 & 2 & 0 \\ \end{array} \!\right] \left[\! \begin{array}{c} x_1 \\ x_2 \\ x_3 \end{array} \!\right] & = 4 x_1 x_2 +2 x_1 x_3 + 3 x_2^2+4 x_2 x_3 \\ & \qquad \text{where} \quad x_1, x_2, x_3 \in \mathbb{R}. \end{align*} The symmetric matrix $\displaystyle A = \left[\! \begin{array}{ccc} 0 & 2 & 1 \\ 2 & 3 & 2 \\ 1 & 2 & 0 \\ \end{array}\!\right]$ is said to be associated with the quadratic form $Q$. In the next item we will orthogonally diagonalize the matrix $A$. Finding an orthonormal basis $\{\mathbf{u}_1, \mathbf{u}_2, \mathbf{u}_3 \}$ of \(\mathbb{R}^3\) that consists of unit eigenvectors of \(A\) and the corresponding eigenvalues \(\lambda_1, \lambda_2, \lambda_3\) will be a significant help in understanding the quadratic form \(Q\).
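The identity $Q(\mathbf{x}) = \mathbf{x}^\top A \mathbf{x}$ in T1 can be spot-checked on integer points. This is a minimal sketch in plain Python; the function names `Q_poly` and `Q_matrix` and the grid of test points are my own choices.

```python
import itertools

# Symmetric matrix associated with the quadratic form Q of Example 4
A = [[0, 2, 1],
     [2, 3, 2],
     [1, 2, 0]]

def Q_poly(x1, x2, x3):
    """Q written as a polynomial, as in the definition of Example 4."""
    return 4 * x1 * x2 + 2 * x1 * x3 + 3 * x2 * x2 + 4 * x2 * x3

def Q_matrix(x):
    """Q written as x^T A x, expanded as a double sum over the entries of A."""
    return sum(x[i] * A[i][j] * x[j] for i in range(3) for j in range(3))

points = list(itertools.product(range(-2, 3), repeat=3))
agree = all(Q_poly(*p) == Q_matrix(p) for p in points)
is_symmetric = all(A[i][j] == A[j][i] for i in range(3) for j in range(3))
print(agree, is_symmetric)
```

Each off-diagonal entry of $A$ is half of the corresponding cross-term coefficient, which is why $4x_1x_2$ appears as the two entries $2$ in positions $(1,2)$ and $(2,1)$.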

- T2 Clearly the quadratic form $Q$ is not a zero form. To classify $Q$ as positive semidefinite, negative semidefinite, or indefinite, we orthogonally diagonalize the matrix $A$ that is associated with this quadratic form: \[ \left[\! \begin{array}{ccc} 0 & 2 & 1 \\ 2 & 3 & 2 \\ 1 & 2 & 0 \\ \end{array} \!\right] = \left[\!\begin{array}{ccc} -\frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{6}} \\ 0 & -\frac{1}{\sqrt{3}} & \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{6}} \\ \end{array} \!\right] \left[\! \begin{array}{ccc} -1 & 0 & 0 \\ 0 & -1 & 0 \\ 0 & 0 & 5 \end{array} \!\right] \left[\!\begin{array}{ccc} -\frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{6}} \\ 0 & -\frac{1}{\sqrt{3}} & \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{6}} \\ \end{array} \!\right]^\top. \] Here \[ \mathbf{u}_1 = \left[\!\begin{array}{c} -\frac{1}{\sqrt{2}} \\ 0 \\ \frac{1}{\sqrt{2}}\end{array}\!\right], \quad \mathbf{u}_2 = \left[\!\begin{array}{c}\frac{1}{\sqrt{3}} \\ -\frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}}\end{array}\!\right], \quad \mathbf{u}_3 = \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array}\!\right], \] are normalized eigenvectors of \(A\). The corresponding eigenvalues, respectively, are $\lambda_1 = -1$, \(\lambda_2 = -1\), and \(\lambda_3 = 5\). Let us introduce two bases of \(\mathbb{R}^3\), the basis \(\mathcal{B}= \{\mathbf{u}_1, \mathbf{u}_2, \mathbf{u}_3 \}\) which consists of the normalized eigenvectors of \(A\) and the standard basis \(\mathcal{E} = \{\mathbf{i}, \mathbf{j}, \mathbf{k}\}\). (Here I use the notation \(\mathbf{i}, \mathbf{j}, \mathbf{k}\) for the standard coordinate vectors in \(\mathbb{R}^3\). This notation is common in multivariable calculus courses.) That is, \[ \mathcal{B} = \left\{ \left[\! \begin{array}{c} -\frac{1}{\sqrt{2}} \\ 0 \\ \frac{1}{\sqrt{2}} \end{array} \!\right], \left[\!
\begin{array}{c} \frac{1}{\sqrt{3}} \\ -\frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \end{array} \!\right], \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right] \right\} \quad \text{and} \quad \mathcal{E} = \left\{ \left[\! \begin{array}{c} 1 \\ 0 \\ 0 \end{array} \!\right], \left[\! \begin{array}{c} 0 \\ 1 \\ 0 \end{array} \!\right], \left[\! \begin{array}{c} 0 \\ 0 \\ 1 \end{array} \!\right] \right\}. \] The above orthogonal diagonalization suggests the following very useful change of coordinates: \begin{align*} \mathbf{y} & = \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x} = \left[\!\begin{array}{ccc} -\frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{6}} \\ 0 & -\frac{1}{\sqrt{3}} & \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{6}} \end{array} \!\right]^\top \mathbf{x} = \left[\!\begin{array}{ccc} -\frac{1}{\sqrt{2}} & 0 & \frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{3}} & -\frac{1}{\sqrt{3}} & \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{6}} & \frac{2}{\sqrt{6}} & \frac{1}{\sqrt{6}} \\ \end{array} \!\right] \mathbf{x}, \\ \mathbf{x} & = \underset{\mathcal{E}\leftarrow\mathcal{B}}{P} \mathbf{y} = \left[\!\begin{array}{ccc} -\frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{6}} \\ 0 & -\frac{1}{\sqrt{3}} & \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{6}} \\ \end{array} \!\right] \mathbf{y} . \end{align*} The coordinates $\mathbf{y}$ are the coordinates of $\mathbf{x}$ relative to the basis $\mathcal{B}$, that is $\mathbf{y} = \bigl[\mathbf{x}\bigr]_{\mathcal{B}}.$ With the change of coordinates \[ \mathbf{y} = \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}, \qquad \mathbf{x} = \underset{\mathcal{E}\leftarrow\mathcal{B}}{P} \mathbf{y}, \] the quadratic form $Q$ simplifies as follows \begin{align*} 4 x_1 x_2 +2 x_1 x_3 + 3 x_2^2+4 x_2 x_3 & = \bigl[ x_1 \ \ x_2 \ \ x_3 \bigr] \left[\! 
\begin{array}{ccc} 0 & 2 & 1 \\ 2 & 3 & 2 \\ 1 & 2 & 0 \\ \end{array} \!\right] \left[\! \begin{array}{c} x_1 \\ x_2 \\ x_3 \end{array} \!\right]\\[5pt] \boxed{\text{orthogonal diagonalization}} & = \mathbf{x}^\top \left[\!\begin{array}{ccc} -\frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{6}} \\ 0 & -\frac{1}{\sqrt{3}} & \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{6}} \\ \end{array} \!\right] \left[\! \begin{array}{ccc} -1 & 0 & 0 \\ 0 & -1 & 0 \\ 0 & 0 & 5 \end{array} \!\right] \left[\!\begin{array}{ccc} -\frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{6}} \\ 0 & -\frac{1}{\sqrt{3}} & \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{3}} & \frac{1}{\sqrt{6}} \\ \end{array} \!\right]^\top \mathbf{x} \\[5pt] \boxed{\text{definitions of $\displaystyle \underset{\mathcal{B}\leftarrow\mathcal{E}}{P}$ and $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$}} & = \mathbf{x}^\top \underset{\mathcal{E}\leftarrow\mathcal{B}}{P} \left[\! \begin{array}{ccc} -1 & 0 & 0 \\ 0 & -1 & 0 \\ 0 & 0 & 5 \end{array} \!\right] \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x} \\[5pt] \boxed{\text{use the fact $\displaystyle \Bigl( \displaystyle \underset{\mathcal{B}\leftarrow\mathcal{E}}{P}\Bigr)^\top = \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$}} & = \mathbf{x}^\top \Bigl( \underset{\mathcal{B}\leftarrow\mathcal{E}}{P}\Bigr)^\top \left[\! \begin{array}{ccc} -1 & 0 & 0 \\ 0 & -1 & 0 \\ 0 & 0 & 5 \end{array} \!\right] \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x} \\[5pt] \boxed{\text{properties of transpose}} & = \Bigl( \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}\Bigr)^\top \left[\! \begin{array}{ccc} -1 & 0 & 0 \\ 0 & -1 & 0 \\ 0 & 0 & 5 \end{array} \!\right] \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x} \\[5pt] \boxed{\text{change of coordinates}} & = \mathbf{y}^\top \left[\! 
\begin{array}{ccc} -1 & 0 & 0 \\ 0 & -1 & 0 \\ 0 & 0 & 5 \end{array} \!\right] \mathbf{y} \\[5pt] & = \bigl[ y_1 \ \ y_2 \ \ y_3 \bigr] \left[\! \begin{array}{ccc} -1 & 0 & 0 \\ 0 & -1 & 0 \\ 0 & 0 & 5 \end{array} \!\right] \left[\! \begin{array}{c} y_1 \\ y_2 \\ y_3 \end{array} \!\right]\\[5pt] & = - y_1^2 - y_2^2 + 5 y_3^2. \end{align*} Clearly the quadratic form $- y_1^2 - y_2^2 + 5 y_3^2$ is an indefinite form taking the value $-1$ at $(y_1,y_2,y_3) = (1,0,0)$ and the value $5$ at $(y_1,y_2,y_3) = (0,0,1).$ Or, using the change of coordinates matrices, the quadratic form given in the Example takes the negative value $-2$ at $(x_1,x_2,x_3) = (-1,0,1)$ and the positive value $30$ at $(x_1,x_2,x_3) = (1,2,1).$
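The orthogonal diagonalization used in T2 can be sanity-checked with a few lines of code. The following is a minimal sketch, not part of the original argument: the helpers `matvec`, `dot` and `to_B` are hypothetical names, and the vectors and eigenvalues are copied from the text. It checks that each $\mathbf{u}_k$ is an eigenvector, that the basis is orthonormal, and that $Q(\mathbf{x}) = -y_1^2 - y_2^2 + 5y_3^2$ for a sample vector.

```python
import math

A = [[0, 2, 1], [2, 3, 2], [1, 2, 0]]
s2, s3, s6 = math.sqrt(2), math.sqrt(3), math.sqrt(6)
# Rows are u1, u2, u3, so U plays the role of the matrix P_{B<-E}.
U = [[-1/s2, 0, 1/s2],
     [1/s3, -1/s3, 1/s3],
     [1/s6, 2/s6, 1/s6]]
lams = [-1, -1, 5]

def matvec(M, v):
    return [sum(M[i][j] * v[j] for j in range(len(v))) for i in range(len(M))]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

# Each u_k satisfies A u_k = lambda_k u_k.
for u, lam in zip(U, lams):
    Au = matvec(A, u)
    assert all(math.isclose(Au[i], lam * u[i], abs_tol=1e-12) for i in range(3))

# The vectors u1, u2, u3 are orthonormal.
for i in range(3):
    for j in range(3):
        expected = 1.0 if i == j else 0.0
        assert math.isclose(dot(U[i], U[j]), expected, abs_tol=1e-12)

def to_B(x):
    """Coordinates y = [x]_B; the k-th coordinate is the dot product u_k . x."""
    return [dot(u, x) for u in U]

x = [1.0, 2.0, 1.0]
y = to_B(x)
q_x = dot(x, matvec(A, x))
q_y = -y[0]**2 - y[1]**2 + 5 * y[2]**2
assert math.isclose(q_x, q_y)
```

Because the basis is orthonormal, the coordinates relative to $\mathcal{B}$ are plain dot products, which is why `to_B` needs no matrix inversion.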

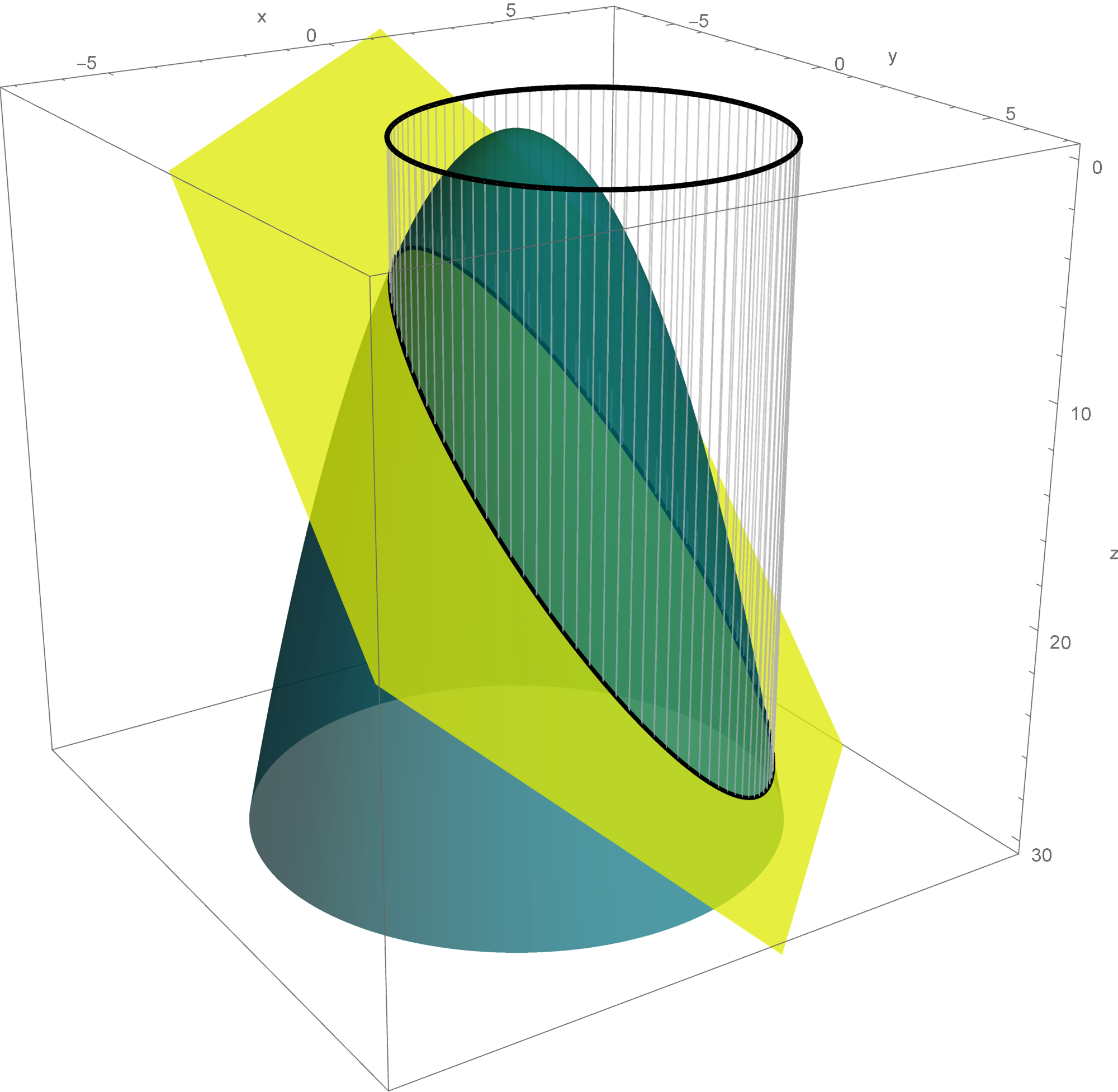

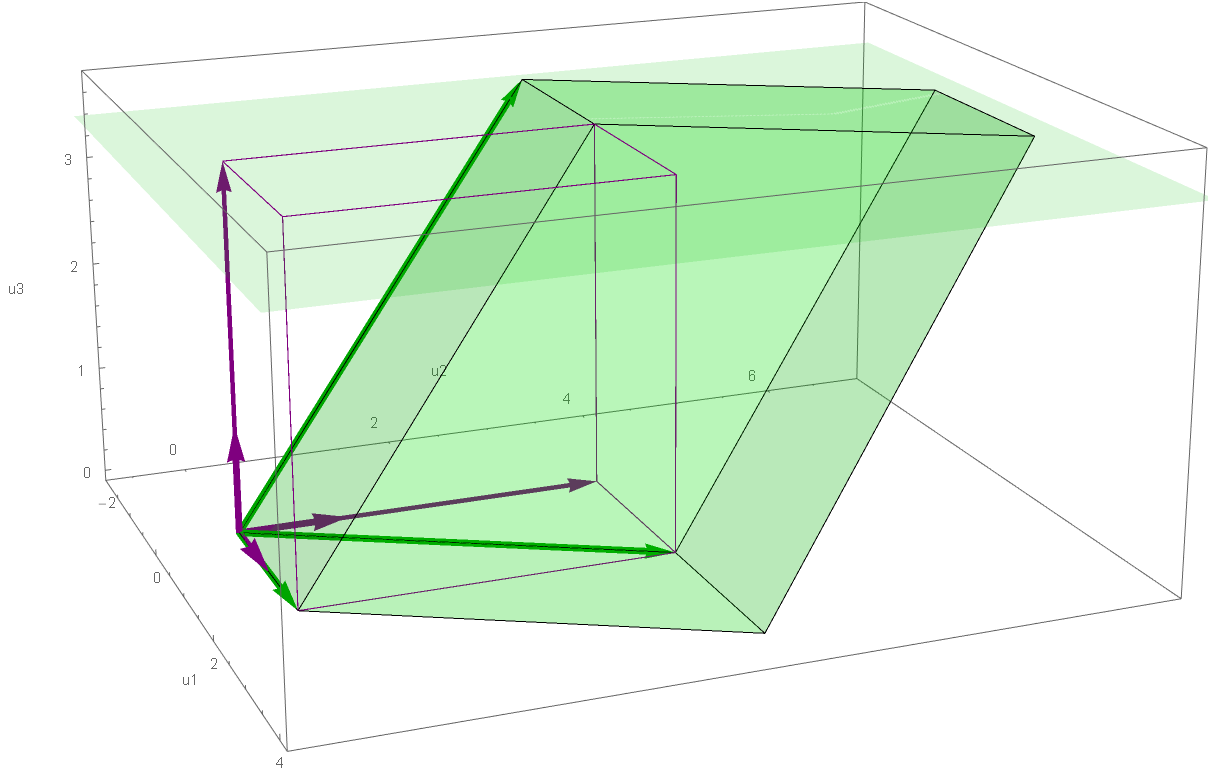

- T4(I) The above introduced change of coordinates yields the following set equality \[ \bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = c \bigr\} = \left\{ \mathbf{x} \in \mathbb{R}^3 \, : \bigl[ \mathbf{x}\bigr]_{\mathcal{B}} = \left[\! \begin{array}{c} y_1 \\ y_2 \\ y_3\end{array} \!\right] \quad \text{and} \quad \, - y_1^2 - y_2^2 + 5 y_3^2 = c \right\} \] which holds for each $c \in \mathbb{R}.$

Since the set \[ \bigl\{ \mathbf{y} \in \mathbb{R}^3 \, : \, - y_1^2 - y_2^2 + 5 y_3^2 = 0 \bigr\} \] is a rotated cone, the set equality stated at the beginning of this item with $c=0$ implies that the set \[ \bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = 0 \bigr\} \] is also a rotated cone. The intersection of this cone and the plane spanned by the vectors $\mathbf{u}_1$ and $\mathbf{u}_3$ (the plane $y_2 = 0$) is a pair of lines given by the equations \[ y_1 = \sqrt{5} y_3 \quad \text{and} \quad y_1 = -\sqrt{5} y_3. \] These lines are spanned, respectively, by the following vectors \[ \sqrt{5} \mathbf{u}_1 + \mathbf{u}_3 = \sqrt{5} \left[\! \begin{array}{c} -\frac{1}{\sqrt{2}} \\ 0 \\ \frac{1}{\sqrt{2}} \end{array} \!\right] + \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right] \] and \[ -\sqrt{5} \mathbf{u}_1 + \mathbf{u}_3 = -\sqrt{5} \left[\! \begin{array}{c} -\frac{1}{\sqrt{2}} \\ 0 \\ \frac{1}{\sqrt{2}} \end{array} \!\right] + \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right]. \] Therefore, the cone is obtained by the rotation of the line spanned by the vector \[ \sqrt{5} \mathbf{u}_1 + \mathbf{u}_3 = \sqrt{5} \left[\! \begin{array}{c} -\frac{1}{\sqrt{2}} \\ 0 \\ \frac{1}{\sqrt{2}} \end{array} \!\right] + \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right] \] about the line spanned by the vector \[ \mathbf{u}_3 = \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right]. \] The reasoning here is the same as in a multivariable calculus course when we figure out the shape of the surface given by the equation \(-x^2-y^2+5z^2 = 0\), or \[ |z| = \frac{1}{\sqrt{5}} \sqrt{x^2+y^2}. \] This cone is obtained by rotating the line \(z = \frac{1}{\sqrt{5}}x, \ y = 0\) about the \(z\)-axis. Or, in the language of vectors, by rotating the line spanned by the vector \(\sqrt{5} \mathbf{i}+\mathbf{k}\) about the line spanned by the vector \(\mathbf{k}\).

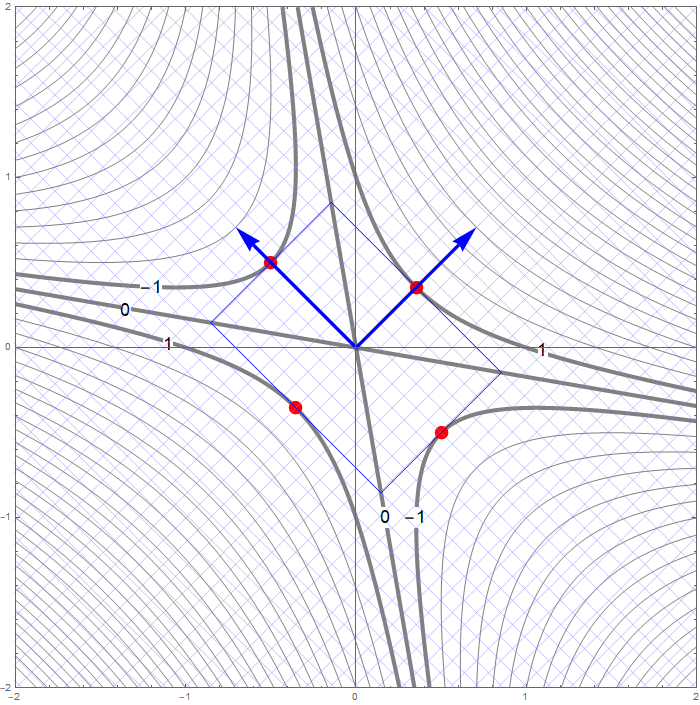

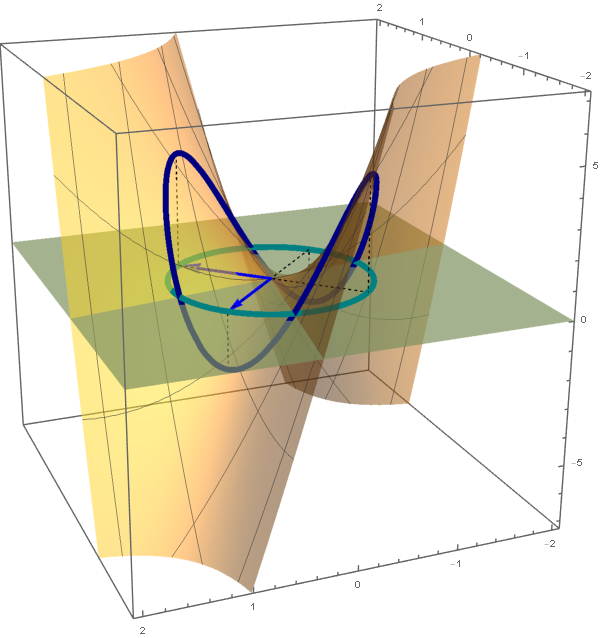

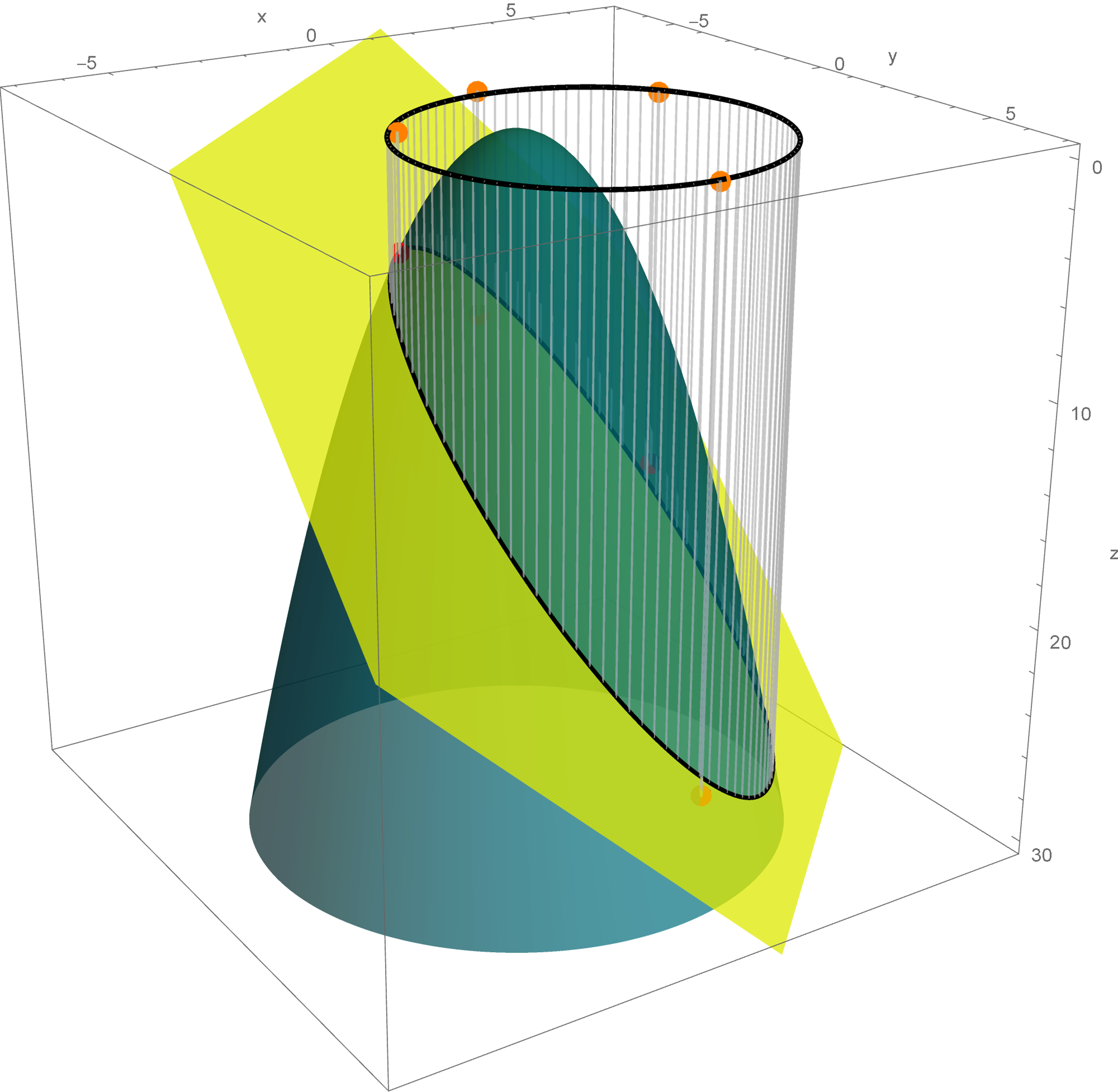

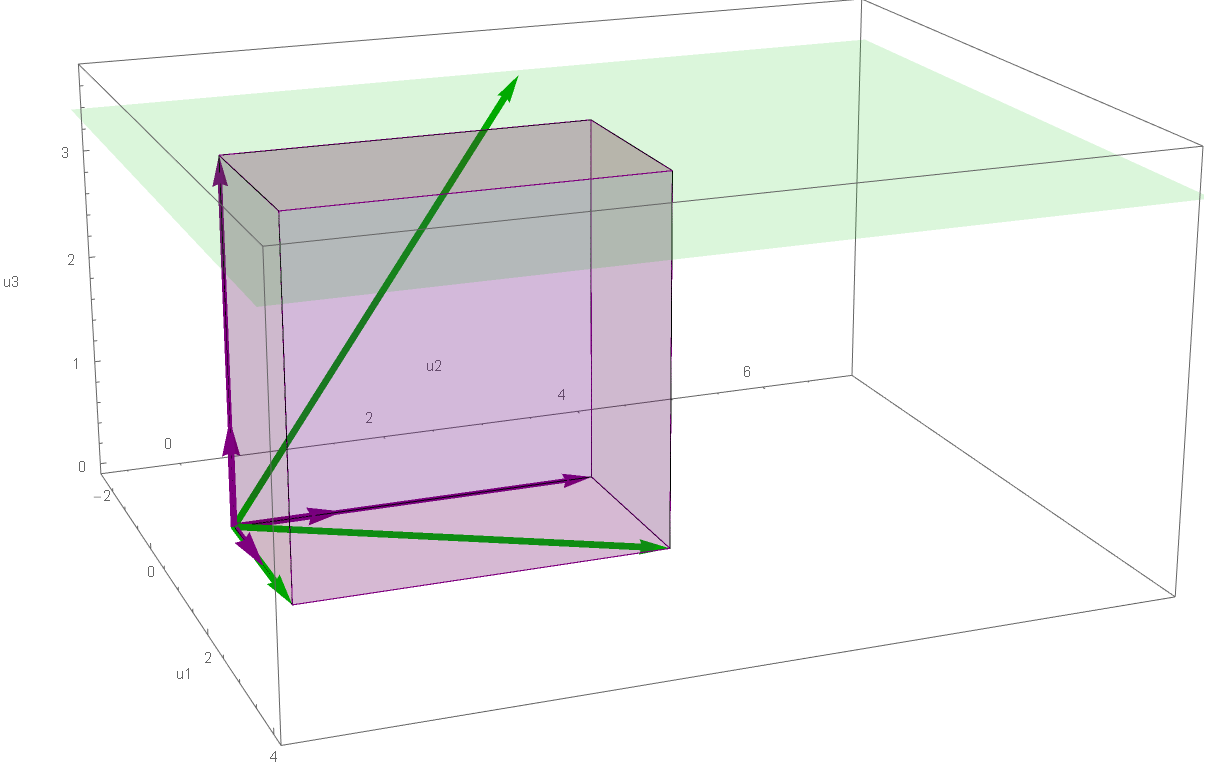

Below is a graph of the cone \[ \bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = 0 \bigr\} \] with the unit vectors \(\mathbf{u}_1, \mathbf{u}_2, \mathbf{u}_3\) in blue and the line spanned by the vector \[ \sqrt{5} \mathbf{u}_1 + \mathbf{u}_3 = \sqrt{5} \left[\! \begin{array}{c} -\frac{1}{\sqrt{2}} \\ 0 \\ \frac{1}{\sqrt{2}} \end{array} \!\right] + \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right] \] in red. It is interesting to identify the blue vector in the picture below which corresponds to \(\mathbf{u}_3\), since the rotation of the red line about this vector creates the cone. Notice that \(\mathbf{u}_3\) is the only blue vector with all three coordinates positive. Also notice that the positive directions of \(x_1\)-axis and \(x_2\)-axis point away from the observer.

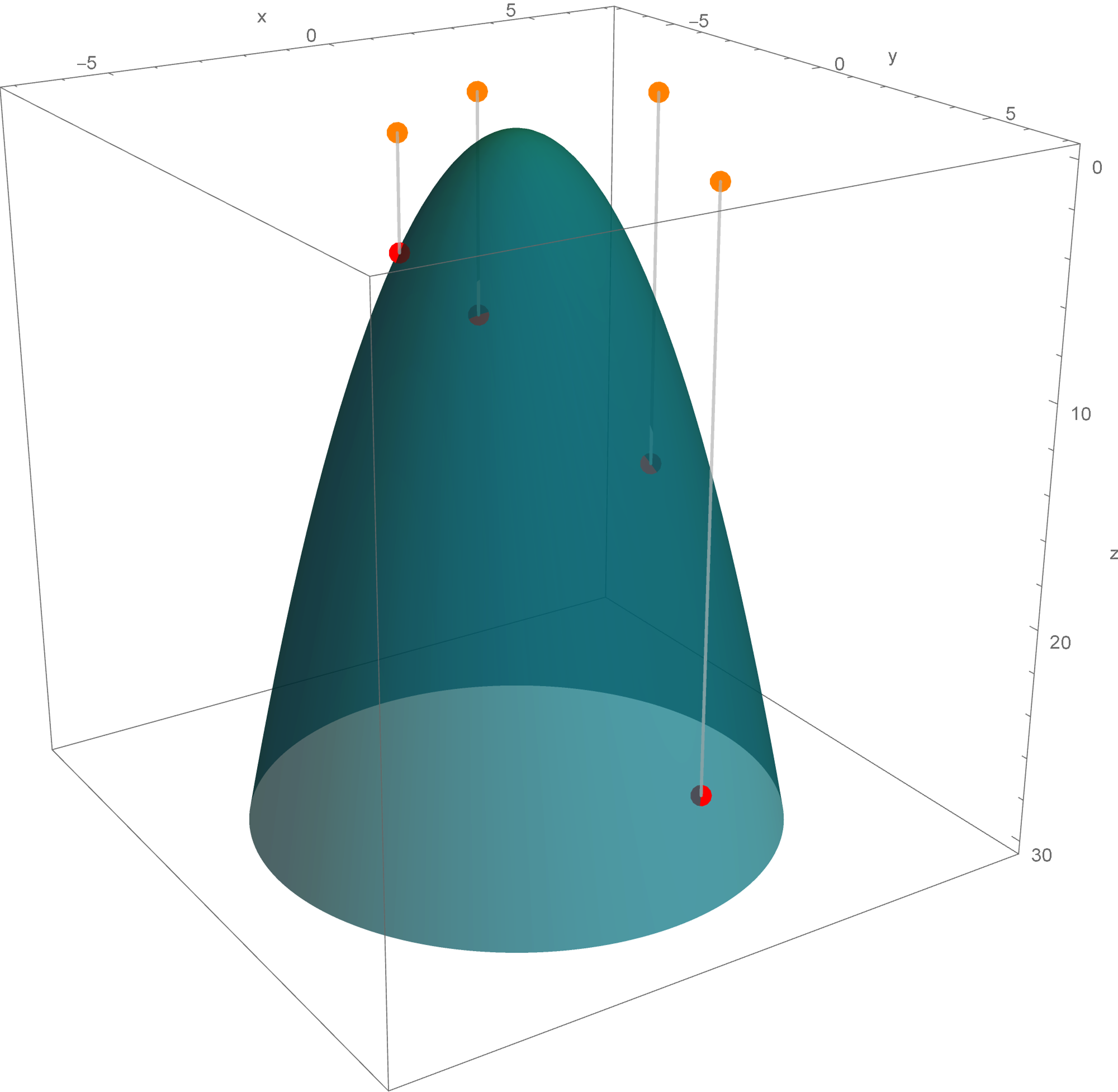
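The description of the cone as a rotated line can be tested numerically. In the sketch below (an illustration with hypothetical helper names), rotating the red line about $\mathbf{u}_3$ is modeled by replacing $\mathbf{u}_1$ with $(\cos\theta)\mathbf{u}_1 + (\sin\theta)\mathbf{u}_2$; this is an assumption about how to parametrize the rotation, justified by the fact that $\mathbf{u}_1, \mathbf{u}_2, \mathbf{u}_3$ are orthonormal. Every such point should satisfy $Q(\mathbf{x}) = 0$ up to rounding.

```python
import math

A = [[0, 2, 1], [2, 3, 2], [1, 2, 0]]
s2, s3, s6 = math.sqrt(2), math.sqrt(3), math.sqrt(6)
u1 = [-1/s2, 0.0, 1/s2]
u2 = [1/s3, -1/s3, 1/s3]
u3 = [1/s6, 2/s6, 1/s6]

def quad_form(A, x):
    n = len(x)
    return sum(x[i] * A[i][j] * x[j] for i in range(n) for j in range(n))

def cone_point(theta, t):
    """Point t * (sqrt(5) * (cos(theta) u1 + sin(theta) u2) + u3)."""
    r = math.sqrt(5)
    return [t * (r * (math.cos(theta) * a + math.sin(theta) * b) + c)
            for a, b, c in zip(u1, u2, u3)]

# Sample twelve rotation angles and three positions along each line.
values = [quad_form(A, cone_point(2 * math.pi * k / 12, t))
          for k in range(12) for t in (-2.0, 0.5, 3.0)]
assert all(abs(v) < 1e-9 for v in values)
```

For $\theta = 0$ and $t = 1$ the function returns the vector $\sqrt{5}\mathbf{u}_1 + \mathbf{u}_3$ that spans the red line.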

- T4(II) The set \[ \bigl\{ \mathbf{y} \in \mathbb{R}^3 \, : \, - y_1^2 - y_2^2 + 5 y_3^2 = 1 \bigr\} \] is a rotated two sheet hyperboloid. This two sheet hyperboloid is obtained as the hyperbola $-y_1^2 + 5 y_3^2 = 1, \ y_2 = 0$ rotates about the $y_3$-axis. Consequently, the set equality stated in item T4(I) with $c=1$ implies that the set \[ \bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = 1 \bigr\} \] is also a rotated two sheet hyperboloid. This hyperboloid is obtained as a hyperbola in the plane spanned by the vectors \[ \mathbf{u}_1 = \left[\! \begin{array}{c} -\frac{1}{\sqrt{2}} \\ 0 \\ \frac{1}{\sqrt{2}} \end{array} \!\right] \quad \text{and} \quad \mathbf{u}_3 = \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right] \] rotates about the line spanned by the vector \[ \mathbf{u}_3 = \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right]. \] In the picture below this hyperbola is colored red.

- T4(III) The set \[ \bigl\{ \mathbf{y} \in \mathbb{R}^3 \, : \, - y_1^2 - y_2^2 + 5 y_3^2 = -1 \bigr\} \] is a rotated one sheet hyperboloid. This hyperboloid is obtained as the hyperbola $-y_2^2 + 5 y_3^2 = -1, \ y_1 = 0$ rotates about the $y_3$-axis. The set equality stated in item T4(I) with $c=-1$ implies that the set \[ \bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = -1 \bigr\} \] is also a rotated one sheet hyperboloid. This hyperboloid is obtained as a hyperbola in the plane spanned by the vectors \[ \mathbf{u}_2 = \left[\! \begin{array}{c} \frac{1}{\sqrt{3}} \\ -\frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \end{array} \!\right] \quad \text{and} \quad \mathbf{u}_3 = \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right] \] rotates about the line spanned by the vector \[ \mathbf{u}_3 = \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right]. \]

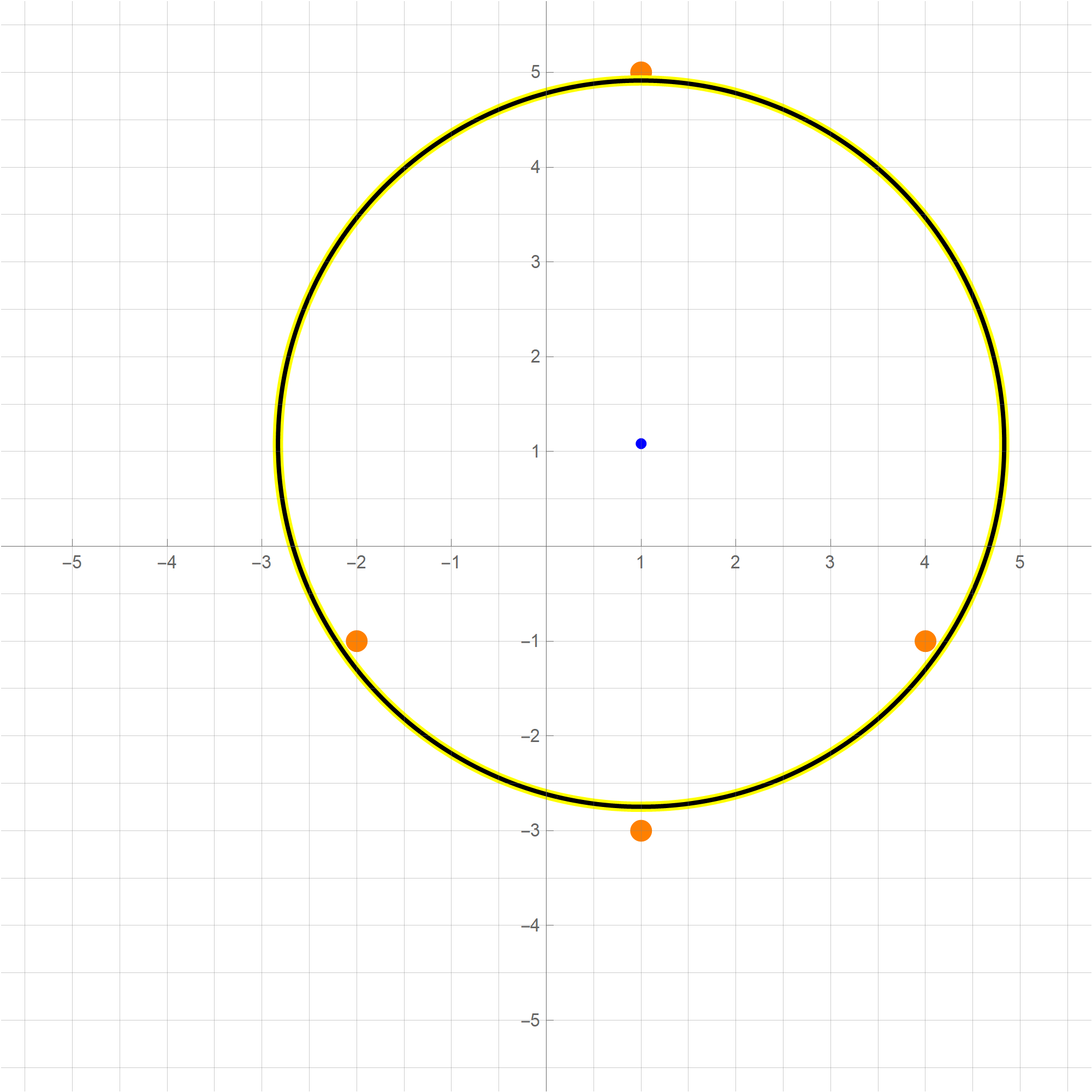
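The two hyperboloids can also be probed numerically. The sketch below is an illustration under stated assumptions: it uses the hyperbolic parametrizations $(\sinh t\cos\theta, \ \sinh t\sin\theta, \ \cosh t/\sqrt{5})$ for $c = 1$ and $(\cosh t\cos\theta, \ \cosh t\sin\theta, \ \sinh t/\sqrt{5})$ for $c = -1$ in the $\mathbf{y}$-coordinates, which are my choice and do not appear in the text. The helper `from_B` (a hypothetical name) maps $\mathbf{y}$ back to $\mathbf{x}$.

```python
import math

A = [[0, 2, 1], [2, 3, 2], [1, 2, 0]]
s2, s3, s6 = math.sqrt(2), math.sqrt(3), math.sqrt(6)
u1 = [-1/s2, 0.0, 1/s2]
u2 = [1/s3, -1/s3, 1/s3]
u3 = [1/s6, 2/s6, 1/s6]
r5 = math.sqrt(5)

def quad_form(A, x):
    n = len(x)
    return sum(x[i] * A[i][j] * x[j] for i in range(n) for j in range(n))

def from_B(y):
    """x = y1 u1 + y2 u2 + y3 u3, that is, x = P_{E<-B} y."""
    return [y[0] * a + y[1] * b + y[2] * c for a, b, c in zip(u1, u2, u3)]

def two_sheet(t, theta):
    """A point with Q = 1 on the sheet y3 > 0; negate it for the other sheet."""
    return from_B([math.sinh(t) * math.cos(theta),
                   math.sinh(t) * math.sin(theta),
                   math.cosh(t) / r5])

def one_sheet(t, theta):
    """A point with Q = -1."""
    return from_B([math.cosh(t) * math.cos(theta),
                   math.cosh(t) * math.sin(theta),
                   math.sinh(t) / r5])

params = [(t, 2 * math.pi * k / 8) for t in (-1.0, 0.0, 1.5) for k in range(8)]
assert all(math.isclose(quad_form(A, two_sheet(t, th)), 1.0, abs_tol=1e-9)
           for t, th in params)
assert all(math.isclose(quad_form(A, one_sheet(t, th)), -1.0, abs_tol=1e-9)
           for t, th in params)
```

The parameter $\theta$ is the angle of rotation about $\mathbf{u}_3$, so fixing $\theta = 0$ traces a branch of the hyperbola in the plane spanned by $\mathbf{u}_1$ and $\mathbf{u}_3$.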

- T3 Since the change of coordinate matrices $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$ and $\displaystyle \underset{\mathcal{B}\leftarrow\mathcal{E}}{P}$ are orthogonal we have \[ \| \mathbf{y} \|^2 = \mathbf{y}^\top \mathbf{y} = \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}\right)^\top \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}\right) = \mathbf{x}^\top \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P}\right)^\top \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x} = \mathbf{x}^\top \mathbf{x} = \|\mathbf{x} \|^2. \] Therefore \[ S = \bigl\{ Q(\mathbf{x}) \, : \, \mathbf{x} \in \mathbb{R}^3, \ \| \mathbf{x} \| = 1 \bigr\} = \bigl\{ - y_1^2 - y_2^2 + 5 y_3^2 \, : \, \| \mathbf{y} \| = 1, \ \mathbf{y} \in\mathbb{R}^3 \bigr\}. \] Since whenever $y_1^2 + y_2^2 + y_3^2 = 1$ we have \[ -1 = - y_1^2 - y_2^2 - y_3^2 \leq - y_1^2 - y_2^2 + 5 y_3^2 \leq 5 y_1^2 + 5 y_2^2 + 5 y_3^2 = 5, \] we deduce that $\min S = -1$ and $\max S = 5$. On the unit sphere, the form $- y_1^2 - y_2^2 + 5 y_3^2$ takes the value $-1$ when $(y_1,y_2,y_3) = (\cos \theta, \sin \theta, 0)$ for some $\theta \in [0, 2\pi)$ and the value $5$ when $(y_1,y_2,y_3) = (0,0,1)$ or $(y_1,y_2,y_3) = (0,0,-1)$. Using the change of coordinates matrix $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$ we conclude that the minimum value $-1$ is taken on a circle on the unit sphere in $\mathbb{R}^3$. That is, \begin{align*} \bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, \| \mathbf{x} \| = 1, \ Q(\mathbf{x}) = -1 \bigr\} & = \bigl\{(\cos \theta) \mathbf{u}_1 + (\sin \theta) \mathbf{u}_2 \ : \ \theta \in [0, 2\pi) \bigr\} \\ & = \left\{ (\cos \theta) \left[\! \begin{array}{c} -\frac{1}{\sqrt{2}} \\ 0 \\ \frac{1}{\sqrt{2}} \end{array} \!\right] + (\sin \theta) \left[\! \begin{array}{c} \frac{1}{\sqrt{3}} \\ -\frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \end{array} \!\right] \ : \ \theta \in [0, 2\pi) \right\}. \end{align*} The situation with the maximum value $5$ is simpler; this value is taken at two vectors: \[ \bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, \| \mathbf{x} \| = 1, \ Q(\mathbf{x}) = 5 \bigr\} = \{ \mathbf{u}_3, - \mathbf{u}_3 \} = \left\{\left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right], - \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ \frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right] \right\}. \]
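The bounds $-1 \leq Q(\mathbf{x}) \leq 5$ on the unit sphere, and the places where they are attained, can be checked experimentally. The following sketch is only a numerical illustration, not a proof; the helper `random_unit_vector` is a hypothetical name, and the random seed is fixed so that the run is reproducible.

```python
import math
import random

A = [[0, 2, 1], [2, 3, 2], [1, 2, 0]]
s2, s3, s6 = math.sqrt(2), math.sqrt(3), math.sqrt(6)
u1 = [-1/s2, 0.0, 1/s2]
u2 = [1/s3, -1/s3, 1/s3]
u3 = [1/s6, 2/s6, 1/s6]

def quad_form(A, x):
    n = len(x)
    return sum(x[i] * A[i][j] * x[j] for i in range(n) for j in range(n))

def random_unit_vector():
    """Normalize a Gaussian sample to get a point on the unit sphere."""
    v = [random.gauss(0.0, 1.0) for _ in range(3)]
    norm = math.sqrt(sum(c * c for c in v))
    return [c / norm for c in v]

random.seed(0)
values = [quad_form(A, random_unit_vector()) for _ in range(2000)]
# Every sampled value lies between the smallest and the largest eigenvalue.
assert all(-1 - 1e-9 <= v <= 5 + 1e-9 for v in values)

# The minimum -1 is attained along the circle (cos t) u1 + (sin t) u2.
for k in range(8):
    t = 2 * math.pi * k / 8
    x = [math.cos(t) * a + math.sin(t) * b for a, b in zip(u1, u2)]
    assert math.isclose(quad_form(A, x), -1.0, abs_tol=1e-12)

# The maximum 5 is attained at u3 and at -u3.
assert math.isclose(quad_form(A, u3), 5.0)
assert math.isclose(quad_form(A, [-c for c in u3]), 5.0)
```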

-

Example 5.

In this item we consider the quadratic form \begin{align*} Q(x_1, x_2, x_3) & = 2 x_1^2+2 x_1 x_2 +2 x_1 x_3 +2 x_2^2 + 2 x_2 x_3 + 2 x_3^2 \\ & \qquad \qquad \text{where} \quad x_1, x_2, x_3 \in \mathbb{R}. \end{align*}

- T1 We have \begin{align*} Q(x_1, x_2, x_3) & = \bigl[ x_1 \ \ x_2 \ \ x_3 \bigr] \left[\! \begin{array}{ccc} 2 & 1 & 1 \\ 1 & 2 & 1 \\ 1 & 1 & 2 \end{array} \!\right] \left[\! \begin{array}{c} x_1 \\ x_2 \\ x_3 \end{array} \!\right] \\ & = 2 x_1^2+2 x_1 x_2 +2 x_1 x_3 +2 x_2^2 + 2 x_2 x_3 + 2 x_3^2 \\ & \qquad \text{where} \quad x_1, x_2, x_3 \in \mathbb{R}. \end{align*}

- T2 Clearly the quadratic form $Q$ is not a zero form. To classify $Q$ as positive semidefinite, negative semidefinite, indefinite we orthogonally diagonalize the matrix of this quadratic form: \[ \left[\! \begin{array}{ccc} 2 & 1 & 1 \\ 1 & 2 & 1 \\ 1 & 1 & 2 \end{array} \!\right] = \left[\!\begin{array}{ccc} \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ 0 & -\frac{2}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ -\frac{1}{\sqrt{2}} & \frac{1}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ \end{array} \!\right] \left[\! \begin{array}{ccc} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 4 \end{array} \!\right] \left[\!\begin{array}{ccc} \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ 0 & -\frac{2}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ -\frac{1}{\sqrt{2}} & \frac{1}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ \end{array} \!\right]^\top \] Let us introduce two bases \[ \mathcal{B} = \left\{ \left[\! \begin{array}{c} \frac{1}{\sqrt{2}} \\ 0 \\ -\frac{1}{\sqrt{2}} \end{array} \!\right], \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ -\frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right], \left[\! \begin{array}{c} \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \end{array} \!\right] \right\} \qquad \text{and} \qquad \mathcal{E} = \left\{ \left[\! \begin{array}{c} 1 \\ 0 \\ 0 \end{array} \!\right], \left[\! \begin{array}{c} 0 \\ 1 \\ 0 \end{array} \!\right], \left[\! \begin{array}{c} 0 \\ 0 \\ 1 \end{array} \!\right] \right\}. 
\] The above orthogonal diagonalization suggests a very useful change of coordinates: \[ \mathbf{y} = \left[\!\begin{array}{ccc} \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ 0 & -\frac{2}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ -\frac{1}{\sqrt{2}} & \frac{1}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ \end{array} \!\right]^\top \mathbf{x} = \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}, \qquad \mathbf{x} = \left[\!\begin{array}{ccc} \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ 0 & -\frac{2}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ -\frac{1}{\sqrt{2}} & \frac{1}{\sqrt{6}} & \frac{1}{\sqrt{3}} \\ \end{array} \!\right] \mathbf{y} = \underset{\mathcal{E}\leftarrow\mathcal{B}}{P} \mathbf{y}. \] The vector $\mathbf{y}$ is the coordinate vector of $\mathbf{x}$ relative to the basis $\mathcal{B}$, that is $\mathbf{y} = \bigl[\mathbf{x}\bigr]_{\mathcal{B}}.$ With the change of coordinates \[ \mathbf{y} = \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}, \qquad \mathbf{x} = \underset{\mathcal{E}\leftarrow\mathcal{B}}{P} \mathbf{y}, \] the quadratic form $Q$ simplifies as follows \[ 2 x_1^2+2 x_1 x_2 +2 x_1 x_3 +2 x_2^2 + 2 x_2 x_3 + 2 x_3^2 = y_1^2 + y_2^2 + 4 y_3^2. \] The quadratic form $y_1^2 + y_2^2 + 4 y_3^2$ is positive definite since $y_1^2 + y_2^2 + 4 y_3^2 \geq 0$ for all $y_1, y_2, y_3 \in \mathbb{R}$ and $y_1^2 + y_2^2 + 4 y_3^2 = 0$ implies $(y_1,y_2,y_3) = (0,0,0).$ Therefore the given quadratic form $Q(\mathbf{x})$ is also positive definite.

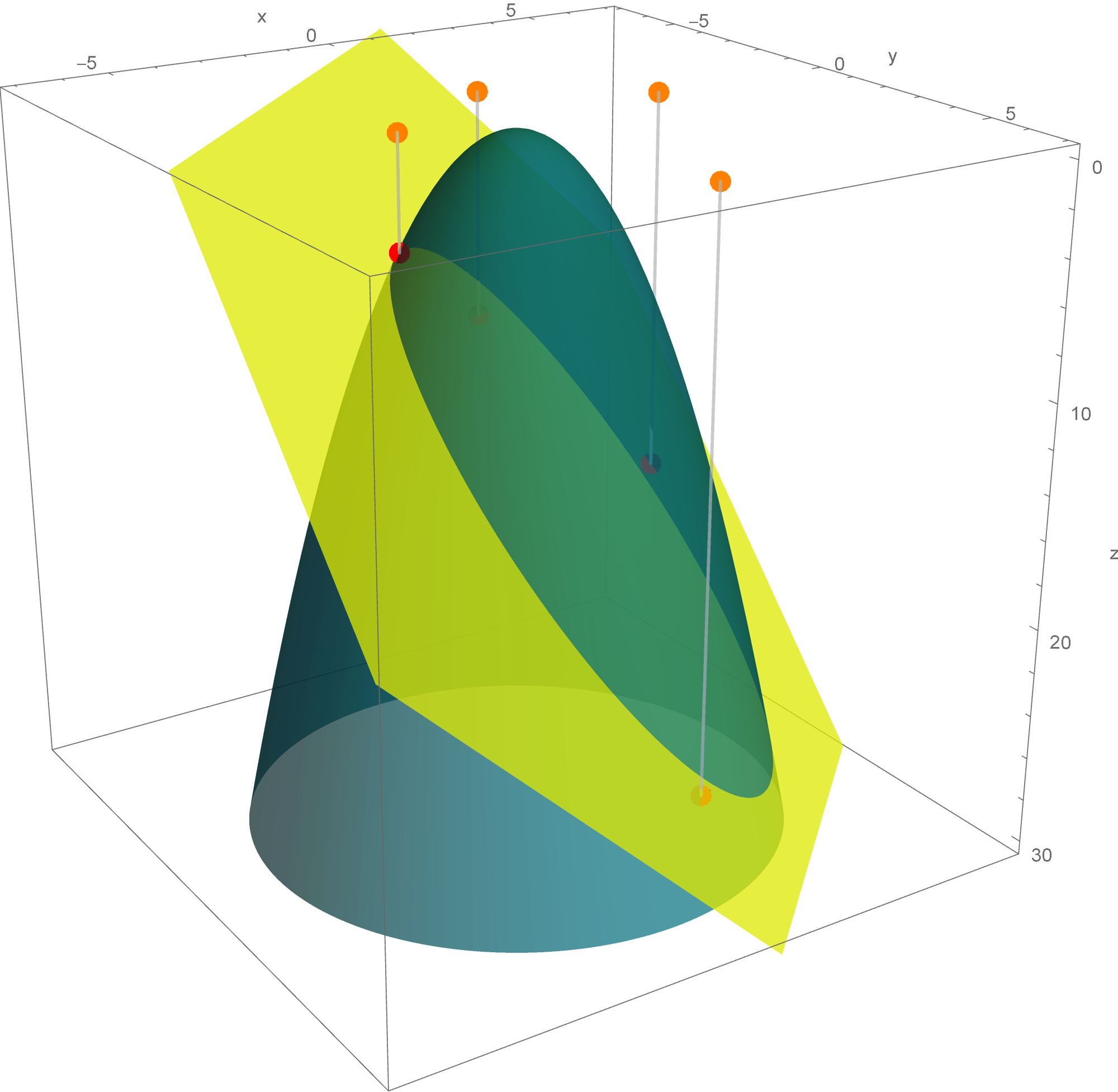

- T4(I) The above introduced change of coordinates yields the following set equality \[ \bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = c \bigr\} = \left\{ \mathbf{x} \in \mathbb{R}^3 \, : \bigl[ \mathbf{x}\bigr]_{\mathcal{B}} = \left[\! \begin{array}{c} y_1 \\ y_2 \\ y_3\end{array} \!\right] \quad \text{and} \quad \, y_1^2 + y_2^2 + 4 y_3^2 = c \right\} \] which holds for each $c \in \mathbb{R}.$ Since \[ \bigl\{ \mathbf{y} \in \mathbb{R}^3 \, : \, y_1^2 + y_2^2 + 4 y_3^2 = -1 \bigr\} \] is the empty set, the stated set equality with $c = -1$ yields that \[ \bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = -1 \bigr\} \] is the empty set.

- T4(II) Since the set \[ \bigl\{ \mathbf{y} \in \mathbb{R}^3 \, : \, y_1^2 + y_2^2 + 4 y_3^2 = 0 \bigr\} \] is a singleton set consisting of the zero vector, the stated set equality with $c = 0$ yields that \[ \bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, Q(\mathbf{x}) = 0 \bigr\} \] is a singleton set consisting of the zero vector.

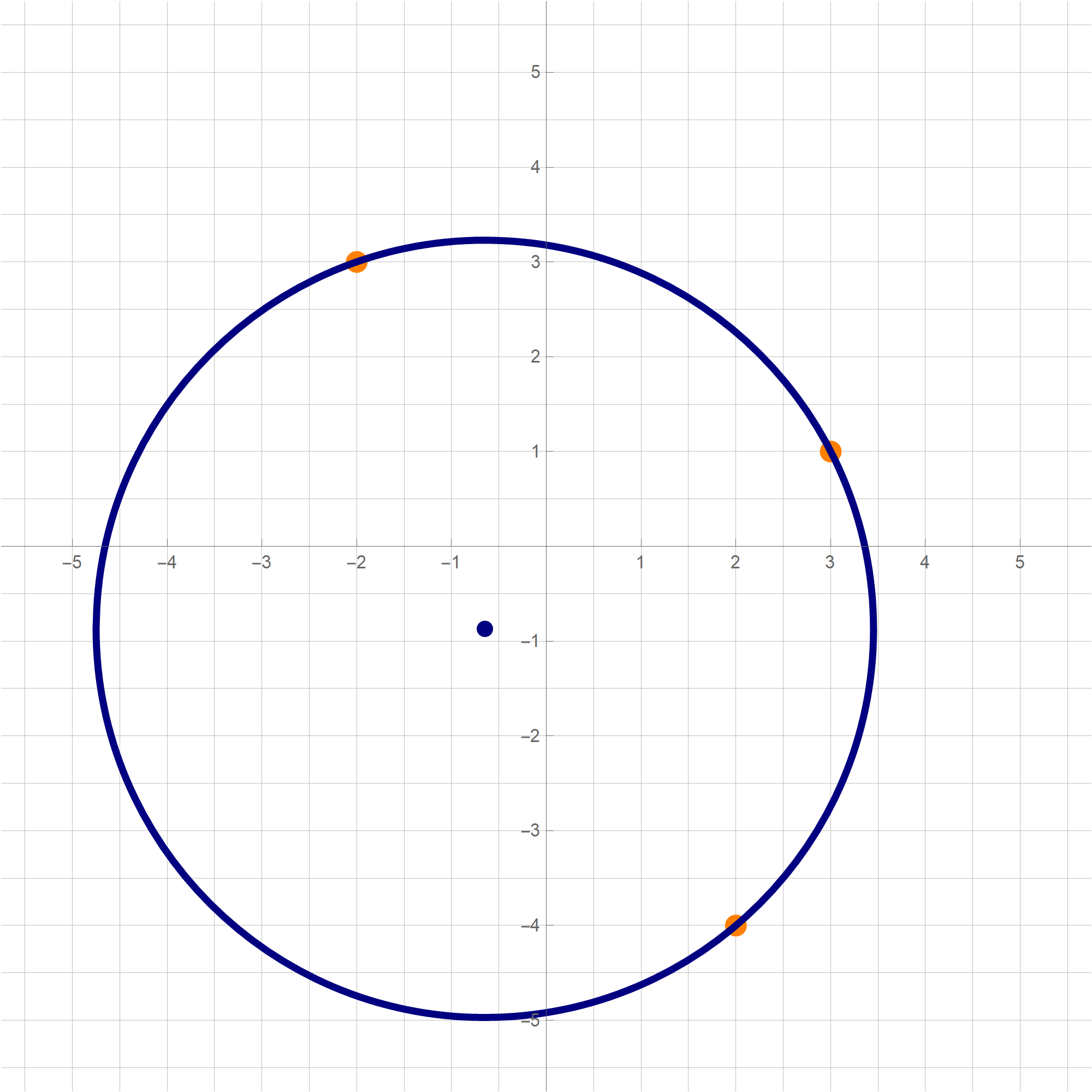

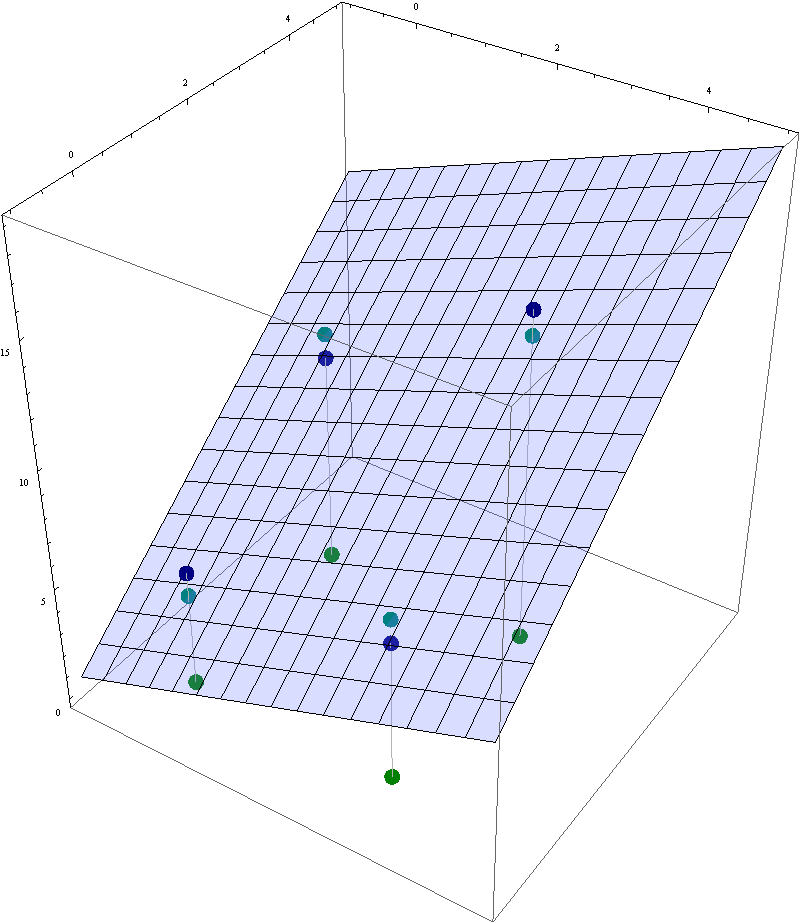

- T4(III) The set \[ \bigl\{ \mathbf{y} \in \mathbb{R}^3 \, : \, y_1^2 + y_2^2 + 4 y_3^2 = 1 \bigr\} \] is a rotated ellipsoid. This ellipsoid is obtained as the ellipse \[ \bigl\{ \mathbf{y} \in \mathbb{R}^3 \, : \, y_1^2 + 4 y_3^2 = 1, \ y_2 = 0 \bigr\} = \left\{ \left[\! \begin{array}{c} \cos\theta \\ 0 \\ \frac{1}{2} \sin\theta \end{array} \!\right] \ : \ \theta \in [0, 2 \pi) \right\}, \] which is in the $y_1y_3$-plane, rotates about the $y_3$-axis. The set equality stated in item T4(I) with $c = 1$ yields that the set \[ \bigl\{ \mathbf{x} \in \mathbb{R}^3 \ : \ Q(\mathbf{x}) = 1 \bigr\} \] is also a rotated ellipsoid obtained as the ellipse \[ \left\{ (\cos\theta)\left[\! \begin{array}{c} \frac{1}{\sqrt{2}} \\ 0 \\ -\frac{1}{\sqrt{2}} \end{array} \!\right] + \frac{1}{2} (\sin\theta ) \left[\! \begin{array}{c} \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \end{array} \!\right] \ : \ \theta \in [0, 2 \pi) \right\}, \] which is in the plane spanned by the vectors \[ \left[\! \begin{array}{c} \frac{1}{\sqrt{2}} \\ 0 \\ -\frac{1}{\sqrt{2}} \end{array} \!\right], \quad \left[\! \begin{array}{c} \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \end{array} \!\right], \] rotates about the line determined by the vector $\displaystyle \left[\! \begin{array}{c} \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \end{array} \!\right].$ Notice that the intersection of this ellipsoid and the plane spanned by the vectors \[ \left[\! \begin{array}{c} \frac{1}{\sqrt{2}} \\ 0 \\ -\frac{1}{\sqrt{2}} \end{array} \!\right] \quad \text{and} \quad \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ -\frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right] \] is the unit circle \[ \left\{ (\cos\theta) \left[\! \begin{array}{c} \frac{1}{\sqrt{2}} \\ 0 \\ -\frac{1}{\sqrt{2}} \end{array} \!\right] + (\sin\theta) \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ -\frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right] \ : \ \theta \in [0, 2 \pi) \right\}. \]
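The description of the ellipsoid as a rotated ellipse can be checked on sample points. In the sketch below, the names `v1`, `v2`, `v3` and `ellipsoid_point` are hypothetical; the vectors are the elements of $\mathcal{B}$ from the text, and the rotation about the third basis vector is modeled, as an assumption, by the angle $\varphi$ in the plane of the first two.

```python
import math

A = [[2, 1, 1], [1, 2, 1], [1, 1, 2]]
s2, s3, s6 = math.sqrt(2), math.sqrt(3), math.sqrt(6)
v1 = [1/s2, 0.0, -1/s2]      # eigenvalue 1
v2 = [1/s6, -2/s6, 1/s6]     # eigenvalue 1
v3 = [1/s3, 1/s3, 1/s3]      # eigenvalue 4

def quad_form(A, x):
    n = len(x)
    return sum(x[i] * A[i][j] * x[j] for i in range(n) for j in range(n))

def ellipsoid_point(theta, phi):
    """Rotate the ellipse point cos(theta) v1 + (1/2) sin(theta) v3 by phi about v3."""
    y = [math.cos(theta) * math.cos(phi),
         math.cos(theta) * math.sin(phi),
         0.5 * math.sin(theta)]
    return [y[0] * a + y[1] * b + y[2] * c for a, b, c in zip(v1, v2, v3)]

angles = [2 * math.pi * k / 10 for k in range(10)]
# All sampled points lie on the level surface Q(x) = 1.
assert all(math.isclose(quad_form(A, ellipsoid_point(th, ph)), 1.0, abs_tol=1e-12)
           for th in angles for ph in angles)

# With theta = 0 the points form the unit circle in the plane of v1 and v2.
for ph in angles:
    x = ellipsoid_point(0.0, ph)
    assert math.isclose(sum(c * c for c in x), 1.0, abs_tol=1e-12)
```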

- T3 Since the change of coordinate matrices $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$ and $\displaystyle \underset{\mathcal{B}\leftarrow\mathcal{E}}{P}$ are orthogonal matrices, we have \[ \| \mathbf{y} \|^2 = \mathbf{y}^\top \mathbf{y} = \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}\right)^\top \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}\right) = \mathbf{x}^\top \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P}\right)^\top \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x} = \mathbf{x}^\top \mathbf{x} = \|\mathbf{x} \|^2. \] Therefore \[ S = \bigl\{ Q(\mathbf{x}) \, : \, \mathbf{x} \in \mathbb{R}^3, \ \| \mathbf{x} \| = 1 \bigr\} = \bigl\{y_1^2 + y_2^2 + 4 y_3^2 \, : \, \| \mathbf{y} \| = 1, \ \mathbf{y} \in\mathbb{R}^3 \bigr\}. \] Since whenever $y_1^2 + y_2^2 + y_3^2 = 1$ we have \[ 1 = y_1^2 + y_2^2 + y_3^2 \leq y_1^2 + y_2^2 + 4 y_3^2 \leq 4 y_1^2 + 4 y_2^2 + 4 y_3^2 = 4, \] we deduce that $\min S = 1$ and $\max S = 4$. On the unit sphere, the form $y_1^2 + y_2^2 + 4 y_3^2$ takes the value $1$ when $(y_1,y_2,y_3) = (\cos \theta, \sin \theta, 0)$ for some $\theta \in [0, 2\pi)$ and the value $4$ when $(y_1,y_2,y_3) = (0,0,1)$ or $(y_1,y_2,y_3) = (0,0,-1)$. Using the change of coordinates matrix $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$ we conclude that the minimum value, $1$, of $S$ is taken on the circle on the unit sphere in $\mathbb{R}^3$ given by \[ \left\{ (\cos\theta) \left[\! \begin{array}{c} \frac{1}{\sqrt{2}} \\ 0 \\ -\frac{1}{\sqrt{2}} \end{array} \!\right] + (\sin\theta) \left[\! \begin{array}{c} \frac{1}{\sqrt{6}} \\ -\frac{2}{\sqrt{6}} \\ \frac{1}{\sqrt{6}} \end{array} \!\right] \ : \ \theta \in [0, 2 \pi) \right\}. \] The situation with the maximum value $4$ of $S$ is simpler; this value is taken at two vectors: \[ \bigl\{ \mathbf{x} \in \mathbb{R}^3 \, : \, \| \mathbf{x} \| = 1, \ Q(\mathbf{x}) = 4 \bigr\} = \left\{\left[\! \begin{array}{c} \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \end{array} \!\right], - \left[\! \begin{array}{c} \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \\ \frac{1}{\sqrt{3}} \end{array} \!\right] \right\}. \]

- Suggested problems for Section 7.3: 1, 3, 5, 9, 11, 12

-

When studying a specific quadratic form $Q$ we need to perform the following four Tasks:

- T1 Express the quadratic form $Q$ using a symmetric matrix $A$ as $Q(\mathbf{x}) = \mathbf{x}^\top\!A \mathbf{x}$ where $\mathbf{x}\in \mathbb{R}^n.$

- T2 Classify $Q$ based on the quadruplicity introduced in the post on Thursday, November 21, 2024: positive semidefinite, negative semidefinite, indefinite. Specify whether the form is positive definite or negative definite if applicable.

- T3 Solve the following constrained optimization problem: Consider the set of real numbers \[ S = \bigl\{ Q(\mathbf{x}) \, : \ \mathbf{x}\in \mathbb{R}^n, \ \ \| \mathbf{x} \| = 1 \bigr\}. \] Determine $\min S$ and $\max S$, and describe the sets: \[ \bigl\{ \mathbf{x} \in \mathbb{R}^n \, : \ \| \mathbf{x} \| = 1, \ \ Q(\mathbf{x}) = \min S \bigr\}, \qquad \bigl\{ \mathbf{x} \in \mathbb{R}^n \, : \ \| \mathbf{x} \| = 1, \ \ Q(\mathbf{x}) = \max S \bigr\}. \]

- T4 For \(n \in \{2,3\}\), provide a detailed description of the sets: \[ \bigl\{ \mathbf{x} \in \mathbb{R}^n \, : \, Q(\mathbf{x}) = -1 \bigr\}, \quad \bigl\{ \mathbf{x} \in \mathbb{R}^n \, : \, Q(\mathbf{x}) = 0 \bigr\}, \quad \bigl\{ \mathbf{x} \in \mathbb{R}^n \, : \, Q(\mathbf{x}) = 1 \bigr\}. \]
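For a form on $\mathbb{R}^2$, Tasks T2 and T3 reduce to finding the two eigenvalues of the symmetric matrix from T1. The sketch below is a minimal illustration, not the method of the textbook: the function names `eigenvalues_sym2` and `classify` and the tolerance `tol` are my own choices. It uses the closed-form eigenvalues of $\begin{bmatrix} a & b \\ b & d \end{bmatrix}$ and the fact, used in the examples, that $\min S$ and $\max S$ are the smallest and the largest eigenvalue.

```python
import math

def eigenvalues_sym2(a, b, d):
    """Eigenvalues (smaller first) of the symmetric matrix [[a, b], [b, d]]."""
    mean = (a + d) / 2
    radius = math.hypot((a - d) / 2, b)
    return mean - radius, mean + radius

def classify(lam_min, lam_max, tol=1e-12):
    """Classify a quadratic form from its smallest and largest eigenvalue."""
    if abs(lam_min) <= tol and abs(lam_max) <= tol:
        return "zero form"
    if lam_min > tol:
        return "positive definite"
    if lam_max < -tol:
        return "negative definite"
    if lam_min >= -tol:
        return "positive semidefinite"
    if lam_max <= tol:
        return "negative semidefinite"
    return "indefinite"

# Hypothetical usage with the form x1^2 + 2 x1 x2 + x2^2 = (x1 + x2)^2.
lam_min, lam_max = eigenvalues_sym2(1, 1, 1)
print(classify(lam_min, lam_max))  # positive semidefinite
```

The tolerance guards against rounding when an eigenvalue is zero in exact arithmetic; its value is an arbitrary choice and may need adjusting for badly scaled matrices.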

-

To perform Task T4 for \(n = 2\) it is essential to understand the curves: \[ x_1^2 + x_2^2 = 1, \quad x_1^2 + x_2^2 = 0, \quad x_1^2 + x_2^2 = -1, \] and \[ x_1^2 - x_2^2 = 1, \quad x_1^2 - x_2^2 = 0, \quad x_1^2 - x_2^2 = -1, \] and how these curves change when there are coefficients in front of the squares \(x_1^2\) and \(x_2^2\).

To perform Task T4 for \(n = 3\) it is essential to understand the surfaces: \[ x_1^2 + x_2^2 + x_3^2 = 1, \quad x_1^2 + x_2^2 + x_3^2 = 0, \quad x_1^2 + x_2^2 + x_3^2 = -1, \] and \[ x_1^2 + x_2^2 - x_3^2 = 1, \quad x_1^2 + x_2^2 - x_3^2 = 0, \quad x_1^2 + x_2^2 - x_3^2 = -1, \] and how these surfaces change when there are coefficients in front of the squares \(x_1^2\), \(x_2^2\), and \(x_3^2\); in particular when one of the coefficients is \(0\).

-

In Section 7.2 in the book the author does not discuss quadratic forms with three variables. Here are some animations that might help you understand the shape of the level surfaces of the quadratic form $x_1^2 + x_2^2 - x_3^2$. Here I show the surfaces in ${\mathbb R}^3$ with equations $x_1^2 + x_2^2 - x_3^2 = c$ for different values of $c$. For $c \neq 0$ these surfaces are called hyperboloids; for $c = 0$ the surface is a cone. You can read more at the Wikipedia Hyperboloid page. One sheet hyperboloids are often encountered in art; see the Wikipedia pages Hyperboloid structure and List of hyperboloid structures, and do not miss the Gallery at the bottom of the latter page.

Five of the above level surfaces at different levels of opacity.

- In the post on Friday, November 22, I performed Tasks T1, T2, and T3 for one of the most popular quadratic forms in \(\mathbb{R}^4\): the determinant of a \(2\times 2\) matrix \[ \det \begin{bmatrix} a & b \\ c & d \end{bmatrix} = ad - bc, \quad a,b,c,d \in \mathbb{R}. \]

- Below are two examples where I perform Tasks T1, T2, T3, and T4 for quadratic forms on \(\mathbb{R}^2\).

-

Example 1.

In this item we consider the quadratic form \[ Q(x_1,x_2) = 6 x_1^2 - 4 x_2 x_1 + 3 x_2^2 \quad \text{where} \quad x_1, x_2 \in \mathbb{R}. \]

- T1 We have \[ Q(x_1,x_2) = \bigl[ x_1 \ \ x_2 \bigr] \left[\! \begin{array}{cc} 6 & -2 \\ -2 & 3 \end{array} \!\right] \left[\! \begin{array}{c} x_1 \\ x_2 \end{array} \!\right] = 6 x_1^2 - 4 x_2 x_1 + 3 x_2^2 \quad \text{where} \quad x_1, x_2 \in \mathbb{R}. \]

- T2 Clearly the quadratic form $Q$ is not a zero form. To classify $Q$ as positive semidefinite, negative semidefinite, or indefinite we orthogonally diagonalize the matrix of this quadratic form: \[ \left[\! \begin{array}{cc} 6 & -2 \\ -2 & 3 \end{array} \!\right] = \left[\! \begin{array}{cc} \frac{1}{\sqrt{5}} & -\frac{2}{\sqrt{5}} \\ \frac{2}{\sqrt{5}} & \frac{1}{\sqrt{5}} \end{array} \!\right] \left[\! \begin{array}{cc} 2 & 0 \\ 0 & 7 \end{array} \!\right] \left[\! \begin{array}{cc} \frac{1}{\sqrt{5}} & -\frac{2}{\sqrt{5}} \\ \frac{2}{\sqrt{5}} & \frac{1}{\sqrt{5}} \end{array} \!\right]^\top. \] Let us introduce two bases \[ \mathcal{B} = \left\{ \left[\! \begin{array}{c} \frac{1}{\sqrt{5}} \\ \frac{2}{\sqrt{5}} \end{array} \!\right], \left[\! \begin{array}{c} -\frac{2}{\sqrt{5}} \\ \frac{1}{\sqrt{5}} \end{array} \!\right] \right\} \qquad \text{and} \qquad \mathcal{E} = \left\{ \left[\! \begin{array}{c} 1 \\ 0 \end{array} \!\right], \left[\! \begin{array}{c} 0 \\ 1 \end{array} \!\right] \right\}. \] The above orthogonal diagonalization suggests a very useful change of coordinates: \[ \mathbf{y} = \left[\! \begin{array}{cc} \frac{1}{\sqrt{5}} & -\frac{2}{\sqrt{5}} \\ \frac{2}{\sqrt{5}} & \frac{1}{\sqrt{5}} \end{array} \!\right]^\top \mathbf{x} = \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}, \qquad \mathbf{x} = \left[\! \begin{array}{cc} \frac{1}{\sqrt{5}} & -\frac{2}{\sqrt{5}} \\ \frac{2}{\sqrt{5}} & \frac{1}{\sqrt{5}} \end{array} \!\right] \mathbf{y} = \underset{\mathcal{E}\leftarrow\mathcal{B}}{P} \mathbf{y}. \]

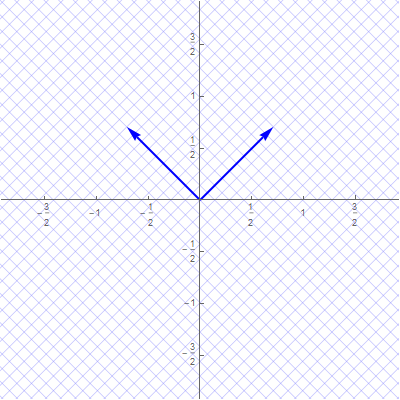

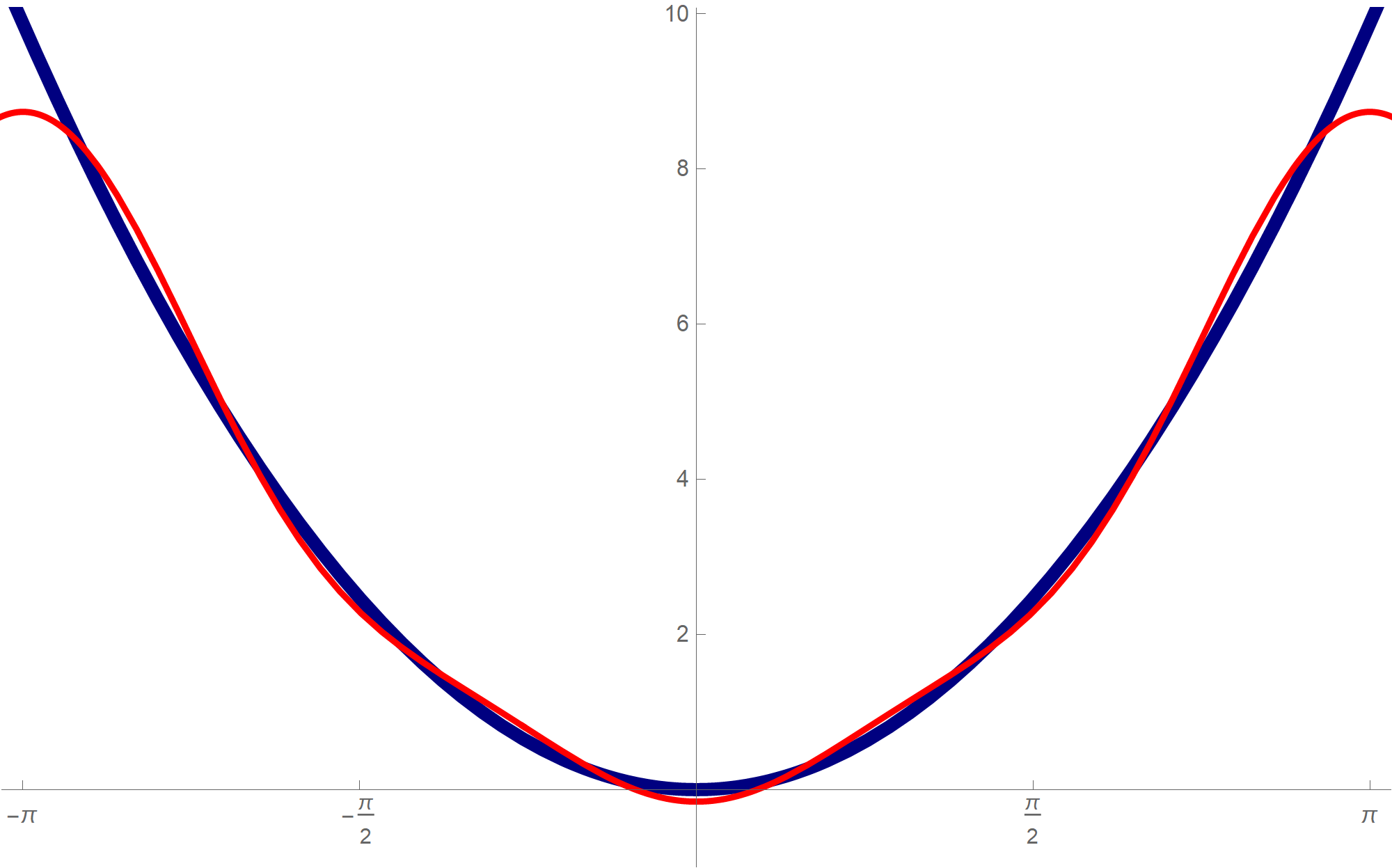

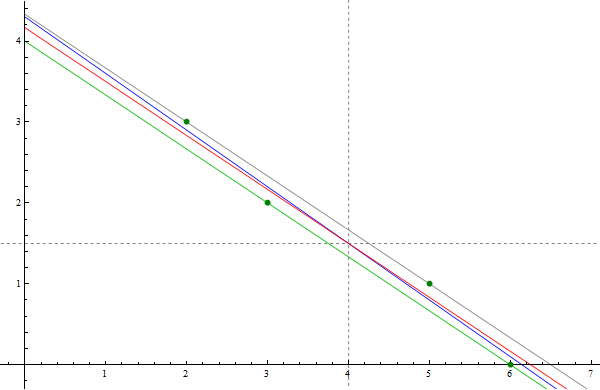

The coordinates $\mathbf{y}$ are the coordinates relative to the basis $\mathcal{B}$ which consists of the blue vectors in the next image.

With the change of coordinates \[ \mathbf{y} = \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}, \qquad \mathbf{x} = \underset{\mathcal{E}\leftarrow\mathcal{B}}{P} \mathbf{y}, \] the quadratic form $Q$ simplifies as follows \[ 6 x_1^2 - 4 x_2 x_1 + 3 x_2^2 = 2 y_1^2 + 7 y_2^2. \]

Clearly $2 y_1^2 + 7 y_2^2 \geq 0$ for all $y_1, y_2 \in \mathbb{R}$ and $2 y_1^2 + 7 y_2^2 = 0$ if and only if $(y_1,y_2) =(0,0).$ Therefore, the given quadratic form is positive definite.

- T4 The above introduced change of coordinates yields \[ \bigl\{ \mathbf{x} \in \mathbb{R}^2 \, : \, Q(\mathbf{x}) = -1 \bigr\} = \left\{ \mathbf{x} \in \mathbb{R}^2 \, : \, \bigl[\mathbf{x}\bigr]_{\mathcal{B}} = \left[\! \begin{array}{c} y_1 \\ y_2 \end{array} \!\right] \quad \text{and} \quad 2 y_1^2 + 7 y_2^2 = -1 \right\} \] (this set is clearly an empty set), \[ \bigl\{ \mathbf{x} \in \mathbb{R}^2 \, : \, Q(\mathbf{x}) = 0 \bigr\} = \left\{ \mathbf{x} \in \mathbb{R}^2 \, : \, \bigl[\mathbf{x}\bigr]_{\mathcal{B}} = \left[\! \begin{array}{c} y_1 \\ y_2 \end{array} \!\right] \quad \text{and} \quad 2 y_1^2 + 7 y_2^2 = 0 \right\} \] (this set clearly consists of the zero vector only), and \[ \bigl\{ \mathbf{x} \in \mathbb{R}^2 \, : \, Q(\mathbf{x}) = 1 \bigr\} = \left\{ \mathbf{x} \in \mathbb{R}^2 \, : \, \bigl[\mathbf{x}\bigr]_{\mathcal{B}} = \left[\! \begin{array}{c} y_1 \\ y_2 \end{array} \!\right] \quad \text{and} \quad 2 y_1^2 + 7 y_2^2 = 1 \right\}. \] The set \[ \bigl\{ (y_1,y_2) \in \mathbb{R}^2 \, : \, 2 y_1^2 + 7 y_2^2 = 1 \bigr\} \] is an ellipse. The vertices and co-vertices of this ellipse in the coordinate system relative to the basis $\mathcal{B}$ are \[ \text{vertices}: \left(\frac{\sqrt{2}}{2}, 0 \right) , \ \left(-\frac{\sqrt{2}}{2}, 0 \right), \qquad \text{co-vertices}: \left(0, \frac{\sqrt{7}}{7} \right) , \ \left(0, -\frac{\sqrt{7}}{7}\right). \] To get the coordinates of these points in the original coordinate system relative to the basis $\mathcal{E}$ we apply the change of coordinates matrix $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$: \[ \text{vertices}: \left(\frac{\sqrt{10}}{10},\frac{\sqrt{10}}{5} \right) , \ \left(-\frac{\sqrt{10}}{10}, - \frac{\sqrt{10}}{5} \right), \] \[ \text{co-vertices}: \left(-\frac{2 \sqrt{35}}{35}, \frac{\sqrt{35}}{35} \right) , \ \left(\frac{2 \sqrt{35}}{35}, -\frac{\sqrt{35}}{35} \right). \]
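The conversion of the vertices and co-vertices from $\mathcal{B}$-coordinates to the original coordinates can be reproduced with a short computation. The sketch below is an illustration with hypothetical names (`from_B`, `vertex`, `co_vertex`); the matrix `P` is the matrix $\underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$ copied from the text.

```python
import math

s5 = math.sqrt(5)
# P_{E<-B}: its columns are the vectors of the basis B.
P = [[1/s5, -2/s5],
     [2/s5, 1/s5]]

def from_B(y):
    """Return x = P_{E<-B} y."""
    return [P[0][0] * y[0] + P[0][1] * y[1],
            P[1][0] * y[0] + P[1][1] * y[1]]

def Q(x):
    return 6 * x[0]**2 - 4 * x[0] * x[1] + 3 * x[1]**2

vertex = from_B([1 / math.sqrt(2), 0.0])      # y = (sqrt(2)/2, 0)
co_vertex = from_B([0.0, 1 / math.sqrt(7)])   # y = (0, sqrt(7)/7)

# Both points lie on the level curve Q(x) = 1.
assert math.isclose(Q(vertex), 1.0)
assert math.isclose(Q(co_vertex), 1.0)

# They agree with the closed forms stated in the text.
assert math.isclose(vertex[0], math.sqrt(10) / 10)
assert math.isclose(vertex[1], math.sqrt(10) / 5)
assert math.isclose(co_vertex[0], -2 * math.sqrt(35) / 35)
assert math.isclose(co_vertex[1], math.sqrt(35) / 35)
```

The remaining vertex and co-vertex are the negatives of the two points computed here.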

- T3 Since the change of coordinate matrices $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$ and $\displaystyle \underset{\mathcal{B}\leftarrow\mathcal{E}}{P}$ are orthogonal we have \begin{align*} \| \mathbf{y} \|^2 & = \mathbf{y}^\top \mathbf{y} \\ & = \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}\right)^\top \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}\right) \\ & = \mathbf{x}^\top \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P}\right)^\top \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x} \\ & = \mathbf{x}^\top \mathbf{x} = \|\mathbf{x} \|^2. \end{align*} Therefore \begin{align*} S & = \Bigl\{ Q(\mathbf{x}) \, : \, \mathbf{x} \in \mathbb{R}^2, \ \| \mathbf{x} \| = 1 \Bigr\} \\ & = \Bigl\{ 2 y_1^2 + 7 y_2^2 \, : \, y_1^2 + y_2^2 = 1, \ y_1, y_2 \in\mathbb{R} \Bigr\}. \end{align*} Since \[ 2 = 2 y_1^2 + 2 y_2^2 \leq 2 y_1^2 + 7 y_2^2 \leq 7 y_1^2 + 7 y_2^2 = 7 \] whenever $y_1^2 + y_2^2 = 1$, we have that $\min S = 2$ and $\max S = 7$. On the unit circle, the form $2 y_1^2 + 7 y_2^2$ takes the value $2$ when $y_1 = 1, y_2 =0$ and when $y_1 = -1, y_2 =0$, and the value $7$ when $y_1 = 0, y_2 = 1$ and when $y_1 = 0, y_2 = -1$. Using the change of coordinates matrix $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$ we conclude that \[ \bigl\{ \mathbf{x} \in \mathbb{R}^2 \, : \, \| \mathbf{x} \| = 1, \ Q(\mathbf{x}) = 2 \bigr\} = \left\{ \left[\! \begin{array}{c} \frac{1}{\sqrt{5}} \\ \frac{2}{\sqrt{5}} \end{array} \!\right], - \left[\! \begin{array}{c} \frac{1}{\sqrt{5}} \\ \frac{2}{\sqrt{5}} \end{array} \!\right] \right\} \] and \[ \bigl\{ \mathbf{x} \in \mathbb{R}^2 \, : \, \| \mathbf{x} \| = 1, \ Q(\mathbf{x}) = 7 \bigr\} = \left\{ \left[\! \begin{array}{c} \frac{-2}{\sqrt{5}} \\ \frac{1}{\sqrt{5}} \end{array} \!\right], \left[\! \begin{array}{c} \frac{2}{\sqrt{5}} \\ \frac{-1}{\sqrt{5}} \end{array} \!\right] \right\}. \]
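The constrained optimization in T3 can be approximated by brute force on the unit circle. The sketch below is only a numerical cross-check of the values $\min S = 2$ and $\max S = 7$; the grid size `N` and the variable names are arbitrary choices of mine, and the approach is a plain grid search rather than the eigenvalue argument used in the text.

```python
import math

def Q(x1, x2):
    return 6 * x1**2 - 4 * x1 * x2 + 3 * x2**2

N = 3600  # number of sample points on the unit circle
samples = [(Q(math.cos(t), math.sin(t)), t)
           for t in (2 * math.pi * k / N for k in range(N))]

q_min, t_min = min(samples)
q_max, t_max = max(samples)

# The sampled extremes are close to the eigenvalues 2 and 7.
assert abs(q_min - 2) < 1e-4
assert abs(q_max - 7) < 1e-4

# The minimizer is close to +-(1/sqrt(5), 2/sqrt(5)),
# the maximizer is close to +-(-2/sqrt(5), 1/sqrt(5)).
s5 = math.sqrt(5)
assert abs(abs(math.cos(t_min)) - 1 / s5) < 5e-3
assert abs(abs(math.sin(t_min)) - 2 / s5) < 5e-3
assert abs(abs(math.cos(t_max)) - 2 / s5) < 5e-3
assert abs(abs(math.sin(t_max)) - 1 / s5) < 5e-3
```

A grid search only approximates the extreme values, which is why the comparisons use tolerances; the exact values come from the inequality in T3.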

-

Example 2.

In this item we consider the quadratic form \[ Q(x_1,x_2) = x_1^2 + 6 x_2 x_1 + x_2^2 \quad \text{where} \quad x_1, x_2 \in \mathbb{R}. \]

- T1 We have \[ Q(x_1,x_2) = \bigl[ x_1 \ \ x_2 \bigr] \left[\! \begin{array}{cc} 1 & 3 \\ 3 & 1 \end{array} \!\right] \left[\! \begin{array}{c} x_1 \\ x_2 \end{array} \!\right] = x_1^2 + 6 x_2 x_1 + x_2^2 \quad \text{where} \quad x_1, x_2 \in \mathbb{R}. \]

- T2

Clearly the quadratic form $Q$ is not a zero form. To classify $Q$ as positive semidefinite, negative semidefinite, or indefinite, we orthogonally diagonalize the matrix of this quadratic form: \[ \left[\! \begin{array}{cc} 1 & 3 \\ 3 & 1 \end{array} \!\right] = \left[\! \begin{array}{cc} \frac{1}{\sqrt{2}} & -\frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{2}} \end{array} \!\right] \left[\! \begin{array}{cc} 4 & 0 \\ 0 & -2 \end{array} \!\right] \left[\! \begin{array}{cc} \frac{1}{\sqrt{2}} & -\frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{2}} \end{array} \!\right]^\top. \]
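As a quick numerical sanity check of this factorization, here is a minimal Python sketch. The helper names `matmul` and `transpose` are illustrative choices made for this sketch only. It rebuilds the matrix of the form from the orthogonal factor and the diagonal factor, and it also confirms that the columns of the orthogonal factor are orthonormal.

```python
import math

def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]

def transpose(X):
    """Transpose a matrix given as a list of rows."""
    return [list(row) for row in zip(*X)]

s = 1 / math.sqrt(2)
U = [[s, -s], [s, s]]          # columns are the unit eigenvectors
D = [[4.0, 0.0], [0.0, -2.0]]  # eigenvalues on the diagonal

UtU = matmul(transpose(U), U)           # expected: the identity matrix
A = matmul(matmul(U, D), transpose(U))  # expected: [[1, 3], [3, 1]]

print(UtU)
print(A)
```

Up to rounding error, the first printed matrix should be the identity and the second should be the matrix of $Q$.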

Let us introduce two bases \[ \mathcal{B} = \left\{ \left[\! \begin{array}{c} \frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} \end{array} \!\right], \left[\! \begin{array}{c} -\frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} \end{array} \!\right] \right\} \qquad \text{and} \qquad \mathcal{E} = \left\{ \left[\! \begin{array}{c} 1 \\ 0 \end{array} \!\right], \left[\! \begin{array}{c} 0 \\ 1 \end{array} \!\right] \right\}. \]

The above orthogonal diagonalization suggests a very useful change of coordinates: \[ \mathbf{y} = \left[\! \begin{array}{cc} \frac{1}{\sqrt{2}} & -\frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{2}} \end{array} \!\right]^\top \mathbf{x} = \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}, \qquad \mathbf{x} = \left[\! \begin{array}{cc} \frac{1}{\sqrt{2}} & -\frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{2}} \end{array} \!\right] \mathbf{y} = \underset{\mathcal{E}\leftarrow\mathcal{B}}{P} \mathbf{y}. \]

The coordinates $\mathbf{y}$ are the coordinates relative to the basis $\mathcal{B}$, which consists of the blue vectors in the images below.

With the change of coordinates \[ \mathbf{y} = \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}, \qquad \mathbf{x} = \underset{\mathcal{E}\leftarrow\mathcal{B}}{P} \mathbf{y}, \] the quadratic form $Q$ simplifies as follows \[ x_1^2 + 6 x_2 x_1 + x_2^2 = 4 y_1^2 - 2 y_2^2. \] Clearly $4 y_1^2 - 2 y_2^2$ is an indefinite form taking the value $4$ at $(y_1,y_2) = (1,0)$ and the value $-2$ at $(y_1,y_2) = (0,1).$
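For readers who want to see the simplification without matrix algebra, here is a direct substitution. It is only a worked check of the identity stated above, using $\mathbf{x} = \underset{\mathcal{E}\leftarrow\mathcal{B}}{P} \mathbf{y}$ written out in components, that is, $x_1 = \tfrac{1}{\sqrt{2}}(y_1 - y_2)$ and $x_2 = \tfrac{1}{\sqrt{2}}(y_1 + y_2)$: \begin{align*} x_1^2 + x_2^2 & = \tfrac{1}{2} (y_1 - y_2)^2 + \tfrac{1}{2} (y_1 + y_2)^2 = y_1^2 + y_2^2, \\ 6 x_2 x_1 & = 6 \cdot \tfrac{1}{2} (y_1 + y_2)(y_1 - y_2) = 3 y_1^2 - 3 y_2^2, \\ x_1^2 + 6 x_2 x_1 + x_2^2 & = \bigl( y_1^2 + y_2^2 \bigr) + \bigl( 3 y_1^2 - 3 y_2^2 \bigr) = 4 y_1^2 - 2 y_2^2. \end{align*}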

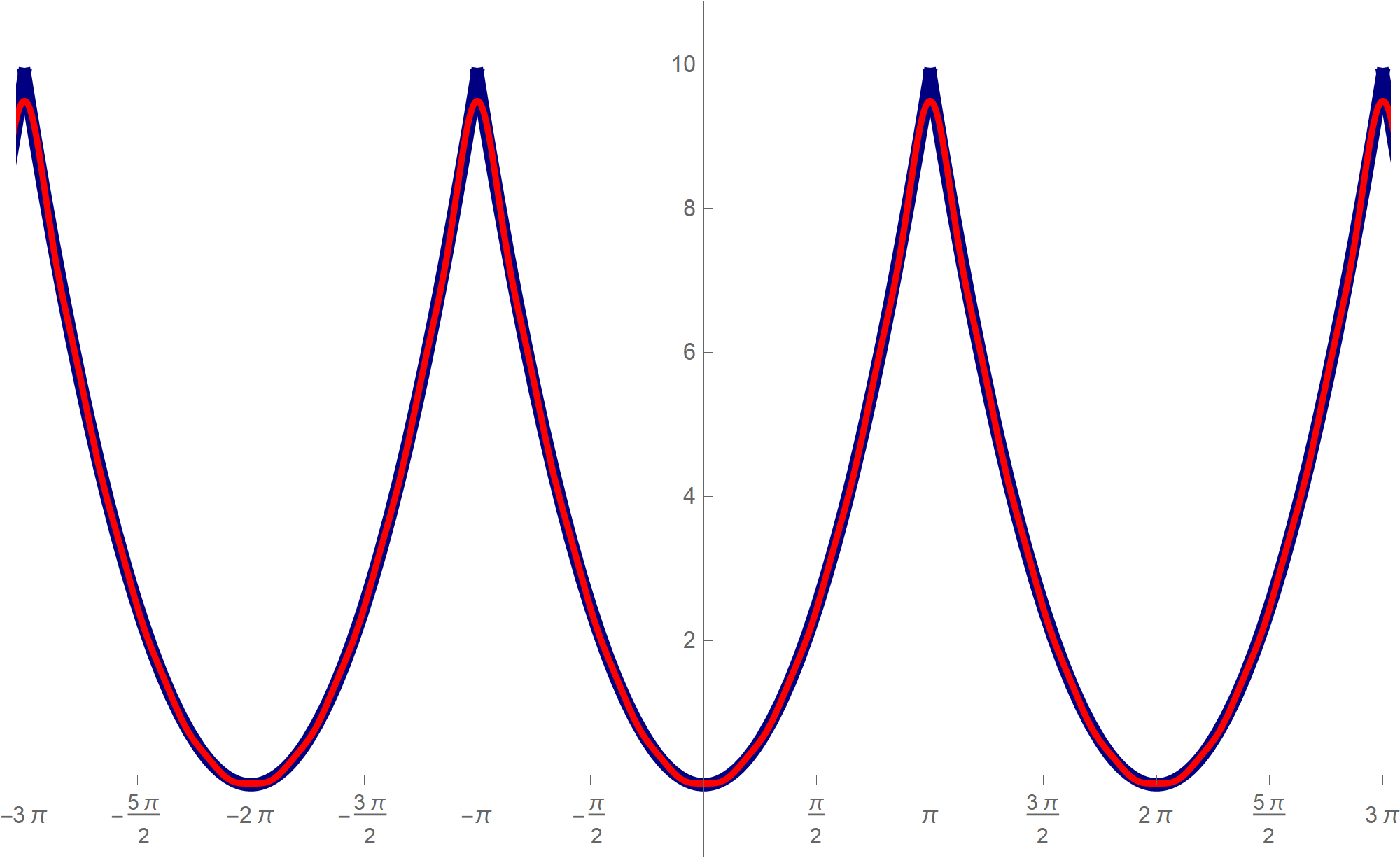

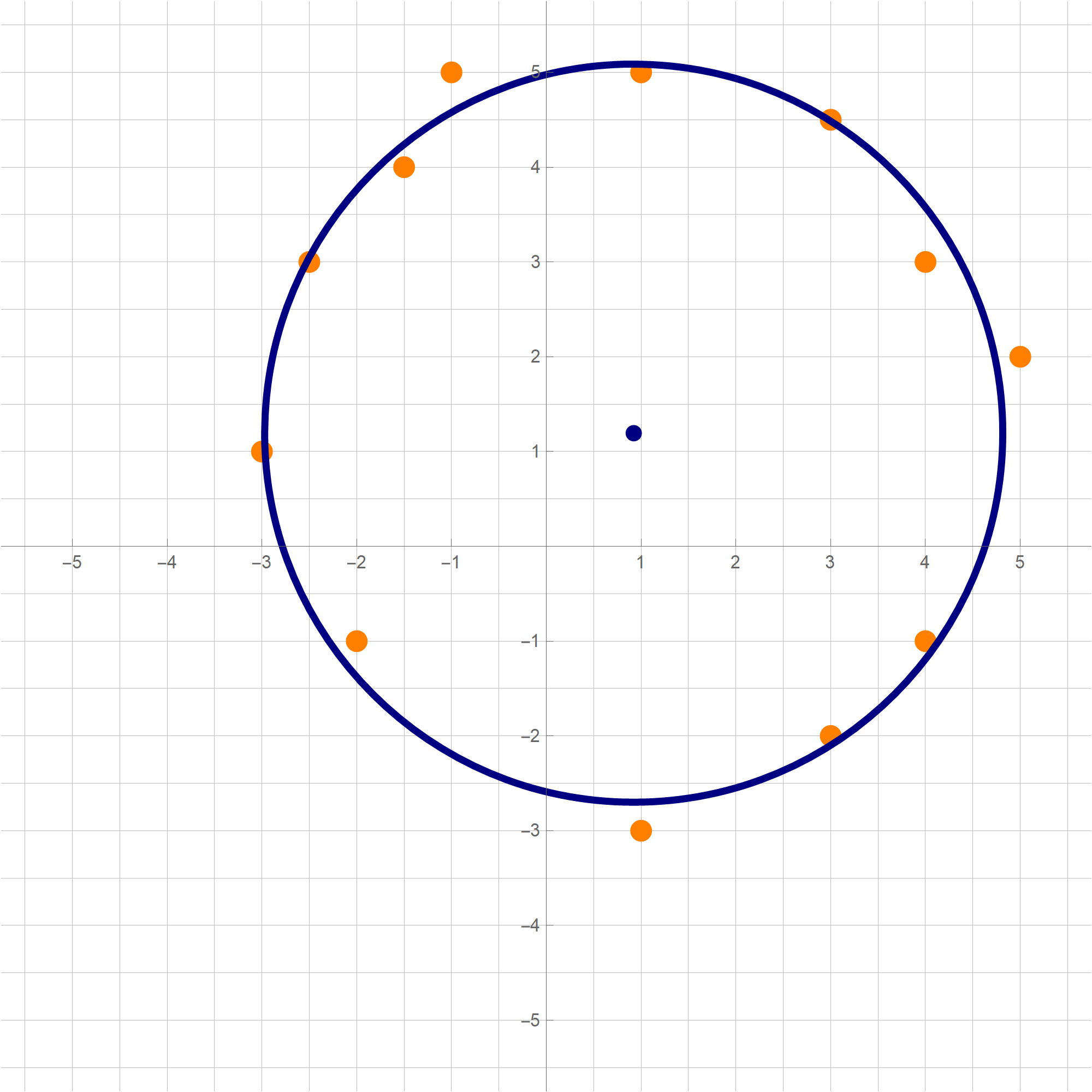

- T4

The change of coordinates introduced above yields \[ \bigl\{ \mathbf{x} \in \mathbb{R}^2 \, : \, Q(\mathbf{x}) = -1 \bigr\} = \bigl\{ \mathbf{y} \in \mathbb{R}^2 \, : \, 4 y_1^2 - 2 y_2^2 = -1 \bigr\}, \] \[ \bigl\{ \mathbf{x} \in \mathbb{R}^2 \, : \, Q(\mathbf{x}) = 0 \bigr\} = \bigl\{ \mathbf{y} \in \mathbb{R}^2 \, : \, 4 y_1^2 - 2 y_2^2 = 0 \bigr\} \] and \[ \bigl\{ \mathbf{x} \in \mathbb{R}^2 \, : \, Q(\mathbf{x}) = 1 \bigr\} = \bigl\{ \mathbf{y} \in \mathbb{R}^2 \, : \, 4 y_1^2 - 2 y_2^2 = 1 \bigr\}. \]

The set \[ \bigl\{ (y_1,y_2) \in \mathbb{R}^2 \, : \, 4 y_1^2 - 2 y_2^2 = 1 \bigr\} \] is a hyperbola. The vertices of this hyperbola in the coordinate system relative to the basis $\mathcal{B}$ are \[ \text{vertices}: \left(\frac{1}{2}, 0 \right) , \ \left(-\frac{1}{2}, 0 \right) \] and the asymptotes of this hyperbola are two lines which are determined by the vectors \[ \left[\! \begin{array}{c} \frac{1}{2} \\ \frac{\sqrt{2}}{2} \end{array} \!\right] \qquad \text{and} \qquad \left[\! \begin{array}{c} \frac{1}{2} \\ -\frac{\sqrt{2}}{2} \end{array} \!\right]. \]

To get the coordinates of the vertices in the original coordinate system relative to the basis $\mathcal{E}$ we apply the change of coordinates matrix $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$: \[ \text{vertices}: \left(\frac{\sqrt{2}}{4},\frac{\sqrt{2}}{4} \right) , \ \left(-\frac{\sqrt{2}}{4},-\frac{\sqrt{2}}{4} \right), \] and the asymptotes are determined by the vectors \[ \left[\! \begin{array}{c} \frac{-2+\sqrt{2}}{4} \\ \frac{2+\sqrt{2}}{4} \end{array} \!\right] \qquad \text{and} \qquad \left[\! \begin{array}{c} \frac{2+\sqrt{2}}{4} \\ \frac{-2+\sqrt{2}}{4} \end{array} \!\right]. \]

The set \[ \bigl\{ (y_1,y_2) \in \mathbb{R}^2 \, : \, 4 y_1^2 - 2 y_2^2 = 0 \bigr\} \] is a union of two lines that go through the origin. These lines are the asymptotes of the preceding hyperbola and are determined by the vectors \[ \left[\! \begin{array}{c} \frac{1}{2} \\ \frac{\sqrt{2}}{2} \end{array} \!\right] \qquad \text{and} \qquad \left[\! \begin{array}{c} \frac{1}{2} \\ -\frac{\sqrt{2}}{2} \end{array} \!\right], \] in the coordinates relative to the basis $\mathcal{B}$. To get the coordinates in the original coordinate system relative to the basis $\mathcal{E}$ we apply the change of coordinates matrix $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$: \[ \left[\! \begin{array}{c} \frac{-2+\sqrt{2}}{4} \\ \frac{2+\sqrt{2}}{4} \end{array} \!\right] \qquad \text{and} \qquad \left[\! \begin{array}{c} \frac{2+\sqrt{2}}{4} \\ \frac{-2+\sqrt{2}}{4} \end{array} \!\right]. \]

The set \[ \bigl\{ (y_1,y_2) \in \mathbb{R}^2 \, : \, 4 y_1^2 - 2 y_2^2 = -1 \bigr\} \] is a hyperbola. The vertices of this hyperbola in the coordinate system relative to the basis $\mathcal{B}$ are \[ \text{vertices}: \left(0, \frac{\sqrt{2}}{2}\right) , \ \left(0, -\frac{\sqrt{2}}{2} \right) \] and the asymptotes of this hyperbola are two lines which are determined by the vectors \[ \left[\! \begin{array}{c} \frac{1}{2} \\ \frac{\sqrt{2}}{2} \end{array} \!\right] \qquad \text{and} \qquad \left[\! \begin{array}{c} \frac{1}{2} \\ -\frac{\sqrt{2}}{2} \end{array} \!\right]. \]

To get the coordinates of the vertices in the original coordinate system relative to the basis $\mathcal{E}$ we apply the change of coordinates matrix $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$: \[ \text{vertices}: \left(-\frac{1}{2},\frac{1}{2} \right) , \ \left(\frac{1}{2}, -\frac{1}{2} \right), \] and the asymptotes are determined by the vectors \[ \left[\! \begin{array}{c} \frac{-2+\sqrt{2}}{4} \\ \frac{2+\sqrt{2}}{4} \end{array} \!\right] \qquad \text{and} \qquad \left[\! \begin{array}{c} \frac{2+\sqrt{2}}{4} \\ \frac{-2+\sqrt{2}}{4} \end{array} \!\right]. \]
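The conversions from $\mathcal{B}$-coordinates to standard coordinates in this item can be double-checked with a few lines of Python. This is a minimal sketch; the function names `to_standard` and `Q` are illustrative choices for this sketch. It applies $\underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$ to one vertex of each hyperbola and to the two asymptote directions, and then evaluates the original form at the results.

```python
import math

s = 1 / math.sqrt(2)

def to_standard(y1, y2):
    """Apply P_{E<-B}: B-coordinates (y1, y2) -> standard coordinates."""
    return (s * y1 - s * y2, s * y1 + s * y2)

def Q(x1, x2):
    """The quadratic form of Example 2 in standard coordinates."""
    return x1**2 + 6 * x1 * x2 + x2**2

vertex_pos = to_standard(0.5, 0.0)               # a vertex of Q = 1
vertex_neg = to_standard(0.0, math.sqrt(2) / 2)  # a vertex of Q = -1
asym_a = to_standard(0.5, math.sqrt(2) / 2)      # asymptote direction
asym_b = to_standard(0.5, -math.sqrt(2) / 2)     # asymptote direction

for name, x in [("vertex_pos", vertex_pos), ("vertex_neg", vertex_neg),
                ("asym_a", asym_a), ("asym_b", asym_b)]:
    print(name, x, Q(*x))
```

Up to rounding, the printed values of $Q$ should be $1$, $-1$, $0$ and $0$, and the printed points should agree with the vertices and asymptote vectors listed above.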

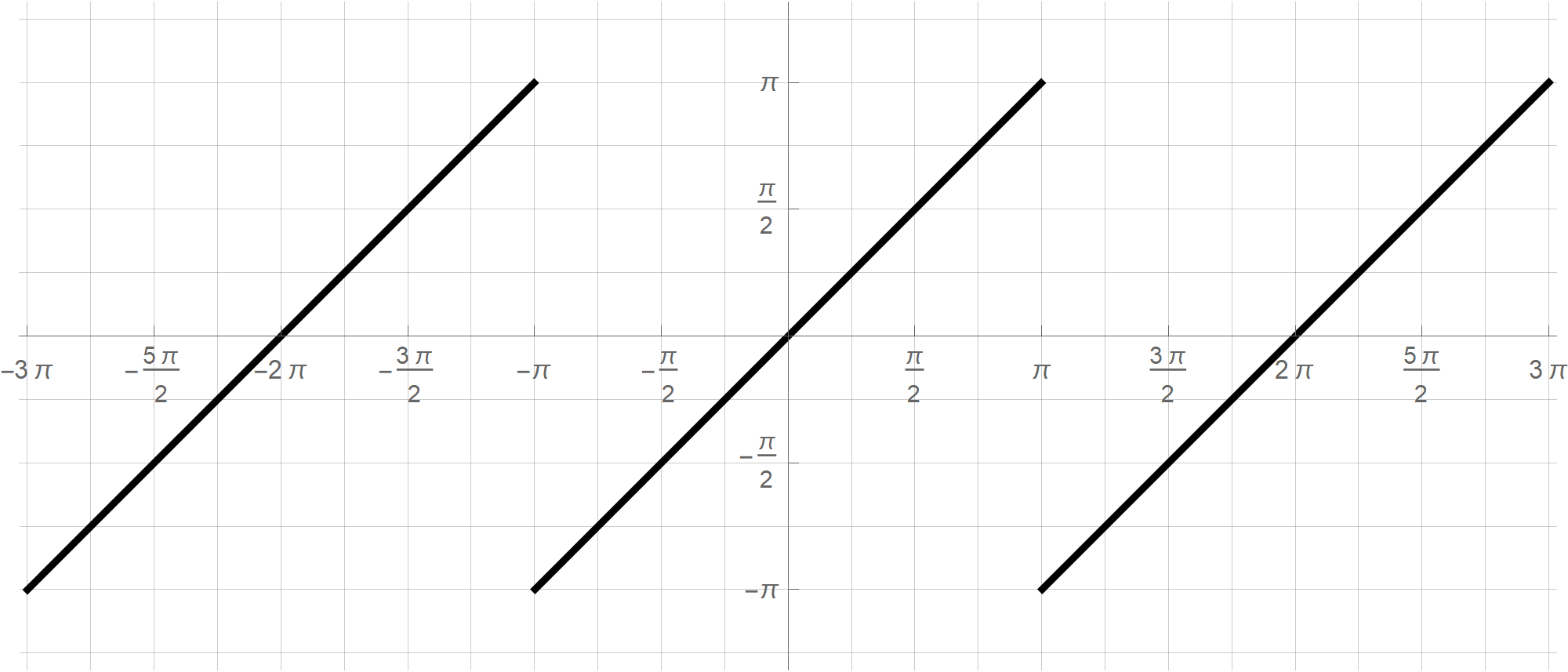

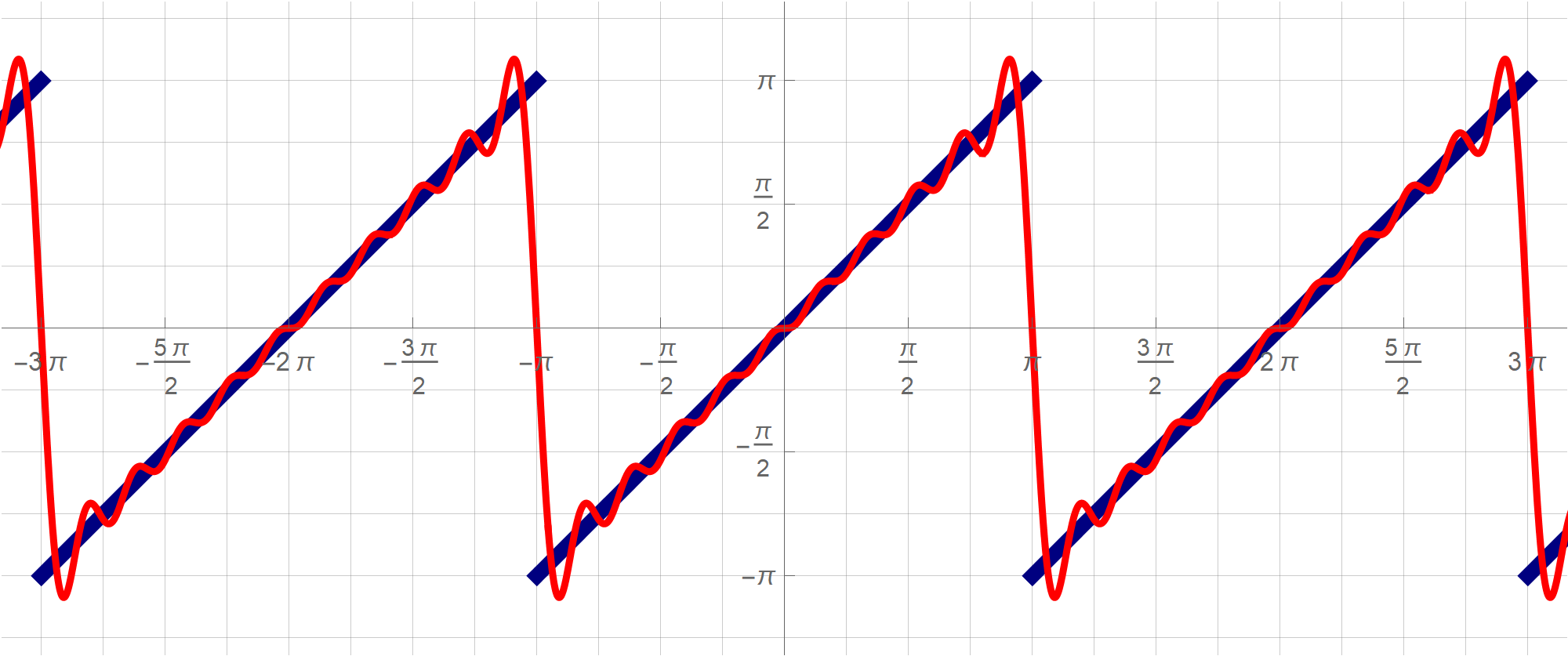

- T3 Since the change of coordinates matrices $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$ and $\displaystyle \underset{\mathcal{B}\leftarrow\mathcal{E}}{P}$ are orthogonal, we have \begin{align*} \| \mathbf{y} \|^2 &= \mathbf{y}^\top \mathbf{y} \\ & = \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}\right)^\top \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x}\right) \\ & = \mathbf{x}^\top \left(\underset{\mathcal{B}\leftarrow\mathcal{E}}{P}\right)^\top \underset{\mathcal{B}\leftarrow\mathcal{E}}{P} \mathbf{x} \\ & = \mathbf{x}^\top \mathbf{x} = \|\mathbf{x} \|^2. \end{align*} Therefore \begin{align*} S &= \Bigl\{ Q(\mathbf{x}) \, : \, \mathbf{x} \in \mathbb{R}^2, \ \| \mathbf{x} \| = 1 \Bigr\} \\ & = \Bigl\{ 4 y_1^2 - 2 y_2^2 \, : \, y_1^2 + y_2^2 = 1, \ y_1, y_2 \in\mathbb{R} \Bigr\}. \end{align*} Since \[ -2 = -2 y_1^2 - 2 y_2^2 \leq 4 y_1^2 - 2 y_2^2 \leq 4 y_1^2 + 4 y_2^2 = 4 \] whenever $y_1^2 + y_2^2 = 1$, we have that $\min S = -2$ and $\max S = 4$. On the unit circle the form $4 y_1^2 - 2 y_2^2$ takes the value $-2$ when $y_1 = 0, y_2 = 1$ or $y_1 = 0, y_2 = -1$, and the value $4$ when $y_1 = 1, y_2 = 0$ or $y_1 = -1, y_2 = 0$. Using the change of coordinates matrix $\displaystyle \underset{\mathcal{E}\leftarrow\mathcal{B}}{P}$ we conclude that \[ \bigl\{ \mathbf{x} \in \mathbb{R}^2 \, : \, \| \mathbf{x} \| = 1, \ Q(\mathbf{x}) = -2 \bigr\} = \left\{ \left[\! \begin{array}{c} -\frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} \end{array} \!\right], \left[\! \begin{array}{c} \frac{1}{\sqrt{2}} \\ -\frac{1}{\sqrt{2}} \end{array} \!\right] \right\} \] and \[ \bigl\{ \mathbf{x} \in \mathbb{R}^2 \, : \, \| \mathbf{x} \| = 1, \ Q(\mathbf{x}) = 4 \bigr\} = \left\{ \left[\! \begin{array}{c} \frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} \end{array} \!\right], \left[\! \begin{array}{c} -\frac{1}{\sqrt{2}} \\ -\frac{1}{\sqrt{2}} \end{array} \!\right] \right\}. \] This is illustrated in the picture below.

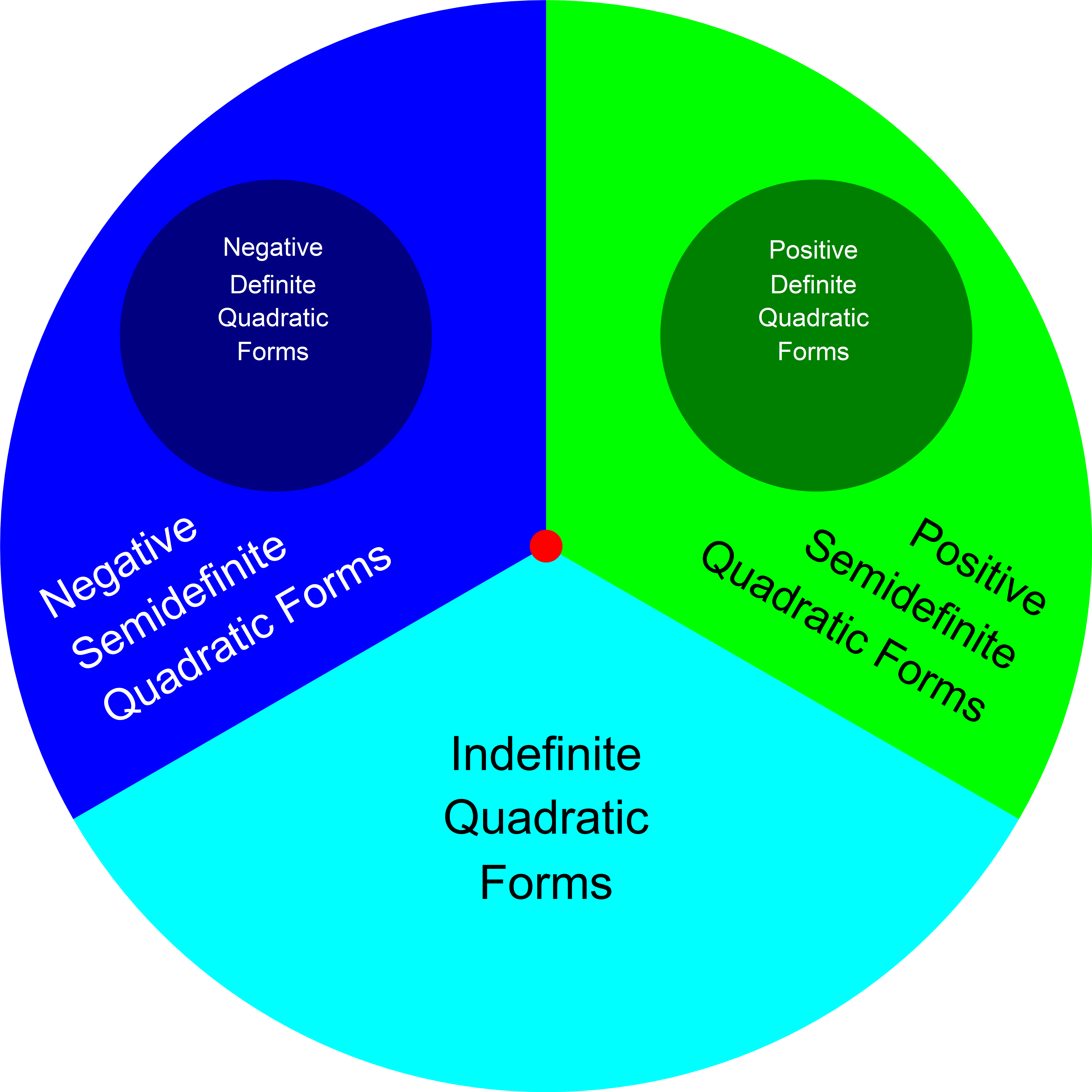
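The extreme values on the unit circle can also be observed numerically. The following is a rough sketch, not a proof: it samples the unit circle at `N` equally spaced angles (the value of `N` is an arbitrary choice made here) and records where the form of Example 2 is smallest and largest.

```python
import math

def Q(x1, x2):
    """The quadratic form of Example 2 in standard coordinates."""
    return x1**2 + 6 * x1 * x2 + x2**2

N = 3600  # number of sample angles; an arbitrary choice
samples = []
for k in range(N):
    t = 2 * math.pi * k / N
    x = (math.cos(t), math.sin(t))  # a unit vector
    samples.append((Q(*x), x))

q_min, x_min = min(samples)  # smallest sampled value and where it occurs
q_max, x_max = max(samples)  # largest sampled value and where it occurs

print(q_min, x_min)
print(q_max, x_max)
```

With this grid the sampled minimum and maximum should be very close to $-2$ and $4$, attained at unit vectors whose entries are $\pm\frac{1}{\sqrt{2}}$, in agreement with the sets found above.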

- By far the most popular quadratic form in any dimension \(n \in \mathbb{N}\) is the "dot square": \[ Q(\mathbf{x}) = x_1x_1 + \cdots + x_n x_n, \quad \mathbf{x} = \left[\!\begin{array}{c} x_1 \\ \vdots \\ x_n \end{array}\!\right] \in \mathbb{R}^n. \]

-

The second most popular quadratic form in dimension \(4\) is the determinant of \(2\times 2\) matrices. Here we identify a matrix \(\displaystyle \left[\!\begin{array}{cc} a & b \\ c & d \end{array}\!\right]\) with the vector \(\displaystyle \left[\!\begin{array}{c} a \\ b \\ c \\ d \end{array}\!\right]\) in \(\mathbb{R}^4\). The corresponding form is \[ Q(\mathbf{x}) = x_1x_4 - x_2 x_3, \quad \mathbf{x} = \left[\!\begin{array}{c} x_1 \\ x_2 \\ x_3 \\ x_4 \end{array}\!\right] \in \mathbb{R}^4. \]

- Let us analyze this form. First, identify the \(4\times 4\) matrix \(A\) such that \[ x_1x_4 - x_2 x_3 = \left[\!\begin{array}{c} x_1 \\ x_2 \\ x_3 \\ x_4 \end{array} \!\right]^\top \left[\!\begin{array}{cccc} \Box & \Box & \Box & \Box \\ \Box & \Box & \Box & \Box \\ \Box & \Box & \Box & \Box \\ \Box & \Box & \Box & \Box \end{array} \!\right] \left[\!\begin{array}{c} x_1 \\ x_2 \\ x_3 \\ x_4 \end{array} \!\right]. \] It is quite clear that \[ x_1x_4 - x_2 x_3 = \left[\!\begin{array}{c} x_1 \\ x_2 \\ x_3 \\ x_4 \end{array} \!\right]^\top \left[\!\begin{array}{cccc} 0 & 0 & 0 & \tfrac{1}{2} \\ 0 & 0 & -\tfrac{1}{2} & 0 \\ 0 & -\tfrac{1}{2} & 0 & 0 \\ \tfrac{1}{2} & 0 & 0 & 0 \end{array} \!\right] \left[\!\begin{array}{c} x_1 \\ x_2 \\ x_3 \\ x_4 \end{array} \!\right]. \]

- Thus \[ A = \left[\!\begin{array}{cccc} 0 & 0 & 0 & \tfrac{1}{2} \\ 0 & 0 & -\tfrac{1}{2} & 0 \\ 0 & -\tfrac{1}{2} & 0 & 0 \\ \tfrac{1}{2} & 0 & 0 & 0 \end{array} \!\right] = \frac{1}{2} \left[\!\begin{array}{cccc} 0 & 0 & 0 & 1 \\ 0 & 0 & -1 & 0 \\ 0 & -1 & 0 & 0 \\ 1 & 0 & 0 & 0 \end{array} \!\right]. \] The characteristic polynomial of \(A\) is \begin{align*} \left|\!\begin{array}{cccc} -\lambda & 0 & 0 & \tfrac{1}{2} \\ 0 & -\lambda & -\tfrac{1}{2} & 0 \\ 0 & -\tfrac{1}{2} & -\lambda & 0 \\ \tfrac{1}{2} & 0 & 0 & -\lambda \end{array} \!\right| & = \left|\!\begin{array}{cccc} 0 & 0 & 0 & \tfrac{1}{2} - 2 \lambda^2 \\ 0 & -\lambda & -\tfrac{1}{2} & 0 \\ 0 & -\tfrac{1}{2} & -\lambda & 0 \\ \tfrac{1}{2} & 0 & 0 & -\lambda \end{array} \!\right| \\ & = - \left( \frac{1}{2} - 2 \lambda^2\right) \left|\!\begin{array}{ccc} 0 & -\lambda & -\tfrac{1}{2} \\ 0 & -\tfrac{1}{2} & -\lambda \\ \tfrac{1}{2} & 0 & 0 \end{array} \!\right| \\ & = - \frac{1}{2} \left( \frac{1}{2} - 2 \lambda^2\right) \left|\!\begin{array}{cc} -\lambda & -\tfrac{1}{2} \\ -\tfrac{1}{2} & -\lambda \end{array} \!\right| \\ & = - \left( \frac{1}{4} -\lambda^2\right) \left( \lambda^2 - \frac{1}{4}\right) \\ & = \left( \lambda^2 - \frac{1}{4}\right)^2. \end{align*} Hence the eigenvalues of \(A\) are \[ \lambda_1 = -\frac{1}{2}, \quad \lambda_2 = \frac{1}{2}, \] both of multiplicity \(2\).

- Let us find the eigenvectors of \(A\). We use the method of guessing. Eigenvectors corresponding to \(\lambda_2 = \frac{1}{2}\): \[ \frac{1}{2} \left[\!\begin{array}{cccc} 0 & 0 & 0 & 1 \\ 0 & 0 & -1 & 0 \\ 0 & -1 & 0 & 0 \\ 1 & 0 & 0 & 0 \end{array} \!\right] \left[\!\begin{array}{c}1 \\ 0 \\ 0 \\ 1 \end{array} \!\right] = \frac{1}{2} \left[\!\begin{array}{c} 1 \\ 0 \\ 0 \\ 1 \end{array} \!\right], \quad \frac{1}{2} \left[\!\begin{array}{cccc} 0 & 0 & 0 & 1 \\ 0 & 0 & -1 & 0 \\ 0 & -1 & 0 & 0 \\ 1 & 0 & 0 & 0 \end{array} \!\right] \left[\!\begin{array}{c} 0 \\ -1 \\ 1 \\ 0 \end{array} \!\right] = \frac{1}{2} \left[\!\begin{array}{c} 0 \\ -1 \\ 1 \\ 0 \end{array} \!\right]. \] Eigenvectors corresponding to \(\lambda_1 = -\frac{1}{2}\): \[ \frac{1}{2} \left[\!\begin{array}{cccc} 0 & 0 & 0 & 1 \\ 0 & 0 & -1 & 0 \\ 0 & -1 & 0 & 0 \\ 1 & 0 & 0 & 0 \end{array} \!\right] \left[\!\begin{array}{c} 0 \\ 1 \\ 1 \\ 0 \end{array} \!\right] = -\frac{1}{2} \left[\!\begin{array}{c} 0 \\ 1 \\ 1 \\ 0 \end{array} \!\right], \quad \frac{1}{2} \left[\!\begin{array}{cccc} 0 & 0 & 0 & 1 \\ 0 & 0 & -1 & 0 \\ 0 & -1 & 0 & 0 \\ 1 & 0 & 0 & 0 \end{array} \!\right] \left[\!\begin{array}{c}1 \\ 0 \\ 0 \\ -1 \end{array} \!\right] = -\frac{1}{2} \left[\!\begin{array}{c} 1 \\ 0 \\ 0 \\ -1 \end{array} \!\right]. \]
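Since the eigenvectors were found by guessing, it is reassuring to verify them mechanically. Below is a minimal Python sketch; the helper `matvec`, the list `pairs`, and the test values `a, b, c, d` are illustrative choices for this sketch. It checks each guessed pair against the equation \(A\mathbf{v} = \lambda \mathbf{v}\) and also checks, on one sample matrix, that \(\mathbf{x}^\top A \mathbf{x}\) reproduces the determinant.

```python
def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

A = [[0.0, 0.0, 0.0, 0.5],
     [0.0, 0.0, -0.5, 0.0],
     [0.0, -0.5, 0.0, 0.0],
     [0.5, 0.0, 0.0, 0.0]]

def Q(v):
    """Evaluate the quadratic form v^T A v."""
    return sum(x * y for x, y in zip(v, matvec(A, v)))

# (eigenvalue, eigenvector) pairs guessed in the text
pairs = [(0.5, [1, 0, 0, 1]), (0.5, [0, -1, 1, 0]),
         (-0.5, [0, 1, 1, 0]), (-0.5, [1, 0, 0, -1])]

# A v == lambda v holds exactly here: all entries are multiples of 1/2
eigen_ok = all(matvec(A, v) == [lam * x for x in v] for lam, v in pairs)

a, b, c, d = 2, 3, 5, 7  # an arbitrary test matrix [[a, b], [c, d]]
det_ok = Q([a, b, c, d]) == a * d - b * c

print(eigen_ok, det_ok)
```

Exact equality is safe in this particular sketch only because every number involved is a small multiple of \(\tfrac{1}{2}\); with general floating-point data one would compare up to a tolerance instead.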